* [client] Fix flow client Receive retry loop not stopping after Close

  Use backoff.Permanent for canceled gRPC errors so Receive returns immediately instead of retrying until the context deadline when the connection is already closed. Add TestNewClient_PermanentClose to verify the behavior.

  The connectivity.Shutdown check was meaningless: when the connection is shut down, c.realClient.Events(ctx, grpc.WaitForReady(true)) on the next line already fails with codes.Canceled, which is now handled as a permanent error. The explicit state check only duplicated what gRPC already reports through its normal error path.

* [client] Remove WaitForReady from stream open call

  grpc.WaitForReady(true) parks the RPC call internally until the connection reaches READY, only unblocking on ctx cancellation. This means the external backoff.Retry loop in Receive() never gets control back during a connection outage: it cannot tick, log, or apply its retry intervals while WaitForReady is blocking.

  Removing it restores fail-fast behaviour: Events() returns immediately with codes.Unavailable when the connection is not ready, which is exactly what the backoff loop expects. The backoff becomes the single authority over retry timing and cadence, as originally intended.

* [client] Add connection recreation and improve flow client error handling

  Store gRPC dial options on the client to enable connection recreation on Internal errors (RST_STREAM/PROTOCOL_ERROR). Treat Unauthenticated, PermissionDenied, and Unimplemented as permanent failures. Unify mutex usage and add reconnection logging for better observability.

* [client] Remove Unauthenticated, PermissionDenied, and Unimplemented from permanent error handling

* [client] Fix error handling in Receive to properly re-establish stream and improve reconnection messaging

* Fix test

* [client] Add graceful shutdown handling and test for concurrent Close during Receive

  Prevent reconnection attempts after client closure by tracking a `closed` flag. Use `backoff.Permanent` for errors caused by operations on a closed client. Add a test to ensure `Close` does not block when `Receive` is actively running.

* [client] Fix connection swap to properly close old gRPC connection

  Close the old `gRPC.ClientConn` after successfully swapping to a new connection during reconnection.

* [client] Reset backoff

* [client] Ensure stream closure on error during initialization

* [client] Add test for handling server-side stream closure and reconnection

  Introduce `TestReceive_ServerClosesStream` to verify the client's ability to recover and process acknowledgments after the server closes the stream. Enhance test server with a controlled stream closure mechanism.

* [client] Add protocol error simulation and enhance reconnection test

  Introduce `connTrackListener` to simulate HTTP/2 RST_STREAM with PROTOCOL_ERROR for testing. Refactor and rename `TestReceive_ServerClosesStream` to `TestReceive_ProtocolErrorStreamReconnect` to verify client recovery on protocol errors.

* [client] Update Close error message in test for clarity

* [client] Fine-tune the tests

* [client] Adjust connection tracking in reconnection test

* [client] Wait for Events handler to exit in RST_STREAM reconnection test

  Ensure the old `Events` handler exits fully before proceeding in the reconnection test to avoid dropped acknowledgments on a broken stream. Add a `handlerDone` channel to synchronize handler exits.

* [client] Prevent panic on nil connection during Close

* [client] Refactor connection handling to use explicit target tracking

  Introduce `target` field to store the gRPC connection target directly, simplifying reconnections and ensuring consistent connection reuse logic.

* [client] Rename `isCancellation` to `isContextDone` and extend handling for `DeadlineExceeded`

  Refactor error handling to include `DeadlineExceeded` scenarios alongside `Canceled`. Update related condition checks for consistency.

* [client] Add connection generation tracking to prevent stale reconnections

  Introduce `connGen` to track connection generations and ensure that stale `recreateConnection` calls do not override newer connections. Update stream establishment and reconnection logic to incorporate generation validation.

* [client] Add backoff reset condition to prevent short-lived retry cycles

  Refine backoff reset logic to ensure it only occurs for sufficiently long-lived stream connections, avoiding interference with `MaxElapsedTime`.

* [client] Introduce `minHealthyDuration` to refine backoff reset logic

  Add `minHealthyDuration` constant to ensure stream retries only reset the backoff timer if the stream survives beyond a minimum duration. Prevents unhealthy, short-lived streams from interfering with `MaxElapsedTime`.

* [client] IPv6 friendly connection

  parsedURL.Hostname() strips IPv6 brackets. For http://[::1]:443, this turns it into ::1:443, which is not a valid host:port target for gRPC. Additionally, fmt.Sprintf("%s:%s", hostname, port) produces a trailing colon when the URL has no explicit port: http://example.com becomes example.com:. Both cases break the initial dial and reconnect paths. Use parsedURL.Host directly instead.

* [client] Add `handlerStarted` channel to synchronize stream establishment in tests

  Introduce `handlerStarted` channel in the test server to signal when the server-side handler begins, ensuring robust synchronization between client and server during stream establishment. Update relevant test cases to wait for this signal before proceeding.

* [client] Replace `receivedAcks` map with atomic counter and improve stream establishment sync in tests

  Refactor acknowledgment tracking in tests to use an `atomic.Int32` counter instead of a map. Replace fixed sleep with robust synchronization by waiting on `handlerStarted` signal for stream establishment.

* [client] Extract `handleReceiveError` to simplify receive logic

  Refactor error handling in `receive` to a dedicated `handleReceiveError` method. Streamlines the main logic and isolates error recovery, including backoff reset and connection recreation.

* [client] Recreate gRPC ClientConn on every retry to prevent dual backoff

  The flow client had two competing retry loops: our custom exponential backoff and gRPC's internal subchannel reconnection. When establishStream failed, the same ClientConn was reused, allowing gRPC's internal backoff state to accumulate and control dial timing independently.

  Changes:

  - Consolidate error handling into handleRetryableError, which now handles context cancellation, permanent errors, backoff reset, and connection recreation in a single path
  - Call recreateConnection on every retryable error so each retry gets a fresh ClientConn with no internal backoff state
  - Remove connGen tracking since Receive is sequential and protected by a new receiving guard against concurrent calls
  - Reduce RandomizationFactor from 1 to 0.5 to avoid near-zero backoff intervals

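The IPv6 issue described in the commit message above can be reproduced with `net/url` from the Go standard library. The helper names `brokenTarget` and `hostTarget` are made up for this sketch; only the `Hostname()`, `Port()`, and `Host` behaviour comes from the library itself.

```go
package main

import (
	"fmt"
	"net/url"
)

// brokenTarget mirrors the old approach: Hostname() and Port() joined by a colon.
func brokenTarget(raw string) (string, error) {
	u, err := url.Parse(raw)
	if err != nil {
		return "", err
	}
	return fmt.Sprintf("%s:%s", u.Hostname(), u.Port()), nil
}

// hostTarget uses the Host field as-is, which keeps IPv6 brackets
// and adds no colon when the URL has no explicit port.
func hostTarget(raw string) (string, error) {
	u, err := url.Parse(raw)
	if err != nil {
		return "", err
	}
	return u.Host, nil
}

func main() {
	for _, raw := range []string{"http://[::1]:443", "http://example.com"} {
		broken, _ := brokenTarget(raw)
		fixed, _ := hostTarget(raw)
		fmt.Printf("%-18s old=%q new=%q\n", raw, broken, fixed)
	}
}
```

For the two URLs in the commit message, the old approach yields `::1:443` and `example.com:`, while `Host` yields `[::1]:443` and `example.com`.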
Start using NetBird at netbird.io

See Documentation

Join our Slack channel or our Community forum

🚀 We are hiring! Join us at careers.netbird.io

New: NetBird terraform provider

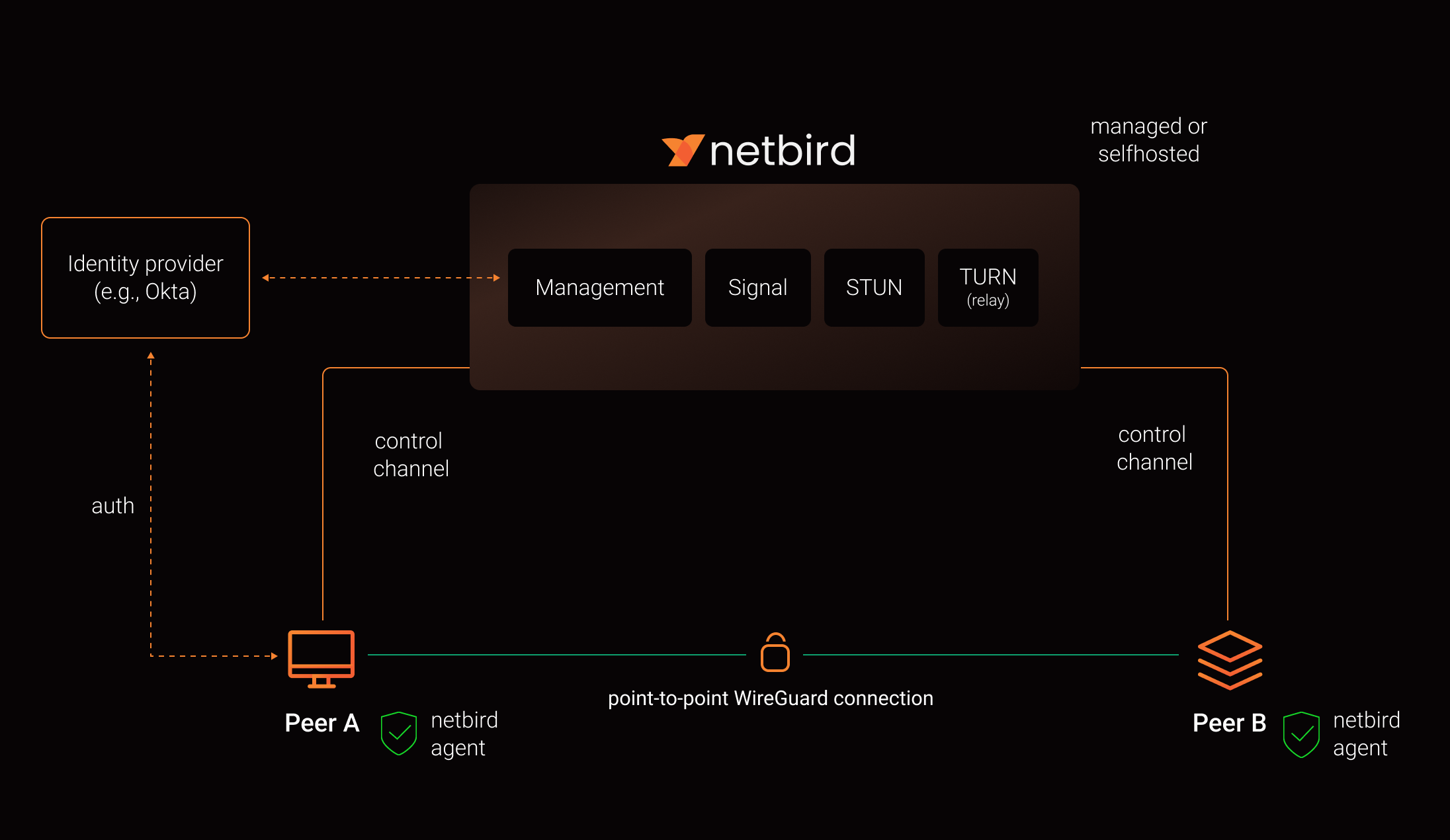

NetBird combines a configuration-free peer-to-peer private network and a centralized access control system in a single platform, making it easy to create secure private networks for your organization or home.

Connect. NetBird creates a WireGuard-based overlay network that automatically connects your machines over an encrypted tunnel, leaving behind the hassle of opening ports, complex firewall rules, VPN gateways, and so forth.

Secure. NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

Open Source Network Security in a Single Platform

https://github.com/user-attachments/assets/10cec749-bb56-4ab3-97af-4e38850108d2

Self-Host NetBird (Video)

Key features

| Connectivity | Management | Security | Automation | Platforms |
|---|---|---|---|---|

Quickstart with NetBird Cloud

- Download and install NetBird at https://app.netbird.io/install

- Follow the steps to sign up with Google, Microsoft, GitHub or your email address.

- Check the NetBird admin UI.

- Add more machines.

Quickstart with self-hosted NetBird

This is the quickest way to try self-hosted NetBird. It should take around 5 minutes to get started if you already have a public domain and a VM. Follow the Advanced guide with a custom identity provider for installations with different IDPs.

Infrastructure requirements:

- A Linux VM with at least 1 CPU and 2 GB of memory.

- The VM should be publicly accessible on TCP ports 80 and 443 and UDP port 3478.

- Public domain name pointing to the VM.

Software requirements:

- Docker installed on the VM with the docker-compose plugin (Docker installation guide), or Docker with docker-compose version 2 or higher.

- jq installed. In most distributions it is available in the official repositories and can be installed with `sudo apt install jq` or `sudo yum install jq`.

- curl installed. It is usually available in the official repositories and can be installed with `sudo apt install curl` or `sudo yum install curl`.

Steps

- Download and run the installation script:

export NETBIRD_DOMAIN=netbird.example.com; curl -fsSL https://github.com/netbirdio/netbird/releases/latest/download/getting-started.sh | bash

- Once finished, you can manage the resources via `docker-compose`.

A bit on NetBird internals

- Every machine in the network runs a NetBird Agent (or Client) that manages WireGuard.

- Every agent connects to the Management Service, which holds network state, manages peer IPs, and distributes network updates to agents (peers).

- The NetBird agent uses WebRTC ICE, implemented in the pion/ice library, to discover connection candidates when establishing a peer-to-peer connection between machines.

- Connection candidates are discovered with the help of STUN servers.

- Agents negotiate a connection through the Signal Service by passing p2p encrypted messages with candidates.

- Sometimes NAT traversal is unsuccessful due to strict NATs (e.g. mobile carrier-grade NAT) and a p2p connection isn't possible. When this occurs, the system falls back to a relay server called TURN, and a secure WireGuard tunnel is established via the TURN server.

Coturn has been used successfully for STUN and TURN in NetBird setups.
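The fallback from a direct path to a relay can be pictured as choosing the most preferred candidate that actually works. The sketch below is a deliberately simplified model and not the pion/ice API: the `candidate` type, the `reachable` flag, and `pick` are hypothetical, and real ICE ranks candidate pairs rather than single candidates. The type preference numbers are the values recommended in RFC 8445, and the addresses are documentation examples.

```go
package main

import (
	"fmt"
	"sort"
)

// candidate is a simplified, hypothetical model of an ICE connection candidate.
type candidate struct {
	kind      string // "host", "srflx" (learned via STUN) or "relay" (via TURN)
	addr      string
	reachable bool // whether a connectivity check for this candidate succeeded
}

// typePreference returns the RFC 8445 recommended preference; higher wins.
func typePreference(kind string) int {
	switch kind {
	case "host":
		return 126
	case "srflx":
		return 100
	default: // "relay"
		return 0
	}
}

// pick returns the most preferred reachable candidate, if any.
func pick(cands []candidate) (candidate, bool) {
	sorted := append([]candidate(nil), cands...) // leave the caller's slice untouched
	sort.SliceStable(sorted, func(i, j int) bool {
		return typePreference(sorted[i].kind) > typePreference(sorted[j].kind)
	})
	for _, c := range sorted {
		if c.reachable {
			return c, true
		}
	}
	return candidate{}, false
}

func main() {
	cands := []candidate{
		{kind: "relay", addr: "198.51.100.7:49152", reachable: true},
		{kind: "srflx", addr: "203.0.113.5:51820", reachable: false}, // blocked by a strict NAT
		{kind: "host", addr: "192.168.1.10:51820", reachable: false},
	}
	if c, ok := pick(cands); ok {
		fmt.Println("selected:", c.kind, c.addr)
	}
}
```

Because the relay candidate has the lowest preference, it is selected only when no direct candidate passes its check, which matches the strict-NAT case described above.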

See a complete architecture overview for details.

Community projects

- NetBird installer script

- NetBird ansible collection by Dominion Solutions

- netbird-tui — terminal UI for managing NetBird peers, routes, and settings

Note: The main branch may be in an unstable or even broken state during development.

For stable versions, see releases.

Support acknowledgement

In November 2022, NetBird joined the StartUpSecure program sponsored by The Federal Ministry of Education and Research of The Federal Republic of Germany. Together with CISPA Helmholtz Center for Information Security, NetBird brings security best practices and simplicity to private networking.

Testimonials

We use open-source technologies like WireGuard®, Pion ICE (WebRTC), Coturn, and Rosenpass. We very much appreciate the work these guys are doing and we'd greatly appreciate it if you could support them in any way (e.g., by giving a star or a contribution).

Legal

This repository is licensed under the BSD-3-Clause license, which applies to all parts of the repository except for the directories management/, signal/ and relay/. Those directories are licensed under the GNU Affero General Public License version 3.0 (AGPLv3). See the respective LICENSE files inside each directory.

WireGuard and the WireGuard logo are registered trademarks of Jason A. Donenfeld.