mirror of

https://github.com/netbirdio/netbird.git

synced 2026-05-08 01:39:55 +00:00

Compare commits

30 Commits

test/trans...test/engin

| Author | SHA1 | Date | |
|---|---|---|---|
| | c495eaa549 | | |
| | b8026ad541 | | |
| | 6ce09bca16 | | |
| | b79c1d64cc | | |
| | a5deeda727 | | |
| | 5b2d5f8df1 | | |
| | 6369706ade | | |
| | b1eda43f4b | | |
| | d4ef84fe6e | | |
| | e3dfbe5acf | | |
| | deeb05047d | | |
| | 1814b07a4b | | |
| | b04d19bb0a | | |
| | 44e8107383 | | |
| | 2c1f5e46d5 | | |
| | 20815c9f90 | | |
| | ba3cdb30ee | | |
| | 1f25bb0751 | | |
| | 9e7aac3a56 | | |
| | 718d9526a7 | | |
| | 48184ecf21 | | |
| | f18ae8b925 | | |
| | 90d9dd4c08 | | |
| | dbec24b520 | | |
| | f603cd9202 | | |
| | 5897a48e29 | | |
| | 8bf729c7b4 | | |
| | 7f09b39769 | | |
| | acad98e328 | | |
| | 9d75cc3273 | | |

2

.github/workflows/golang-test-linux.yml

vendored


@@ -16,7 +16,7 @@ jobs:
       matrix:
         arch: [ '386','amd64' ]
         store: [ 'sqlite', 'postgres']
-    runs-on: ubuntu-latest
+    runs-on: ubuntu-22.04
     steps:
       - name: Install Go
         uses: actions/setup-go@v5

2

.github/workflows/release.yml

vendored


@@ -20,7 +20,7 @@ concurrency:
 
 jobs:
   release:
-    runs-on: ubuntu-latest
+    runs-on: ubuntu-22.04
     env:
       flags: ""
     steps:

@@ -49,6 +49,8 @@

|

||||

|

||||

|

||||

|

||||

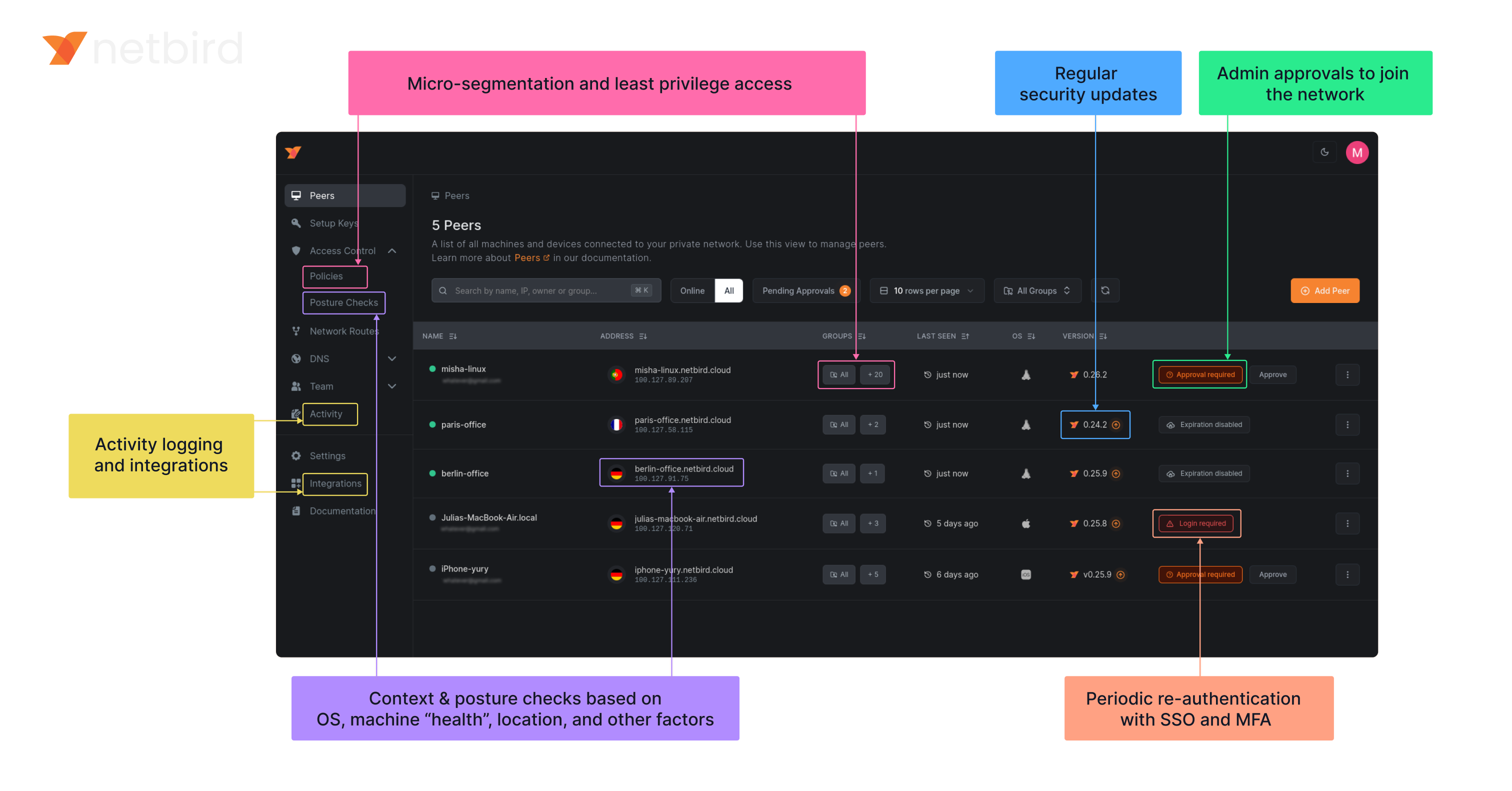

### NetBird on Lawrence Systems (Video)

|

||||

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||

|

||||

### Key features

|

||||

|

||||

@@ -62,6 +64,7 @@

|

||||

| | | <ul><li> - \[x] [Quantum-resistance with Rosenpass](https://netbird.io/knowledge-hub/the-first-quantum-resistant-mesh-vpn) </ul></li> | | <ul><li> - \[x] OpenWRT </ul></li> |

|

||||

| | | <ui><li> - \[x] [Periodic re-authentication](https://docs.netbird.io/how-to/enforce-periodic-user-authentication)</ul></li> | | <ul><li> - \[x] [Serverless](https://docs.netbird.io/how-to/netbird-on-faas) </ul></li> |

|

||||

| | | | | <ul><li> - \[x] Docker </ul></li> |

|

||||

|

||||

### Quickstart with NetBird Cloud

|

||||

|

||||

- Download and install NetBird at [https://app.netbird.io/install](https://app.netbird.io/install)

|

||||

|

||||

@@ -269,12 +269,6 @@ func (c *ConnectClient) run(
 		checks := loginResp.GetChecks()
 
 		c.engineMutex.Lock()
-		if c.engine != nil && c.engine.ctx.Err() != nil {
-			log.Info("Stopping Netbird Engine")
-			if err := c.engine.Stop(); err != nil {
-				log.Errorf("Failed to stop engine: %v", err)
-			}
-		}
 		c.engine = NewEngineWithProbes(engineCtx, cancel, signalClient, mgmClient, relayManager, engineConfig, mobileDependency, c.statusRecorder, probes, checks)
 
 		c.engineMutex.Unlock()

@@ -294,6 +288,15 @@ func (c *ConnectClient) run(
 		}
 
 		<-engineCtx.Done()
+		c.engineMutex.Lock()
+		if c.engine != nil && c.engine.wgInterface != nil {
+			log.Infof("ensuring %s is removed, Netbird engine context cancelled", c.engine.wgInterface.Name())
+			if err := c.engine.Stop(); err != nil {
+				log.Errorf("Failed to stop engine: %v", err)
+			}
+			c.engine = nil
+		}
+		c.engineMutex.Unlock()
 		c.statusRecorder.ClientTeardown()
 
 		backOff.Reset()

@@ -141,7 +141,7 @@ type Engine struct {
 	ctx    context.Context
 	cancel context.CancelFunc
 
-	wgInterface    iface.IWGIface
+	wgInterface    IWGIface
 	wgProxyFactory *wgproxy.Factory
 
 	udpMux *bind.UniversalUDPMuxDefault

@@ -251,6 +251,13 @@ func (e *Engine) Stop() error {
 	}
 	log.Info("Network monitor: stopped")
 
+	// stop/restore DNS first so dbus and friends don't complain because of a missing interface
+	e.stopDNSServer()
+
+	if e.routeManager != nil {
+		e.routeManager.Stop()
+	}
+
 	err := e.removeAllPeers()
 	if err != nil {
 		return fmt.Errorf("failed to remove all peers: %s", err)

@@ -319,7 +326,7 @@ func (e *Engine) Start() error {
 	}
 	e.dnsServer = dnsServer
 
-	e.routeManager = routemanager.NewManager(e.ctx, e.config.WgPrivateKey.PublicKey().String(), e.config.DNSRouteInterval, e.wgInterface, e.statusRecorder, e.relayManager, initialRoutes)
+	e.routeManager = routemanager.NewManager(e.ctx, e.config.WgPrivateKey.PublicKey().String(), e.config.DNSRouteInterval, e.wgInterface.(*iface.WGIface), e.statusRecorder, e.relayManager, initialRoutes)
 	beforePeerHook, afterPeerHook, err := e.routeManager.Init()
 	if err != nil {
 		log.Errorf("Failed to initialize route manager: %s", err)

@@ -914,7 +921,7 @@ func (e *Engine) createPeerConn(pubKey string, allowedIPs string) (*peer.Conn, e
 	wgConfig := peer.WgConfig{
 		RemoteKey:    pubKey,
 		WgListenPort: e.config.WgPort,
-		WgInterface:  e.wgInterface,
+		WgInterface:  e.wgInterface.(*iface.WGIface),
 		AllowedIps:   allowedIPs,
 		PreSharedKey: e.config.PreSharedKey,
 	}

@@ -1116,18 +1123,12 @@ func (e *Engine) close() {
 		}
 	}
 
-	// stop/restore DNS first so dbus and friends don't complain because of a missing interface
-	e.stopDNSServer()
-
-	if e.routeManager != nil {
-		e.routeManager.Stop()
-	}
-
 	log.Debugf("removing Netbird interface %s", e.config.WgIfaceName)
 	if e.wgInterface != nil {
 		if err := e.wgInterface.Close(); err != nil {
 			log.Errorf("failed closing Netbird interface %s %v", e.config.WgIfaceName, err)
 		}
+		e.wgInterface = nil
 	}
 
 	if !isNil(e.sshServer) {

@@ -1395,7 +1396,7 @@ func (e *Engine) startNetworkMonitor() {
 			}
 
 			// Set a new timer to debounce rapid network changes
-			debounceTimer = time.AfterFunc(1*time.Second, func() {
+			debounceTimer = time.AfterFunc(2*time.Second, func() {
 				// This function is called after the debounce period
 				mu.Lock()
 				defer mu.Unlock()

@@ -1426,6 +1427,11 @@ func (e *Engine) addrViaRoutes(addr netip.Addr) (bool, netip.Prefix, error) {
 }
 
 func (e *Engine) stopDNSServer() {
+	if e.dnsServer == nil {
+		return
+	}
+	e.dnsServer.Stop()
+	e.dnsServer = nil
 	err := fmt.Errorf("DNS server stopped")
 	nsGroupStates := e.statusRecorder.GetDNSStates()
 	for i := range nsGroupStates {

@@ -1433,10 +1439,6 @@ func (e *Engine) stopDNSServer() {
 		nsGroupStates[i].Error = err
 	}
 	e.statusRecorder.UpdateDNSStates(nsGroupStates)
-	if e.dnsServer != nil {
-		e.dnsServer.Stop()
-		e.dnsServer = nil
-	}
 }
 
 // isChecksEqual checks if two slices of checks are equal.

@@ -242,13 +242,13 @@ func TestEngine_UpdateNetworkMap(t *testing.T) {
 		peer.NewRecorder("https://mgm"),
 		nil)
 
-	wgIface := &iface.MockWGIface{
+	wgIface := &MockWGIface{
 		RemovePeerFunc: func(peerKey string) error {
 			return nil
 		},
 	}
 	engine.wgInterface = wgIface
-	engine.routeManager = routemanager.NewManager(ctx, key.PublicKey().String(), time.Minute, engine.wgInterface, engine.statusRecorder, relayMgr, nil)
+	engine.routeManager = routemanager.NewManager(ctx, key.PublicKey().String(), time.Minute, engine.wgInterface.(*iface.WGIface), engine.statusRecorder, relayMgr, nil)
 	engine.dnsServer = &dns.MockServer{
 		UpdateDNSServerFunc: func(serial uint64, update nbdns.Config) error { return nil },
 	}
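Several hunks above replace an interface-typed argument with a single-value type assertion such as `engine.wgInterface.(*iface.WGIface)`. In Go, that form panics at run time when the dynamic type differs, which appears to be the situation in this test hunk, where the field was just assigned a mock. The sketch below uses hypothetical stand-in types (`wgIface`, `realIface`, `mockIface` are illustrative, not NetBird's types) to show the comma-ok form, which reports the mismatch instead of panicking.

```go
package main

import "fmt"

// wgIface is a hypothetical stand-in for an interface such as IWGIface.
type wgIface interface {
	Name() string
}

// realIface and mockIface are illustrative implementations only.
type realIface struct{ name string }

func (r *realIface) Name() string { return r.name }

type mockIface struct{}

func (m *mockIface) Name() string { return "mock" }

// asReal uses the comma-ok assertion, so a mismatched dynamic type
// yields ok == false instead of a run-time panic.
func asReal(i wgIface) (*realIface, bool) {
	r, ok := i.(*realIface)
	return r, ok
}

func main() {
	_, ok := asReal(&realIface{name: "wt0"})
	fmt.Println("real implementation asserts:", ok)

	_, ok = asReal(&mockIface{})
	fmt.Println("mock implementation asserts:", ok)
}
```

Whether the callee should accept the interface rather than the concrete pointer is a design question for the change itself; the sketch only illustrates the failure mode.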

@@ -1,4 +1,4 @@
-package iface
+package internal
 
 import (
 	"net"
@@ -1,6 +1,6 @@
 //go:build !windows
 
-package iface
+package internal
 
 import (
 	"net"
@@ -1,4 +1,4 @@
-package iface
+package internal
 
 import (
 	"net"

@@ -32,12 +32,14 @@ const (
 	connPriorityRelay   ConnPriority = 1
 	connPriorityICETurn ConnPriority = 1
 	connPriorityICEP2P  ConnPriority = 2
+
+	reconnectMaxElapsedTime = 30 * time.Minute
 )
 
 type WgConfig struct {
 	WgListenPort int
 	RemoteKey    string
-	WgInterface  iface.IWGIface
+	WgInterface  *iface.WGIface
 	AllowedIps   string
 	PreSharedKey *wgtypes.Key
 }

@@ -80,9 +82,8 @@ type Conn struct {
 	config         ConnConfig
 	statusRecorder *Status
 	wgProxyFactory *wgproxy.Factory
-	wgProxyICE     wgproxy.Proxy
-	wgProxyRelay   wgproxy.Proxy
 	signaler       *Signaler
+	iFaceDiscover  stdnet.ExternalIFaceDiscover
 	relayManager   *relayClient.Manager
 	allowedIPsIP   string
 	handshaker     *Handshaker

@@ -103,11 +104,14 @@ type Conn struct {
 	beforeAddPeerHooks   []nbnet.AddHookFunc
 	afterRemovePeerHooks []nbnet.RemoveHookFunc
 
-	endpointRelay *net.UDPAddr
+	wgProxyICE   wgproxy.Proxy
+	wgProxyRelay wgproxy.Proxy
 
 	// for reconnection operations
 	iCEDisconnected   chan bool
 	relayDisconnected chan bool
+	connMonitor       *ConnMonitor
+	reconnectCh       <-chan struct{}
 }
 
 // NewConn creates a new not opened Conn to the remote peer.

@@ -123,21 +127,31 @@ func NewConn(engineCtx context.Context, config ConnConfig, statusRecorder *Statu
 	connLog := log.WithField("peer", config.Key)
 
 	var conn = &Conn{
-		log:               connLog,
-		ctx:               ctx,
-		ctxCancel:         ctxCancel,
-		config:            config,
-		statusRecorder:    statusRecorder,
-		wgProxyFactory:    wgProxyFactory,
-		signaler:          signaler,
-		relayManager:      relayManager,
-		allowedIPsIP:      allowedIPsIP.String(),
-		statusRelay:       NewAtomicConnStatus(),
-		statusICE:         NewAtomicConnStatus(),
+		log:            connLog,
+		ctx:            ctx,
+		ctxCancel:      ctxCancel,
+		config:         config,
+		statusRecorder: statusRecorder,
+		wgProxyFactory: wgProxyFactory,
+		signaler:       signaler,
+		iFaceDiscover:  iFaceDiscover,
+		relayManager:   relayManager,
+		allowedIPsIP:   allowedIPsIP.String(),
+		statusRelay:    NewAtomicConnStatus(),
+		statusICE:      NewAtomicConnStatus(),
+
 		iCEDisconnected:   make(chan bool, 1),
 		relayDisconnected: make(chan bool, 1),
 	}
 
+	conn.connMonitor, conn.reconnectCh = NewConnMonitor(
+		signaler,
+		iFaceDiscover,
+		config,
+		conn.relayDisconnected,
+		conn.iCEDisconnected,
+	)
+
 	rFns := WorkerRelayCallbacks{
 		OnConnReady:    conn.relayConnectionIsReady,
 		OnDisconnected: conn.onWorkerRelayStateDisconnected,

@@ -200,6 +214,8 @@ func (conn *Conn) startHandshakeAndReconnect() {
 		conn.log.Errorf("failed to send initial offer: %v", err)
 	}
 
+	go conn.connMonitor.Start(conn.ctx)
+
 	if conn.workerRelay.IsController() {
 		conn.reconnectLoopWithRetry()
 	} else {

@@ -240,8 +256,7 @@ func (conn *Conn) Close() {
 		conn.wgProxyICE = nil
 	}
 
-	err := conn.config.WgConfig.WgInterface.RemovePeer(conn.config.WgConfig.RemoteKey)
-	if err != nil {
+	if err := conn.removeWgPeer(); err != nil {
 		conn.log.Errorf("failed to remove wg endpoint: %v", err)
 	}
 

@@ -309,12 +324,14 @@ func (conn *Conn) reconnectLoopWithRetry() {

|

||||

// With it, we can decrease to send necessary offer

|

||||

select {

|

||||

case <-conn.ctx.Done():

|

||||

return

|

||||

case <-time.After(3 * time.Second):

|

||||

}

|

||||

|

||||

ticker := conn.prepareExponentTicker()

|

||||

defer ticker.Stop()

|

||||

time.Sleep(1 * time.Second)

|

||||

|

||||

for {

|

||||

select {

|

||||

case t := <-ticker.C:

|

||||

@@ -342,20 +359,11 @@ func (conn *Conn) reconnectLoopWithRetry() {
 			if err != nil {
 				conn.log.Errorf("failed to do handshake: %v", err)
 			}
-		case changed := <-conn.relayDisconnected:
-			if !changed {
-				continue
-			}
-			conn.log.Debugf("Relay state changed, reset reconnect timer")
-			ticker.Stop()
-			ticker = conn.prepareExponentTicker()
-		case changed := <-conn.iCEDisconnected:
-			if !changed {
-				continue
-			}
-			conn.log.Debugf("ICE state changed, reset reconnect timer")
+
+		case <-conn.reconnectCh:
 			ticker.Stop()
 			ticker = conn.prepareExponentTicker()
+
 		case <-conn.ctx.Done():
 			conn.log.Debugf("context is done, stop reconnect loop")
 			return

@@ -366,10 +374,10 @@ func (conn *Conn) reconnectLoopWithRetry() {
 func (conn *Conn) prepareExponentTicker() *backoff.Ticker {
 	bo := backoff.WithContext(&backoff.ExponentialBackOff{
 		InitialInterval:     800 * time.Millisecond,
-		RandomizationFactor: 0.01,
+		RandomizationFactor: 0.1,
 		Multiplier:          2,
 		MaxInterval:         conn.config.Timeout,
-		MaxElapsedTime:      0,
+		MaxElapsedTime:      reconnectMaxElapsedTime,
 		Stop:                backoff.Stop,
 		Clock:               backoff.SystemClock,
 	}, conn.ctx)

@@ -420,54 +428,59 @@ func (conn *Conn) iCEConnectionIsReady(priority ConnPriority, iceConnInfo ICECon

|

||||

|

||||

conn.log.Debugf("ICE connection is ready")

|

||||

|

||||

conn.statusICE.Set(StatusConnected)

|

||||

|

||||

defer conn.updateIceState(iceConnInfo)

|

||||

|

||||

if conn.currentConnPriority > priority {

|

||||

conn.statusICE.Set(StatusConnected)

|

||||

conn.updateIceState(iceConnInfo)

|

||||

return

|

||||

}

|

||||

|

||||

conn.log.Infof("set ICE to active connection")

|

||||

|

||||

endpoint, wgProxy, err := conn.getEndpointForICEConnInfo(iceConnInfo)

|

||||

if err != nil {

|

||||

return

|

||||

var (

|

||||

ep *net.UDPAddr

|

||||

wgProxy wgproxy.Proxy

|

||||

err error

|

||||

)

|

||||

if iceConnInfo.RelayedOnLocal {

|

||||

wgProxy, err = conn.newProxy(iceConnInfo.RemoteConn)

|

||||

if err != nil {

|

||||

conn.log.Errorf("failed to add turn net.Conn to local proxy: %v", err)

|

||||

return

|

||||

}

|

||||

ep = wgProxy.EndpointAddr()

|

||||

conn.wgProxyICE = wgProxy

|

||||

} else {

|

||||

directEp, err := net.ResolveUDPAddr("udp", iceConnInfo.RemoteConn.RemoteAddr().String())

|

||||

if err != nil {

|

||||

log.Errorf("failed to resolveUDPaddr")

|

||||

conn.handleConfigurationFailure(err, nil)

|

||||

return

|

||||

}

|

||||

ep = directEp

|

||||

}

|

||||

|

||||

endpointUdpAddr, _ := net.ResolveUDPAddr(endpoint.Network(), endpoint.String())

|

||||

conn.log.Debugf("Conn resolved IP is %s for endopint %s", endpoint, endpointUdpAddr.IP)

|

||||

|

||||

conn.connIDICE = nbnet.GenerateConnID()

|

||||

for _, hook := range conn.beforeAddPeerHooks {

|

||||

if err := hook(conn.connIDICE, endpointUdpAddr.IP); err != nil {

|

||||

conn.log.Errorf("Before add peer hook failed: %v", err)

|

||||

}

|

||||

if err := conn.runBeforeAddPeerHooks(ep.IP); err != nil {

|

||||

conn.log.Errorf("Before add peer hook failed: %v", err)

|

||||

}

|

||||

|

||||

conn.workerRelay.DisableWgWatcher()

|

||||

|

||||

err = conn.configureWGEndpoint(endpointUdpAddr)

|

||||

if err != nil {

|

||||

if wgProxy != nil {

|

||||

if err := wgProxy.CloseConn(); err != nil {

|

||||

conn.log.Warnf("Failed to close turn connection: %v", err)

|

||||

}

|

||||

}

|

||||

conn.log.Warnf("Failed to update wg peer configuration: %v", err)

|

||||

if conn.wgProxyRelay != nil {

|

||||

conn.wgProxyRelay.Pause()

|

||||

}

|

||||

|

||||

if wgProxy != nil {

|

||||

wgProxy.Work()

|

||||

}

|

||||

|

||||

if err = conn.configureWGEndpoint(ep); err != nil {

|

||||

conn.handleConfigurationFailure(err, wgProxy)

|

||||

return

|

||||

}

|

||||

wgConfigWorkaround()

|

||||

|

||||

if conn.wgProxyICE != nil {

|

||||

if err := conn.wgProxyICE.CloseConn(); err != nil {

|

||||

conn.log.Warnf("failed to close deprecated wg proxy conn: %v", err)

|

||||

}

|

||||

}

|

||||

conn.wgProxyICE = wgProxy

|

||||

|

||||

conn.currentConnPriority = priority

|

||||

|

||||

conn.statusICE.Set(StatusConnected)

|

||||

conn.updateIceState(iceConnInfo)

|

||||

conn.doOnConnected(iceConnInfo.RosenpassPubKey, iceConnInfo.RosenpassAddr)

|

||||

}

|

||||

|

||||

@@ -482,11 +495,18 @@ func (conn *Conn) onWorkerICEStateDisconnected(newState ConnStatus) {
 
 	conn.log.Tracef("ICE connection state changed to %s", newState)
 
+	if conn.wgProxyICE != nil {
+		if err := conn.wgProxyICE.CloseConn(); err != nil {
+			conn.log.Warnf("failed to close deprecated wg proxy conn: %v", err)
+		}
+	}
+
 	// switch back to relay connection
-	if conn.endpointRelay != nil && conn.currentConnPriority != connPriorityRelay {
+	if conn.isReadyToUpgrade() {
 		conn.log.Debugf("ICE disconnected, set Relay to active connection")
-		err := conn.configureWGEndpoint(conn.endpointRelay)
-		if err != nil {
+		conn.wgProxyRelay.Work()
+
+		if err := conn.configureWGEndpoint(conn.wgProxyRelay.EndpointAddr()); err != nil {
 			conn.log.Errorf("failed to switch to relay conn: %v", err)
 		}
 		conn.workerRelay.EnableWgWatcher(conn.ctx)

@@ -496,10 +516,7 @@ func (conn *Conn) onWorkerICEStateDisconnected(newState ConnStatus) {
 	changed := conn.statusICE.Get() != newState && newState != StatusConnecting
 	conn.statusICE.Set(newState)
 
-	select {
-	case conn.iCEDisconnected <- changed:
-	default:
-	}
+	conn.notifyReconnectLoopICEDisconnected(changed)
 
 	peerState := State{
 		PubKey:           conn.config.Key,

@@ -520,61 +537,48 @@ func (conn *Conn) relayConnectionIsReady(rci RelayConnInfo) {

|

||||

|

||||

if conn.ctx.Err() != nil {

|

||||

if err := rci.relayedConn.Close(); err != nil {

|

||||

log.Warnf("failed to close unnecessary relayed connection: %v", err)

|

||||

conn.log.Warnf("failed to close unnecessary relayed connection: %v", err)

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

conn.log.Debugf("Relay connection is ready to use")

|

||||

conn.statusRelay.Set(StatusConnected)

|

||||

conn.log.Debugf("Relay connection has been established, setup the WireGuard")

|

||||

|

||||

wgProxy := conn.wgProxyFactory.GetProxy()

|

||||

endpoint, err := wgProxy.AddTurnConn(conn.ctx, rci.relayedConn)

|

||||

wgProxy, err := conn.newProxy(rci.relayedConn)

|

||||

if err != nil {

|

||||

conn.log.Errorf("failed to add relayed net.Conn to local proxy: %v", err)

|

||||

return

|

||||

}

|

||||

conn.log.Infof("created new wgProxy for relay connection: %s", endpoint)

|

||||

|

||||

endpointUdpAddr, _ := net.ResolveUDPAddr(endpoint.Network(), endpoint.String())

|

||||

conn.endpointRelay = endpointUdpAddr

|

||||

conn.log.Debugf("conn resolved IP for %s: %s", endpoint, endpointUdpAddr.IP)

|

||||

conn.log.Infof("created new wgProxy for relay connection: %s", wgProxy.EndpointAddr().String())

|

||||

|

||||

defer conn.updateRelayStatus(rci.relayedConn.RemoteAddr().String(), rci.rosenpassPubKey)

|

||||

|

||||

if conn.currentConnPriority > connPriorityRelay {

|

||||

if conn.statusICE.Get() == StatusConnected {

|

||||

log.Debugf("do not switch to relay because current priority is: %v", conn.currentConnPriority)

|

||||

return

|

||||

}

|

||||

if conn.iceP2PIsActive() {

|

||||

conn.log.Debugf("do not switch to relay because current priority is: %v", conn.currentConnPriority)

|

||||

conn.wgProxyRelay = wgProxy

|

||||

conn.statusRelay.Set(StatusConnected)

|

||||

conn.updateRelayStatus(rci.relayedConn.RemoteAddr().String(), rci.rosenpassPubKey)

|

||||

return

|

||||

}

|

||||

|

||||

conn.connIDRelay = nbnet.GenerateConnID()

|

||||

for _, hook := range conn.beforeAddPeerHooks {

|

||||

if err := hook(conn.connIDRelay, endpointUdpAddr.IP); err != nil {

|

||||

conn.log.Errorf("Before add peer hook failed: %v", err)

|

||||

}

|

||||

if err := conn.runBeforeAddPeerHooks(wgProxy.EndpointAddr().IP); err != nil {

|

||||

conn.log.Errorf("Before add peer hook failed: %v", err)

|

||||

}

|

||||

|

||||

err = conn.configureWGEndpoint(endpointUdpAddr)

|

||||

if err != nil {

|

||||

wgProxy.Work()

|

||||

if err := conn.configureWGEndpoint(wgProxy.EndpointAddr()); err != nil {

|

||||

if err := wgProxy.CloseConn(); err != nil {

|

||||

conn.log.Warnf("Failed to close relay connection: %v", err)

|

||||

}

|

||||

conn.log.Errorf("Failed to update wg peer configuration: %v", err)

|

||||

conn.log.Errorf("Failed to update WireGuard peer configuration: %v", err)

|

||||

return

|

||||

}

|

||||

conn.workerRelay.EnableWgWatcher(conn.ctx)

|

||||

|

||||

wgConfigWorkaround()

|

||||

|

||||

if conn.wgProxyRelay != nil {

|

||||

if err := conn.wgProxyRelay.CloseConn(); err != nil {

|

||||

conn.log.Warnf("failed to close deprecated wg proxy conn: %v", err)

|

||||

}

|

||||

}

|

||||

conn.wgProxyRelay = wgProxy

|

||||

conn.currentConnPriority = connPriorityRelay

|

||||

|

||||

conn.statusRelay.Set(StatusConnected)

|

||||

conn.wgProxyRelay = wgProxy

|

||||

conn.updateRelayStatus(rci.relayedConn.RemoteAddr().String(), rci.rosenpassPubKey)

|

||||

conn.log.Infof("start to communicate with peer via relay")

|

||||

conn.doOnConnected(rci.rosenpassPubKey, rci.rosenpassAddr)

|

||||

}

|

||||

@@ -587,29 +591,23 @@ func (conn *Conn) onWorkerRelayStateDisconnected() {
 		return
 	}
 
-	log.Debugf("relay connection is disconnected")
+	conn.log.Debugf("relay connection is disconnected")
 
 	if conn.currentConnPriority == connPriorityRelay {
-		log.Debugf("clean up WireGuard config")
-		err := conn.config.WgConfig.WgInterface.RemovePeer(conn.config.WgConfig.RemoteKey)
-		if err != nil {
+		conn.log.Debugf("clean up WireGuard config")
+		if err := conn.removeWgPeer(); err != nil {
 			conn.log.Errorf("failed to remove wg endpoint: %v", err)
 		}
 	}
 
 	if conn.wgProxyRelay != nil {
-		conn.endpointRelay = nil
 		_ = conn.wgProxyRelay.CloseConn()
 		conn.wgProxyRelay = nil
 	}
 
 	changed := conn.statusRelay.Get() != StatusDisconnected
 	conn.statusRelay.Set(StatusDisconnected)
-
-	select {
-	case conn.relayDisconnected <- changed:
-	default:
-	}
+	conn.notifyReconnectLoopRelayDisconnected(changed)
 
 	peerState := State{
 		PubKey:           conn.config.Key,

@@ -617,9 +615,7 @@ func (conn *Conn) onWorkerRelayStateDisconnected() {
 		Relayed:          conn.isRelayed(),
 		ConnStatusUpdate: time.Now(),
 	}
-
-	err := conn.statusRecorder.UpdatePeerRelayedStateToDisconnected(peerState)
-	if err != nil {
+	if err := conn.statusRecorder.UpdatePeerRelayedStateToDisconnected(peerState); err != nil {
 		conn.log.Warnf("unable to save peer's state to Relay disconnected, got error: %v", err)
 	}
 }

@@ -755,6 +751,16 @@ func (conn *Conn) isConnected() bool {
 	return true
 }
 
+func (conn *Conn) runBeforeAddPeerHooks(ip net.IP) error {
+	conn.connIDICE = nbnet.GenerateConnID()
+	for _, hook := range conn.beforeAddPeerHooks {
+		if err := hook(conn.connIDICE, ip); err != nil {
+			return err
+		}
+	}
+	return nil
+}
+
 func (conn *Conn) freeUpConnID() {
 	if conn.connIDRelay != "" {
 		for _, hook := range conn.afterRemovePeerHooks {

@@ -775,21 +781,52 @@ func (conn *Conn) freeUpConnID() {

|

||||

}

|

||||

}

|

||||

|

||||

func (conn *Conn) getEndpointForICEConnInfo(iceConnInfo ICEConnInfo) (net.Addr, wgproxy.Proxy, error) {

|

||||

if !iceConnInfo.RelayedOnLocal {

|

||||

return iceConnInfo.RemoteConn.RemoteAddr(), nil, nil

|

||||

}

|

||||

conn.log.Debugf("setup ice turn connection")

|

||||

func (conn *Conn) newProxy(remoteConn net.Conn) (wgproxy.Proxy, error) {

|

||||

conn.log.Debugf("setup proxied WireGuard connection")

|

||||

wgProxy := conn.wgProxyFactory.GetProxy()

|

||||

ep, err := wgProxy.AddTurnConn(conn.ctx, iceConnInfo.RemoteConn)

|

||||

if err != nil {

|

||||

if err := wgProxy.AddTurnConn(conn.ctx, remoteConn); err != nil {

|

||||

conn.log.Errorf("failed to add turn net.Conn to local proxy: %v", err)

|

||||

if errClose := wgProxy.CloseConn(); errClose != nil {

|

||||

conn.log.Warnf("failed to close turn proxy connection: %v", errClose)

|

||||

}

|

||||

return nil, nil, err

|

||||

return nil, err

|

||||

}

|

||||

return wgProxy, nil

|

||||

}

|

||||

|

||||

func (conn *Conn) isReadyToUpgrade() bool {

|

||||

return conn.wgProxyRelay != nil && conn.currentConnPriority != connPriorityRelay

|

||||

}

|

||||

|

||||

func (conn *Conn) iceP2PIsActive() bool {

|

||||

return conn.currentConnPriority == connPriorityICEP2P && conn.statusICE.Get() == StatusConnected

|

||||

}

|

||||

|

||||

func (conn *Conn) removeWgPeer() error {

|

||||

return conn.config.WgConfig.WgInterface.RemovePeer(conn.config.WgConfig.RemoteKey)

|

||||

}

|

||||

|

||||

func (conn *Conn) notifyReconnectLoopRelayDisconnected(changed bool) {

|

||||

select {

|

||||

case conn.relayDisconnected <- changed:

|

||||

default:

|

||||

}

|

||||

}

|

||||

|

||||

func (conn *Conn) notifyReconnectLoopICEDisconnected(changed bool) {

|

||||

select {

|

||||

case conn.iCEDisconnected <- changed:

|

||||

default:

|

||||

}

|

||||

}

|

||||

|

||||

func (conn *Conn) handleConfigurationFailure(err error, wgProxy wgproxy.Proxy) {

|

||||

conn.log.Warnf("Failed to update wg peer configuration: %v", err)

|

||||

if wgProxy != nil {

|

||||

if ierr := wgProxy.CloseConn(); ierr != nil {

|

||||

conn.log.Warnf("Failed to close wg proxy: %v", ierr)

|

||||

}

|

||||

}

|

||||

if conn.wgProxyRelay != nil {

|

||||

conn.wgProxyRelay.Work()

|

||||

}

|

||||

return ep, wgProxy, nil

|

||||

}

|

||||

|

||||

func isRosenpassEnabled(remoteRosenpassPubKey []byte) bool {

|

||||

|

||||
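The `notifyReconnectLoopRelayDisconnected` and `notifyReconnectLoopICEDisconnected` helpers in this hunk wrap a `select` with a `default` branch, i.e. a non-blocking send. Combined with the capacity-1 channels created in `NewConn`, a notification is dropped when one is already pending instead of stalling the caller. A minimal sketch of the idiom follows; the `notify` function and its boolean result are illustrative additions, since the helpers in the diff return nothing.

```go
package main

import "fmt"

// notify performs a non-blocking send. It reports whether the value was
// queued; when the buffer is full the value is dropped instead of
// blocking the caller. The return value exists only for illustration.
func notify(ch chan<- bool, v bool) bool {
	select {
	case ch <- v:
		return true
	default:
		return false
	}
}

func main() {
	ch := make(chan bool, 1) // capacity 1, as in the NewConn hunk

	fmt.Println("first send queued:", notify(ch, true))
	fmt.Println("second send queued:", notify(ch, false))
	fmt.Println("value received:", <-ch)
}
```

One consequence of this design is that the receiver sees the oldest pending value, not the latest one, so a dropped `true` can hide behind a queued `false`.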

212

client/internal/peer/conn_monitor.go

Normal file


@@ -0,0 +1,212 @@
+package peer
+
+import (
+	"context"
+	"fmt"
+	"sync"
+	"time"
+
+	"github.com/pion/ice/v3"
+	log "github.com/sirupsen/logrus"
+
+	"github.com/netbirdio/netbird/client/internal/stdnet"
+)
+
+const (
+	signalerMonitorPeriod     = 5 * time.Second
+	candidatesMonitorPeriod   = 5 * time.Minute
+	candidateGatheringTimeout = 5 * time.Second
+)
+
+type ConnMonitor struct {
+	signaler          *Signaler
+	iFaceDiscover     stdnet.ExternalIFaceDiscover
+	config            ConnConfig
+	relayDisconnected chan bool
+	iCEDisconnected   chan bool
+	reconnectCh       chan struct{}
+	currentCandidates []ice.Candidate
+	candidatesMu      sync.Mutex
+}
+

+func NewConnMonitor(signaler *Signaler, iFaceDiscover stdnet.ExternalIFaceDiscover, config ConnConfig, relayDisconnected, iCEDisconnected chan bool) (*ConnMonitor, <-chan struct{}) {
+	reconnectCh := make(chan struct{}, 1)
+	cm := &ConnMonitor{
+		signaler:          signaler,
+		iFaceDiscover:     iFaceDiscover,
+		config:            config,
+		relayDisconnected: relayDisconnected,
+		iCEDisconnected:   iCEDisconnected,
+		reconnectCh:       reconnectCh,
+	}
+	return cm, reconnectCh
+}
+

+func (cm *ConnMonitor) Start(ctx context.Context) {
+	signalerReady := make(chan struct{}, 1)
+	go cm.monitorSignalerReady(ctx, signalerReady)
+
+	localCandidatesChanged := make(chan struct{}, 1)
+	go cm.monitorLocalCandidatesChanged(ctx, localCandidatesChanged)
+
+	for {
+		select {
+		case changed := <-cm.relayDisconnected:
+			if !changed {
+				continue
+			}
+			log.Debugf("Relay state changed, triggering reconnect")
+			cm.triggerReconnect()
+
+		case changed := <-cm.iCEDisconnected:
+			if !changed {
+				continue
+			}
+			log.Debugf("ICE state changed, triggering reconnect")
+			cm.triggerReconnect()
+
+		case <-signalerReady:
+			log.Debugf("Signaler became ready, triggering reconnect")
+			cm.triggerReconnect()
+
+		case <-localCandidatesChanged:
+			log.Debugf("Local candidates changed, triggering reconnect")
+			cm.triggerReconnect()
+
+		case <-ctx.Done():
+			return
+		}
+	}
+}
+

+func (cm *ConnMonitor) monitorSignalerReady(ctx context.Context, signalerReady chan<- struct{}) {
+	if cm.signaler == nil {
+		return
+	}
+
+	ticker := time.NewTicker(signalerMonitorPeriod)
+	defer ticker.Stop()
+
+	lastReady := true
+	for {
+		select {
+		case <-ticker.C:
+			currentReady := cm.signaler.Ready()
+			if !lastReady && currentReady {
+				select {
+				case signalerReady <- struct{}{}:
+				default:
+				}
+			}
+			lastReady = currentReady
+		case <-ctx.Done():
+			return
+		}
+	}
+}
+
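`monitorSignalerReady` above is edge-triggered: it polls `Ready()` every five seconds and signals only when the value goes from not ready to ready. Because `lastReady` starts as `true`, an initially ready signaler produces no event. The following sketch replays that comparison over a fixed list of samples; `risingEdges` is a hypothetical helper for illustration, not code from the diff.

```go
package main

import "fmt"

// risingEdges returns the indices at which a sampled boolean switches from
// false to true. initial plays the role of `lastReady := true` in the diff.
func risingEdges(initial bool, samples []bool) []int {
	var edges []int
	last := initial
	for i, cur := range samples {
		if !last && cur {
			edges = append(edges, i)
		}
		last = cur
	}
	return edges
}

func main() {
	// ready, lost, lost, back, still ready, lost, back
	samples := []bool{true, false, false, true, true, false, true}
	fmt.Println("reconnect triggers at samples:", risingEdges(true, samples))
}
```

Polling means an outage shorter than one period can go unnoticed, which is the usual trade-off of this approach against an event callback.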

+func (cm *ConnMonitor) monitorLocalCandidatesChanged(ctx context.Context, localCandidatesChanged chan<- struct{}) {
+	ufrag, pwd, err := generateICECredentials()
+	if err != nil {
+		log.Warnf("Failed to generate ICE credentials: %v", err)
+		return
+	}
+
+	ticker := time.NewTicker(candidatesMonitorPeriod)
+	defer ticker.Stop()
+
+	for {
+		select {
+		case <-ticker.C:
+			if err := cm.handleCandidateTick(ctx, localCandidatesChanged, ufrag, pwd); err != nil {
+				log.Warnf("Failed to handle candidate tick: %v", err)
+			}
+		case <-ctx.Done():
+			return
+		}
+	}
+}
+

+func (cm *ConnMonitor) handleCandidateTick(ctx context.Context, localCandidatesChanged chan<- struct{}, ufrag string, pwd string) error {
+	log.Debugf("Gathering ICE candidates")
+
+	transportNet, err := newStdNet(cm.iFaceDiscover, cm.config.ICEConfig.InterfaceBlackList)
+	if err != nil {
+		log.Errorf("failed to create pion's stdnet: %s", err)
+	}
+
+	agent, err := newAgent(cm.config, transportNet, candidateTypesP2P(), ufrag, pwd)
+	if err != nil {
+		return fmt.Errorf("create ICE agent: %w", err)
+	}
+	defer func() {
+		if err := agent.Close(); err != nil {
+			log.Warnf("Failed to close ICE agent: %v", err)
+		}
+	}()
+
+	gatherDone := make(chan struct{})
+	err = agent.OnCandidate(func(c ice.Candidate) {
+		log.Tracef("Got candidate: %v", c)
+		if c == nil {
+			close(gatherDone)
+		}
+	})
+	if err != nil {
+		return fmt.Errorf("set ICE candidate handler: %w", err)
+	}
+
+	if err := agent.GatherCandidates(); err != nil {
+		return fmt.Errorf("gather ICE candidates: %w", err)
+	}
+
+	ctx, cancel := context.WithTimeout(ctx, candidateGatheringTimeout)
+	defer cancel()
+
+	select {
+	case <-ctx.Done():
+		return fmt.Errorf("wait for gathering: %w", ctx.Err())
+	case <-gatherDone:
+	}
+
+	candidates, err := agent.GetLocalCandidates()
+	if err != nil {
+		return fmt.Errorf("get local candidates: %w", err)
+	}
+	log.Tracef("Got candidates: %v", candidates)
+
+	if changed := cm.updateCandidates(candidates); changed {
+		select {
+		case localCandidatesChanged <- struct{}{}:
+		default:
+		}
+	}
+
+	return nil
+}
+

func (cm *ConnMonitor) updateCandidates(newCandidates []ice.Candidate) bool {

|

||||

cm.candidatesMu.Lock()

|

||||

defer cm.candidatesMu.Unlock()

|

||||

|

||||

if len(cm.currentCandidates) != len(newCandidates) {

|

||||

cm.currentCandidates = newCandidates

|

||||

return true

|

||||

}

|

||||

|

||||

for i, candidate := range cm.currentCandidates {

|

||||

if candidate.Address() != newCandidates[i].Address() {

|

||||

cm.currentCandidates = newCandidates

|

||||

return true

|

||||

}

|

||||

}

|

||||

|

||||

return false

|

||||

}

|
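
`updateCandidates` above compares the stored and newly gathered slices position by position, so two lists holding the same addresses in a different order are also reported as changed. The sketch below shows that behavior with plain strings standing in for `ice.Candidate` addresses; `addressesChanged` is a hypothetical helper, not part of the diff.

```go
package main

import "fmt"

// addressesChanged reports whether two address lists differ in length or
// at any index. Like the loop in updateCandidates, it is order-sensitive.
func addressesChanged(current, next []string) bool {
	if len(current) != len(next) {
		return true
	}
	for i, addr := range current {
		if addr != next[i] {
			return true
		}
	}
	return false
}

func main() {
	a := []string{"10.0.0.1", "192.168.1.5"}
	b := []string{"192.168.1.5", "10.0.0.1"} // same set, different order

	fmt.Println(addressesChanged(a, a)) // identical lists
	fmt.Println(addressesChanged(a, b)) // reordered lists
	fmt.Println(addressesChanged(a, a[:1]))
}
```

If reordering should not trigger a reconnect, one option would be to sort both lists before comparing; whether that is desirable here depends on how the gatherer orders its results, which this diff does not show.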

||||

|

||||

func (cm *ConnMonitor) triggerReconnect() {

|

||||

select {

|

||||

case cm.reconnectCh <- struct{}{}:

|

||||

default:

|

||||

}

|

||||

}

|
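
`triggerReconnect` above, like the sends on `signalerReady` and `localCandidatesChanged`, uses a `select` with a `default` branch so a monitor goroutine never blocks when a notification is already pending. A minimal sketch of that pattern follows. The helper name `notify` is hypothetical, and the channel capacity of 1 is an assumption, since the buffer size of `reconnectCh` is not visible in this diff.

```go
package main

import "fmt"

// notify performs a non-blocking send. If the channel cannot accept the
// signal right now, the signal is dropped instead of blocking the caller.
func notify(ch chan<- struct{}) bool {
	select {
	case ch <- struct{}{}:
		return true
	default:
		return false
	}
}

func main() {
	ch := make(chan struct{}, 1) // capacity 1 is an assumption for this sketch

	fmt.Println(notify(ch)) // first signal is queued
	fmt.Println(notify(ch)) // buffer is full, so this signal is dropped

	<-ch                    // the consumer drains the pending signal
	fmt.Println(notify(ch)) // the channel has room again
}
```

Dropping the duplicate is safe for this kind of event because the receiver only needs to know that at least one change happened since it last looked.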

||||

@@ -6,6 +6,6 @@ import (

|

||||

"github.com/netbirdio/netbird/client/internal/stdnet"

|

||||

)

|

||||

|

||||

func (w *WorkerICE) newStdNet() (*stdnet.Net, error) {

|

||||

return stdnet.NewNet(w.config.ICEConfig.InterfaceBlackList)

|

||||

func newStdNet(_ stdnet.ExternalIFaceDiscover, ifaceBlacklist []string) (*stdnet.Net, error) {

|

||||

return stdnet.NewNet(ifaceBlacklist)

|

||||

}

|

||||

|

||||

@@ -2,6 +2,6 @@ package peer

|

||||

|

||||

import "github.com/netbirdio/netbird/client/internal/stdnet"

|

||||

|

||||

func (w *WorkerICE) newStdNet() (*stdnet.Net, error) {

|

||||

return stdnet.NewNetWithDiscover(w.iFaceDiscover, w.config.ICEConfig.InterfaceBlackList)

|

||||

func newStdNet(iFaceDiscover stdnet.ExternalIFaceDiscover, ifaceBlacklist []string) (*stdnet.Net, error) {

|

||||

return stdnet.NewNetWithDiscover(iFaceDiscover, ifaceBlacklist)

|

||||

}

|

||||

|

||||

@@ -233,41 +233,16 @@ func (w *WorkerICE) Close() {

|

||||

}

|

||||

|

||||

func (w *WorkerICE) reCreateAgent(agentCancel context.CancelFunc, relaySupport []ice.CandidateType) (*ice.Agent, error) {

|

||||

transportNet, err := w.newStdNet()

|

||||

transportNet, err := newStdNet(w.iFaceDiscover, w.config.ICEConfig.InterfaceBlackList)

|

||||

if err != nil {

|

||||

w.log.Errorf("failed to create pion's stdnet: %s", err)

|

||||

}

|

||||

|

||||

iceKeepAlive := iceKeepAlive()

|

||||

iceDisconnectedTimeout := iceDisconnectedTimeout()

|

||||

iceRelayAcceptanceMinWait := iceRelayAcceptanceMinWait()

|

||||

|

||||

agentConfig := &ice.AgentConfig{

|

||||

MulticastDNSMode: ice.MulticastDNSModeDisabled,

|

||||

NetworkTypes: []ice.NetworkType{ice.NetworkTypeUDP4, ice.NetworkTypeUDP6},

|

||||

Urls: w.config.ICEConfig.StunTurn.Load().([]*stun.URI),

|

||||

CandidateTypes: relaySupport,

|

||||

InterfaceFilter: stdnet.InterfaceFilter(w.config.ICEConfig.InterfaceBlackList),

|

||||

UDPMux: w.config.ICEConfig.UDPMux,

|

||||

UDPMuxSrflx: w.config.ICEConfig.UDPMuxSrflx,

|

||||

NAT1To1IPs: w.config.ICEConfig.NATExternalIPs,

|

||||

Net: transportNet,

|

||||

FailedTimeout: &failedTimeout,

|

||||

DisconnectedTimeout: &iceDisconnectedTimeout,

|

||||

KeepaliveInterval: &iceKeepAlive,

|

||||

RelayAcceptanceMinWait: &iceRelayAcceptanceMinWait,

|

||||

LocalUfrag: w.localUfrag,

|

||||

LocalPwd: w.localPwd,

|

||||

}

|

||||

|

||||

if w.config.ICEConfig.DisableIPv6Discovery {

|

||||

agentConfig.NetworkTypes = []ice.NetworkType{ice.NetworkTypeUDP4}

|

||||

}

|

||||

|

||||

w.sentExtraSrflx = false

|

||||

agent, err := ice.NewAgent(agentConfig)

|

||||

|

||||

agent, err := newAgent(w.config, transportNet, relaySupport, w.localUfrag, w.localPwd)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

return nil, fmt.Errorf("create agent: %w", err)

|

||||

}

|

||||

|

||||

err = agent.OnCandidate(w.onICECandidate)

|

||||

@@ -390,6 +365,36 @@ func (w *WorkerICE) turnAgentDial(ctx context.Context, remoteOfferAnswer *OfferA

|

||||

}

|

||||

}

|

||||

|

||||

func newAgent(config ConnConfig, transportNet *stdnet.Net, candidateTypes []ice.CandidateType, ufrag string, pwd string) (*ice.Agent, error) {

|

||||

iceKeepAlive := iceKeepAlive()

|

||||

iceDisconnectedTimeout := iceDisconnectedTimeout()

|

||||

iceRelayAcceptanceMinWait := iceRelayAcceptanceMinWait()

|

||||

|

||||

agentConfig := &ice.AgentConfig{

|

||||

MulticastDNSMode: ice.MulticastDNSModeDisabled,

|

||||

NetworkTypes: []ice.NetworkType{ice.NetworkTypeUDP4, ice.NetworkTypeUDP6},

|

||||

Urls: config.ICEConfig.StunTurn.Load().([]*stun.URI),

|

||||

CandidateTypes: candidateTypes,

|

||||

InterfaceFilter: stdnet.InterfaceFilter(config.ICEConfig.InterfaceBlackList),

|

||||

UDPMux: config.ICEConfig.UDPMux,

|

||||

UDPMuxSrflx: config.ICEConfig.UDPMuxSrflx,

|

||||

NAT1To1IPs: config.ICEConfig.NATExternalIPs,

|

||||

Net: transportNet,

|

||||

FailedTimeout: &failedTimeout,

|

||||

DisconnectedTimeout: &iceDisconnectedTimeout,

|

||||

KeepaliveInterval: &iceKeepAlive,

|

||||

RelayAcceptanceMinWait: &iceRelayAcceptanceMinWait,

|

||||

LocalUfrag: ufrag,

|

||||

LocalPwd: pwd,

|

||||

}

|

||||

|

||||

if config.ICEConfig.DisableIPv6Discovery {

|

||||

agentConfig.NetworkTypes = []ice.NetworkType{ice.NetworkTypeUDP4}

|

||||

}

|

||||

|

||||

return ice.NewAgent(agentConfig)

|

||||

}

|

||||

|

||||

func extraSrflxCandidate(candidate ice.Candidate) (*ice.CandidateServerReflexive, error) {

|

||||

relatedAdd := candidate.RelatedAddress()

|

||||

return ice.NewCandidateServerReflexive(&ice.CandidateServerReflexiveConfig{

|

||||

|

||||

@@ -43,7 +43,7 @@ type clientNetwork struct {

|

||||

ctx context.Context

|

||||

cancel context.CancelFunc

|

||||

statusRecorder *peer.Status

|

||||

wgInterface iface.IWGIface

|

||||

wgInterface *iface.WGIface

|

||||

routes map[route.ID]*route.Route

|

||||

routeUpdate chan routesUpdate

|

||||

peerStateUpdate chan struct{}

|

||||

@@ -53,7 +53,7 @@ type clientNetwork struct {

|

||||

updateSerial uint64

|

||||

}

|

||||

|

||||

func newClientNetworkWatcher(ctx context.Context, dnsRouteInterval time.Duration, wgInterface iface.IWGIface, statusRecorder *peer.Status, rt *route.Route, routeRefCounter *refcounter.RouteRefCounter, allowedIPsRefCounter *refcounter.AllowedIPsRefCounter) *clientNetwork {

|

||||

func newClientNetworkWatcher(ctx context.Context, dnsRouteInterval time.Duration, wgInterface *iface.WGIface, statusRecorder *peer.Status, rt *route.Route, routeRefCounter *refcounter.RouteRefCounter, allowedIPsRefCounter *refcounter.AllowedIPsRefCounter) *clientNetwork {

|

||||

ctx, cancel := context.WithCancel(ctx)

|

||||

|

||||

client := &clientNetwork{

|

||||

@@ -378,7 +378,7 @@ func (c *clientNetwork) peersStateAndUpdateWatcher() {

|

||||

}

|

||||

}

|

||||

|

||||

func handlerFromRoute(rt *route.Route, routeRefCounter *refcounter.RouteRefCounter, allowedIPsRefCounter *refcounter.AllowedIPsRefCounter, dnsRouterInteval time.Duration, statusRecorder *peer.Status, wgInterface iface.IWGIface) RouteHandler {

|

||||

func handlerFromRoute(rt *route.Route, routeRefCounter *refcounter.RouteRefCounter, allowedIPsRefCounter *refcounter.AllowedIPsRefCounter, dnsRouterInteval time.Duration, statusRecorder *peer.Status, wgInterface *iface.WGIface) RouteHandler {

|

||||

if rt.IsDynamic() {

|

||||

dns := nbdns.NewServiceViaMemory(wgInterface)

|

||||

return dynamic.NewRoute(rt, routeRefCounter, allowedIPsRefCounter, dnsRouterInteval, statusRecorder, wgInterface, fmt.Sprintf("%s:%d", dns.RuntimeIP(), dns.RuntimePort()))

|

||||

|

||||

@@ -48,7 +48,7 @@ type Route struct {

|

||||

currentPeerKey string

|

||||

cancel context.CancelFunc

|

||||

statusRecorder *peer.Status

|

||||

wgInterface iface.IWGIface

|

||||

wgInterface *iface.WGIface

|

||||

resolverAddr string

|

||||

}

|

||||

|

||||

@@ -58,7 +58,7 @@ func NewRoute(

|

||||

allowedIPsRefCounter *refcounter.AllowedIPsRefCounter,

|

||||

interval time.Duration,

|

||||

statusRecorder *peer.Status,

|

||||

wgInterface iface.IWGIface,

|

||||

wgInterface *iface.WGIface,

|

||||

resolverAddr string,

|

||||

) *Route {

|

||||

return &Route{

|

||||

|

||||

@@ -52,7 +52,7 @@ type DefaultManager struct {

|

||||

sysOps *systemops.SysOps

|

||||

statusRecorder *peer.Status

|

||||

relayMgr *relayClient.Manager

|

||||

wgInterface iface.IWGIface

|

||||

wgInterface *iface.WGIface

|

||||

pubKey string

|

||||

notifier *notifier.Notifier

|

||||

routeRefCounter *refcounter.RouteRefCounter

|

||||

@@ -64,7 +64,7 @@ func NewManager(

|

||||

ctx context.Context,

|

||||

pubKey string,

|

||||

dnsRouteInterval time.Duration,

|

||||

wgInterface iface.IWGIface,

|

||||

wgInterface *iface.WGIface,

|

||||

statusRecorder *peer.Status,

|

||||

relayMgr *relayClient.Manager,

|

||||

initialRoutes []*route.Route,

|

||||

|

||||

@@ -11,6 +11,6 @@ import (

|

||||

"github.com/netbirdio/netbird/client/internal/peer"

|

||||

)

|

||||

|

||||

func newServerRouter(context.Context, iface.IWGIface, firewall.Manager, *peer.Status) (serverRouter, error) {

|

||||

func newServerRouter(context.Context, *iface.WGIface, firewall.Manager, *peer.Status) (serverRouter, error) {

|

||||

return nil, fmt.Errorf("server route not supported on this os")

|

||||

}

|

||||

|

||||

@@ -22,11 +22,11 @@ type defaultServerRouter struct {

|

||||

ctx context.Context

|

||||

routes map[route.ID]*route.Route

|

||||

firewall firewall.Manager

|

||||

wgInterface iface.IWGIface

|

||||

wgInterface *iface.WGIface

|

||||

statusRecorder *peer.Status

|

||||

}

|

||||

|

||||

func newServerRouter(ctx context.Context, wgInterface iface.IWGIface, firewall firewall.Manager, statusRecorder *peer.Status) (serverRouter, error) {

|

||||

func newServerRouter(ctx context.Context, wgInterface *iface.WGIface, firewall firewall.Manager, statusRecorder *peer.Status) (serverRouter, error) {

|

||||

return &defaultServerRouter{

|

||||

ctx: ctx,

|

||||

routes: make(map[route.ID]*route.Route),

|

||||

|

||||

@@ -23,7 +23,7 @@ const (

|

||||

)

|

||||

|

||||

// Setup configures sysctl settings for RP filtering and source validation.

|

||||

func Setup(wgIface iface.IWGIface) (map[string]int, error) {

|

||||

func Setup(wgIface *iface.WGIface) (map[string]int, error) {

|

||||

keys := map[string]int{}

|

||||

var result *multierror.Error

|

||||

|

||||

|

||||

@@ -19,7 +19,7 @@ type ExclusionCounter = refcounter.Counter[netip.Prefix, struct{}, Nexthop]

|

||||

|

||||

type SysOps struct {

|

||||

refCounter *ExclusionCounter

|

||||

wgInterface iface.IWGIface

|

||||

wgInterface *iface.WGIface

|

||||

// prefixes tracks all currently added prefixes in memory

|

||||

// (this is used in iOS as all route updates require a full table update)

|

||||

//nolint

|

||||

@@ -30,7 +30,7 @@ type SysOps struct {

|

||||

notifier *notifier.Notifier

|

||||

}

|

||||

|

||||

func NewSysOps(wgInterface iface.IWGIface, notifier *notifier.Notifier) *SysOps {

|

||||

func NewSysOps(wgInterface *iface.WGIface, notifier *notifier.Notifier) *SysOps {

|

||||

return &SysOps{

|

||||

wgInterface: wgInterface,

|

||||

notifier: notifier,

|

||||

|

||||

@@ -122,7 +122,7 @@ func (r *SysOps) addRouteForCurrentDefaultGateway(prefix netip.Prefix) error {

|

||||

|

||||

// addRouteToNonVPNIntf adds a new route to the routing table for the given prefix and returns the next hop and interface.

|

||||

// If the next hop or interface is pointing to the VPN interface, it will return the initial values.

|

||||

func (r *SysOps) addRouteToNonVPNIntf(prefix netip.Prefix, vpnIntf iface.IWGIface, initialNextHop Nexthop) (Nexthop, error) {

|

||||

func (r *SysOps) addRouteToNonVPNIntf(prefix netip.Prefix, vpnIntf *iface.WGIface, initialNextHop Nexthop) (Nexthop, error) {

|

||||

addr := prefix.Addr()

|

||||

switch {

|

||||

case addr.IsLoopback(),

|

||||

|

||||

@@ -5,7 +5,6 @@ package ebpf

|

||||

import (

|

||||

"context"

|

||||

"fmt"

|

||||

"io"

|

||||

"net"

|

||||

"os"

|

||||

"sync"

|

||||

@@ -94,13 +93,12 @@ func (p *WGEBPFProxy) Listen() error {

|

||||

}

|

||||

|

||||

// AddTurnConn adds a new TURN connection to the proxy

|

||||

func (p *WGEBPFProxy) AddTurnConn(ctx context.Context, turnConn net.Conn) (net.Addr, error) {

|

||||

func (p *WGEBPFProxy) AddTurnConn(turnConn net.Conn) (*net.UDPAddr, error) {

|

||||

wgEndpointPort, err := p.storeTurnConn(turnConn)

|

||||

if err != nil {

|

||||

return nil, err

|

||||

}

|

||||

|

||||

go p.proxyToLocal(ctx, wgEndpointPort, turnConn)

|

||||

log.Infof("turn conn added to wg proxy store: %s, endpoint port: :%d", turnConn.RemoteAddr(), wgEndpointPort)

|

||||

|

||||

wgEndpoint := &net.UDPAddr{

|

||||

@@ -137,35 +135,6 @@ func (p *WGEBPFProxy) Free() error {

|

||||

return nberrors.FormatErrorOrNil(result)

|

||||

}

|

||||

|

||||

func (p *WGEBPFProxy) proxyToLocal(ctx context.Context, endpointPort uint16, remoteConn net.Conn) {

|

||||

defer p.removeTurnConn(endpointPort)

|

||||

|

||||

var (

|

||||

err error

|

||||

n int

|

||||

)

|

||||

buf := make([]byte, 1500)

|

||||

for ctx.Err() == nil {

|

||||

n, err = remoteConn.Read(buf)

|

||||

if err != nil {

|

||||

if ctx.Err() != nil {

|

||||

return

|

||||

}

|

||||

if err != io.EOF {

|

||||

log.Errorf("failed to read from turn conn (endpoint: :%d): %s", endpointPort, err)

|

||||

}

|

||||

return

|

||||

}

|

||||

|

||||

if err := p.sendPkg(buf[:n], endpointPort); err != nil {

|

||||

if ctx.Err() != nil || p.ctx.Err() != nil {

|

||||

return

|

||||

}

|

||||

log.Errorf("failed to write out turn pkg to local conn: %v", err)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// proxyToRemote reads messages from the local WireGuard interface and forwards them to the remote conn

|

||||

// Only one instance of this goroutine runs.

|

||||

func (p *WGEBPFProxy) proxyToRemote() {

|

||||

@@ -280,7 +249,7 @@ func (p *WGEBPFProxy) prepareSenderRawSocket() (net.PacketConn, error) {

|

||||

return packetConn, nil

|

||||

}

|

||||

|

||||

func (p *WGEBPFProxy) sendPkg(data []byte, port uint16) error {

|

||||

func (p *WGEBPFProxy) sendPkg(data []byte, port int) error {

|

||||

localhost := net.ParseIP("127.0.0.1")

|

||||

|

||||

payload := gopacket.Payload(data)

|

||||

|

||||

@@ -4,8 +4,13 @@ package ebpf

|

||||

|

||||

import (

|

||||

"context"

|

||||

"errors"

|

||||

"fmt"

|

||||

"io"

|

||||

"net"

|

||||

"sync"

|

||||

|

||||

log "github.com/sirupsen/logrus"

|

||||

)

|

||||

|

||||

// ProxyWrapper helps keep the remoteConn instance for the net.Conn.Close function call

|

||||

@@ -13,20 +18,55 @@ type ProxyWrapper struct {

|

||||

WgeBPFProxy *WGEBPFProxy

|

||||

|

||||

remoteConn net.Conn

|

||||

cancel context.CancelFunc // with this cancel function, we stop remoteToLocal thread

|

||||

ctx context.Context

|

||||

cancel context.CancelFunc

|

||||

|

||||

wgEndpointAddr *net.UDPAddr

|

||||

|

||||

pausedMu sync.Mutex

|

||||

paused bool

|

||||

isStarted bool

|

||||

}

|

||||

|

||||

func (e *ProxyWrapper) AddTurnConn(ctx context.Context, remoteConn net.Conn) (net.Addr, error) {

|

||||

ctxConn, cancel := context.WithCancel(ctx)

|

||||

addr, err := e.WgeBPFProxy.AddTurnConn(ctxConn, remoteConn)

|

||||

|

||||

func (p *ProxyWrapper) AddTurnConn(ctx context.Context, remoteConn net.Conn) error {

|

||||

addr, err := p.WgeBPFProxy.AddTurnConn(remoteConn)

|

||||

if err != nil {

|

||||

cancel()

|

||||

return nil, fmt.Errorf("add turn conn: %w", err)

|

||||

return fmt.Errorf("add turn conn: %w", err)

|

||||

}

|

||||

e.remoteConn = remoteConn

|

||||

e.cancel = cancel

|

||||

return addr, err

|

||||

p.remoteConn = remoteConn

|

||||

p.ctx, p.cancel = context.WithCancel(ctx)

|

||||

p.wgEndpointAddr = addr

|

||||

return err

|

||||

}

|

||||

|

||||

func (p *ProxyWrapper) EndpointAddr() *net.UDPAddr {

|

||||

return p.wgEndpointAddr

|

||||

}

|

||||

|

||||

func (p *ProxyWrapper) Work() {

|

||||

if p.remoteConn == nil {

|

||||

return

|

||||

}

|

||||

|

||||

p.pausedMu.Lock()

|

||||

p.paused = false

|

||||

p.pausedMu.Unlock()

|

||||

|

||||

if !p.isStarted {

|

||||

p.isStarted = true

|

||||

go p.proxyToLocal(p.ctx)

|

||||

}

|

||||

}

|

||||

|

||||

func (p *ProxyWrapper) Pause() {

|

||||

if p.remoteConn == nil {

|

||||

return

|

||||

}

|

||||

|

||||

log.Tracef("pause proxy reading from: %s", p.remoteConn.RemoteAddr())

|

||||

p.pausedMu.Lock()

|

||||

p.paused = true

|

||||

p.pausedMu.Unlock()

|

||||

}

|

||||

|

||||

// CloseConn closes the remoteConn and automatically removes the conn instance from the map

|

||||

@@ -42,3 +82,45 @@ func (e *ProxyWrapper) CloseConn() error {

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func (p *ProxyWrapper) proxyToLocal(ctx context.Context) {

|

||||

defer p.WgeBPFProxy.removeTurnConn(uint16(p.wgEndpointAddr.Port))

|

||||

|

||||

buf := make([]byte, 1500)

|

||||

for {

|

||||

n, err := p.readFromRemote(ctx, buf)

|

||||

if err != nil {

|

||||

return

|

||||

}

|

||||

|

||||

p.pausedMu.Lock()

|

||||

if p.paused {

|

||||

p.pausedMu.Unlock()

|

||||

continue

|

||||

}

|

||||

|

||||

err = p.WgeBPFProxy.sendPkg(buf[:n], p.wgEndpointAddr.Port)

|

||||

p.pausedMu.Unlock()

|

||||

|

||||

if err != nil {

|

||||

if ctx.Err() != nil {

|

||||

return

|

||||

}

|

||||

log.Errorf("failed to write out turn pkg to local conn: %v", err)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func (p *ProxyWrapper) readFromRemote(ctx context.Context, buf []byte) (int, error) {

|

||||

n, err := p.remoteConn.Read(buf)

|

||||

if err != nil {

|

||||

if ctx.Err() != nil {

|

||||

return 0, ctx.Err()

|

||||

}

|

||||

if !errors.Is(err, io.EOF) {

|

||||

log.Errorf("failed to read from turn conn (endpoint: :%d): %s", p.wgEndpointAddr.Port, err)

|

||||

}

|

||||

return 0, err

|

||||

}

|

||||

return n, nil

|

||||

}

|

||||

|

||||

@@ -7,6 +7,9 @@ import (

|

||||

|

||||

// Proxy is a transfer layer between the relayed connection and the WireGuard

|

||||

type Proxy interface {

|

||||

AddTurnConn(ctx context.Context, turnConn net.Conn) (net.Addr, error)

|

||||

AddTurnConn(ctx context.Context, turnConn net.Conn) error

|

||||

EndpointAddr() *net.UDPAddr

|

||||

Work()

|

||||

Pause()

|

||||

CloseConn() error

|

||||

}

|
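
The `Work` and `Pause` methods added to the `Proxy` interface gate forwarding with a mutex-protected flag: in `ProxyWrapper.proxyToLocal`, packets are still read from the remote connection while paused, but they are discarded rather than sent to WireGuard. The sketch below isolates that gating logic. The `gate` type and its `forward` method are hypothetical stand-ins for the `paused`/`pausedMu` fields, not the actual proxy implementation.

```go
package main

import (
	"fmt"
	"sync"
)

// gate is a hypothetical stand-in for the paused/pausedMu pair in the proxies.
type gate struct {
	mu     sync.Mutex
	paused bool
}

// Pause makes forward drop packets until Work is called.
func (g *gate) Pause() {
	g.mu.Lock()
	g.paused = true
	g.mu.Unlock()
}

// Work resumes forwarding.
func (g *gate) Work() {
	g.mu.Lock()
	g.paused = false
	g.mu.Unlock()
}

// forward passes pkt to send unless the gate is paused. The lock is held
// across send, so a concurrent Pause returns only after an in-flight send
// has finished.
func (g *gate) forward(pkt []byte, send func([]byte)) bool {
	g.mu.Lock()
	defer g.mu.Unlock()
	if g.paused {
		return false
	}
	send(pkt)
	return true
}

func main() {
	delivered := 0
	send := func([]byte) { delivered++ }

	g := &gate{}
	g.Pause()
	g.forward([]byte("a"), send) // dropped: gate is paused
	g.Work()
	g.forward([]byte("b"), send) // delivered
	g.Pause()
	g.forward([]byte("c"), send) // dropped again

	fmt.Println(delivered)
}
```

Holding the lock during the send trades a little throughput for a simple guarantee: once `Pause` returns, no further packet from this proxy reaches the local endpoint.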

||||

|

||||

@@ -114,7 +114,7 @@ func TestProxyCloseByRemoteConn(t *testing.T) {

|

||||

for _, tt := range tests {

|

||||

t.Run(tt.name, func(t *testing.T) {

|

||||

relayedConn := newMockConn()

|

||||

_, err := tt.proxy.AddTurnConn(ctx, relayedConn)

|

||||

err := tt.proxy.AddTurnConn(ctx, relayedConn)

|

||||

if err != nil {

|

||||

t.Errorf("error: %v", err)

|

||||

}

|

||||

|

||||

@@ -15,13 +15,17 @@ import (

|

||||

// WGUserSpaceProxy proxies

|

||||

type WGUserSpaceProxy struct {

|

||||

localWGListenPort int

|

||||

ctx context.Context

|

||||

cancel context.CancelFunc

|

||||

|

||||

remoteConn net.Conn

|

||||

localConn net.Conn

|

||||

ctx context.Context

|

||||

cancel context.CancelFunc

|

||||

closeMu sync.Mutex

|

||||

closed bool

|

||||

|

||||

pausedMu sync.Mutex

|

||||

paused bool

|

||||

isStarted bool

|

||||

}

|

||||

|

||||

// NewWGUserSpaceProxy instantiates a user space WireGuard proxy. This implementation is not thread-safe

|

||||

@@ -33,24 +37,60 @@ func NewWGUserSpaceProxy(wgPort int) *WGUserSpaceProxy {

|

||||

return p

|

||||

}

|

||||

|

||||

// AddTurnConn starts the proxy with the given remote conn

|

||||

func (p *WGUserSpaceProxy) AddTurnConn(ctx context.Context, remoteConn net.Conn) (net.Addr, error) {

|

||||

p.ctx, p.cancel = context.WithCancel(ctx)

|

||||

|

||||

p.remoteConn = remoteConn

|

||||

|

||||

var err error

|

||||

// AddTurnConn

|

||||

// The provided Context must be non-nil. If the context expires before

|

||||

// the connection is complete, an error is returned. Once successfully

|

||||

// connected, any expiration of the context will not affect the

|

||||

// connection.

|

||||

func (p *WGUserSpaceProxy) AddTurnConn(ctx context.Context, remoteConn net.Conn) error {

|

||||

dialer := net.Dialer{}

|

||||

p.localConn, err = dialer.DialContext(p.ctx, "udp", fmt.Sprintf(":%d", p.localWGListenPort))

|

||||

localConn, err := dialer.DialContext(ctx, "udp", fmt.Sprintf(":%d", p.localWGListenPort))

|

||||

if err != nil {

|

||||

log.Errorf("failed dialing to local Wireguard port %s", err)

|

||||

return nil, err

|

||||

return err

|

||||

}

|

||||

|

||||

go p.proxyToRemote()

|

||||

go p.proxyToLocal()

|

||||

p.ctx, p.cancel = context.WithCancel(ctx)

|

||||

p.localConn = localConn

|

||||

p.remoteConn = remoteConn

|

||||

|

||||

return p.localConn.LocalAddr(), err

|

||||

return err

|

||||

}

|

||||

|

||||

func (p *WGUserSpaceProxy) EndpointAddr() *net.UDPAddr {

|

||||

if p.localConn == nil {

|

||||

return nil

|

||||

}

|

||||

endpointUdpAddr, _ := net.ResolveUDPAddr(p.localConn.LocalAddr().Network(), p.localConn.LocalAddr().String())

|

||||

return endpointUdpAddr

|

||||

}

|

||||

|

||||

// Work starts the proxy or resumes it if it was paused

|

||||

func (p *WGUserSpaceProxy) Work() {

|

||||

if p.remoteConn == nil {

|

||||

return

|

||||

}

|

||||

|

||||

p.pausedMu.Lock()

|

||||

p.paused = false

|

||||

p.pausedMu.Unlock()

|

||||

|

||||

if !p.isStarted {

|

||||

p.isStarted = true

|

||||

go p.proxyToRemote(p.ctx)

|

||||

go p.proxyToLocal(p.ctx)

|

||||

}

|

||||

}

|

||||

|

||||

// Pause pauses the proxy from receiving data from the remote peer

|