mirror of

https://github.com/netbirdio/netbird.git

synced 2026-04-20 17:26:40 +00:00

Merge branch 'main' into ssh-rewrite


@@ -43,7 +43,7 @@ jobs:

 - name: gomobile init
   run: gomobile init
 - name: build android netbird lib
-  run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
+  run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-checklinkname=0 -X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
   env:
     CGO_ENABLED: 0
     ANDROID_NDK_HOME: /usr/local/lib/android/sdk/ndk/23.1.7779620

@@ -50,10 +50,9 @@

|

||||

|

||||

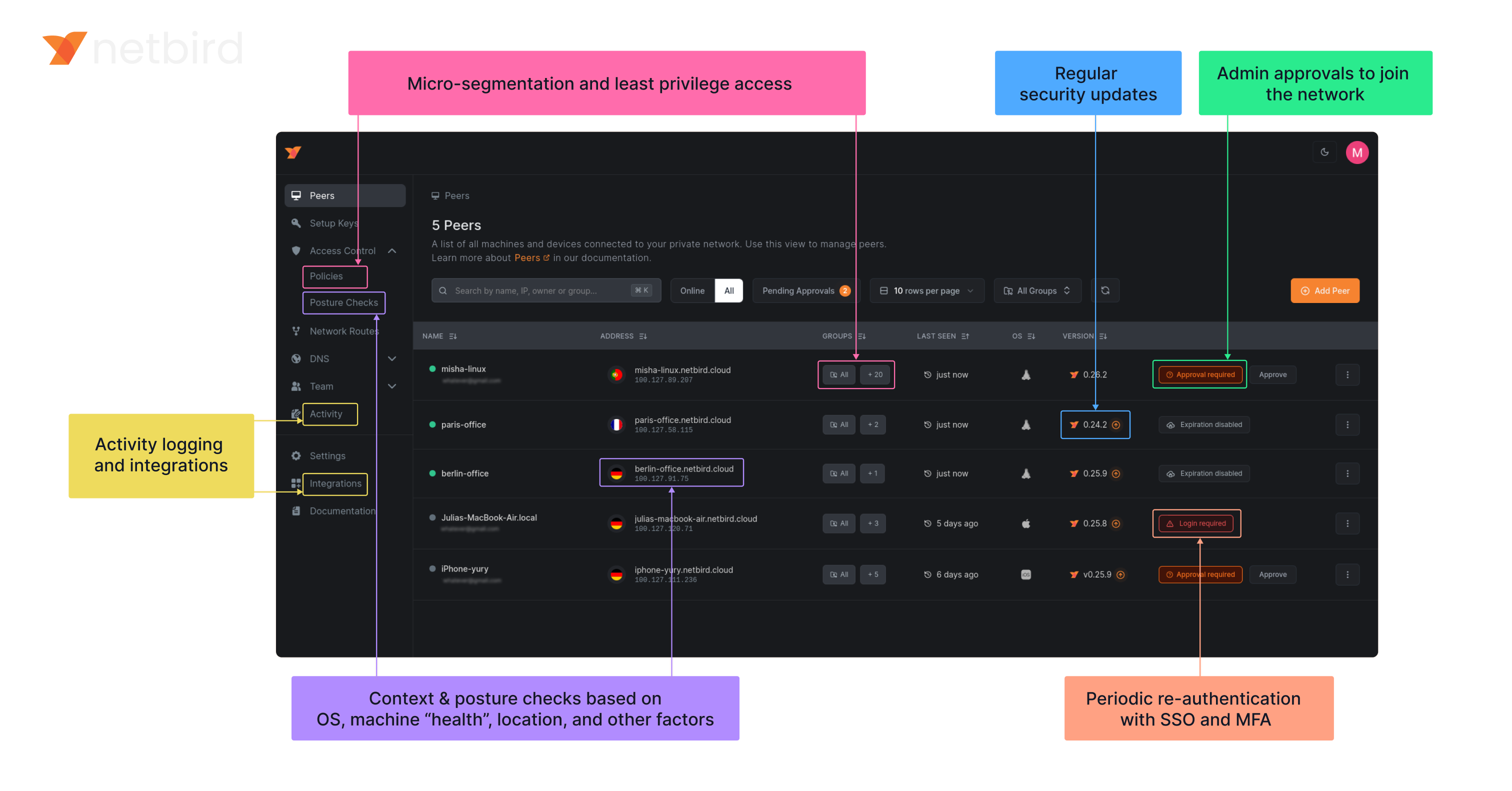

**Secure.** NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

|

||||

|

||||

### Open-Source Network Security in a Single Platform

|

||||

### Open Source Network Security in a Single Platform

|

||||

|

||||

|

||||

|

||||

<img width="1188" alt="centralized-network-management 1" src="https://github.com/user-attachments/assets/c28cc8e4-15d2-4d2f-bb97-a6433db39d56" />

|

||||

|

||||

### NetBird on Lawrence Systems (Video)

|

||||

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||

|

||||

@@ -64,7 +64,9 @@ type Client struct {

|

||||

}

|

||||

|

||||

// NewClient instantiate a new Client

|

||||

func NewClient(cfgFile, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

||||

func NewClient(cfgFile string, androidSDKVersion int, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

||||

execWorkaround(androidSDKVersion)

|

||||

|

||||

net.SetAndroidProtectSocketFn(tunAdapter.ProtectSocket)

|

||||

return &Client{

|

||||

cfgFile: cfgFile,

|

||||

|

||||

26

client/android/exec.go

Normal file


@@ -0,0 +1,26 @@

//go:build android

package android

import (
	"fmt"
	_ "unsafe"
)

// https://github.com/golang/go/pull/69543/commits/aad6b3b32c81795f86bc4a9e81aad94899daf520
// In Android version 11 and earlier, pidfd-related system calls
// are not allowed by the seccomp policy, which causes crashes due
// to SIGSYS signals.

//go:linkname checkPidfdOnce os.checkPidfdOnce
var checkPidfdOnce func() error

func execWorkaround(androidSDKVersion int) {
	if androidSDKVersion > 30 { // above Android 11
		return
	}

	checkPidfdOnce = func() error {
		return fmt.Errorf("unsupported Android version")
	}
}

@@ -17,10 +17,18 @@ import (

|

||||

"github.com/netbirdio/netbird/client/server"

|

||||

nbstatus "github.com/netbirdio/netbird/client/status"

|

||||

mgmProto "github.com/netbirdio/netbird/management/proto"

|

||||

"github.com/netbirdio/netbird/upload-server/types"

|

||||

)

|

||||

|

||||

const errCloseConnection = "Failed to close connection: %v"

|

||||

|

||||

var (

|

||||

logFileCount uint32

|

||||

systemInfoFlag bool

|

||||

uploadBundleFlag bool

|

||||

uploadBundleURLFlag string

|

||||

)

|

||||

|

||||

var debugCmd = &cobra.Command{

|

||||

Use: "debug",

|

||||

Short: "Debugging commands",

|

||||

@@ -88,12 +96,13 @@ func debugBundle(cmd *cobra.Command, _ []string) error {

 	client := proto.NewDaemonServiceClient(conn)
 	request := &proto.DebugBundleRequest{
-		Anonymize:  anonymizeFlag,
-		Status:     getStatusOutput(cmd, anonymizeFlag),
-		SystemInfo: debugSystemInfoFlag,
+		Anonymize:    anonymizeFlag,
+		Status:       getStatusOutput(cmd, anonymizeFlag),
+		SystemInfo:   systemInfoFlag,
+		LogFileCount: logFileCount,
 	}
-	if debugUploadBundle {
-		request.UploadURL = debugUploadBundleURL
+	if uploadBundleFlag {
+		request.UploadURL = uploadBundleURLFlag
 	}
 	resp, err := client.DebugBundle(cmd.Context(), request)
 	if err != nil {

@@ -105,7 +114,7 @@ func debugBundle(cmd *cobra.Command, _ []string) error {

|

||||

return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())

|

||||

}

|

||||

|

||||

if debugUploadBundle {

|

||||

if uploadBundleFlag {

|

||||

cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())

|

||||

}

|

||||

|

||||

@@ -223,12 +232,13 @@ func runForDuration(cmd *cobra.Command, args []string) error {

|

||||

headerPreDown := fmt.Sprintf("----- Netbird pre-down - Timestamp: %s - Duration: %s", time.Now().Format(time.RFC3339), duration)

|

||||

statusOutput = fmt.Sprintf("%s\n%s\n%s", statusOutput, headerPreDown, getStatusOutput(cmd, anonymizeFlag))

|

||||

request := &proto.DebugBundleRequest{

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: statusOutput,

|

||||

SystemInfo: debugSystemInfoFlag,

|

||||

Anonymize: anonymizeFlag,

|

||||

Status: statusOutput,

|

||||

SystemInfo: systemInfoFlag,

|

||||

LogFileCount: logFileCount,

|

||||

}

|

||||

if debugUploadBundle {

|

||||

request.UploadURL = debugUploadBundleURL

|

||||

if uploadBundleFlag {

|

||||

request.UploadURL = uploadBundleURLFlag

|

||||

}

|

||||

resp, err := client.DebugBundle(cmd.Context(), request)

|

||||

if err != nil {

|

||||

@@ -255,7 +265,7 @@ func runForDuration(cmd *cobra.Command, args []string) error {

|

||||

return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())

|

||||

}

|

||||

|

||||

if debugUploadBundle {

|

||||

if uploadBundleFlag {

|

||||

cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())

|

||||

}

|

||||

|

||||

@@ -375,3 +385,15 @@ func generateDebugBundle(config *internal.Config, recorder *peer.Status, connect

 	}
 	log.Infof("Generated debug bundle from SIGUSR1 at: %s", path)
 }
+
+func init() {
+	debugBundleCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")
+	debugBundleCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")
+	debugBundleCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")
+	debugBundleCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")
+
+	forCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")
+	forCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")
+	forCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")
+	forCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")
+}

@@ -22,7 +22,6 @@ import (

|

||||

"google.golang.org/grpc/credentials/insecure"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal"

|

||||

"github.com/netbirdio/netbird/upload-server/types"

|

||||

)

|

||||

|

||||

const (

|

||||

@@ -37,10 +36,7 @@ const (

|

||||

disableAutoConnectFlag = "disable-auto-connect"

|

||||

extraIFaceBlackListFlag = "extra-iface-blacklist"

|

||||

dnsRouteIntervalFlag = "dns-router-interval"

|

||||

systemInfoFlag = "system-info"

|

||||

enableLazyConnectionFlag = "enable-lazy-connection"

|

||||

uploadBundle = "upload-bundle"

|

||||

uploadBundleURL = "upload-bundle-url"

|

||||

)

|

||||

|

||||

var (

|

||||

@@ -73,10 +69,7 @@ var (

|

||||

autoConnectDisabled bool

|

||||

extraIFaceBlackList []string

|

||||

anonymizeFlag bool

|

||||

debugSystemInfoFlag bool

|

||||

dnsRouteInterval time.Duration

|

||||

debugUploadBundle bool

|

||||

debugUploadBundleURL string

|

||||

lazyConnEnabled bool

|

||||

|

||||

rootCmd = &cobra.Command{

|

||||

@@ -181,11 +174,8 @@ func init() {

|

||||

upCmd.PersistentFlags().BoolVar(&rosenpassEnabled, enableRosenpassFlag, false, "[Experimental] Enable Rosenpass feature. If enabled, the connection will be post-quantum secured via Rosenpass.")

|

||||

upCmd.PersistentFlags().BoolVar(&rosenpassPermissive, rosenpassPermissiveFlag, false, "[Experimental] Enable Rosenpass in permissive mode to allow this peer to accept WireGuard connections without requiring Rosenpass functionality from peers that do not have Rosenpass enabled.")

|

||||

upCmd.PersistentFlags().BoolVar(&autoConnectDisabled, disableAutoConnectFlag, false, "Disables auto-connect feature. If enabled, then the client won't connect automatically when the service starts.")

|

||||

upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand.")

|

||||

upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand. Note: this setting may be overridden by management configuration.")

|

||||

|

||||

debugCmd.PersistentFlags().BoolVarP(&debugSystemInfoFlag, systemInfoFlag, "S", true, "Adds system information to the debug bundle")

|

||||

debugCmd.PersistentFlags().BoolVarP(&debugUploadBundle, uploadBundle, "U", false, fmt.Sprintf("Uploads the debug bundle to a server from URL defined by %s", uploadBundleURL))

|

||||

debugCmd.PersistentFlags().StringVar(&debugUploadBundleURL, uploadBundleURL, types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")

|

||||

}

|

||||

|

||||

// SetupCloseHandler handles SIGTERM signal and exits with success

|

||||

|

||||

94

client/iface/bind/activity.go

Normal file


@@ -0,0 +1,94 @@

package bind

import (
	"net/netip"
	"sync"
	"sync/atomic"
	"time"

	log "github.com/sirupsen/logrus"

	"github.com/netbirdio/netbird/monotime"
)

const (
	saveFrequency = int64(5 * time.Second)
)

type PeerRecord struct {
	Address      netip.AddrPort
	LastActivity atomic.Int64 // UnixNano timestamp
}

type ActivityRecorder struct {
	mu         sync.RWMutex
	peers      map[string]*PeerRecord         // publicKey to PeerRecord map
	addrToPeer map[netip.AddrPort]*PeerRecord // address to PeerRecord map
}

func NewActivityRecorder() *ActivityRecorder {
	return &ActivityRecorder{
		peers:      make(map[string]*PeerRecord),
		addrToPeer: make(map[netip.AddrPort]*PeerRecord),
	}
}

// GetLastActivities returns a snapshot of peer last activity
func (r *ActivityRecorder) GetLastActivities() map[string]time.Time {
	r.mu.RLock()
	defer r.mu.RUnlock()

	activities := make(map[string]time.Time, len(r.peers))
	for key, record := range r.peers {
		unixNano := record.LastActivity.Load()
		activities[key] = time.Unix(0, unixNano)
	}
	return activities
}

// UpsertAddress adds or updates the address for a publicKey
func (r *ActivityRecorder) UpsertAddress(publicKey string, address netip.AddrPort) {
	r.mu.Lock()
	defer r.mu.Unlock()

	if pr, exists := r.peers[publicKey]; exists {
		delete(r.addrToPeer, pr.Address)
		pr.Address = address
	} else {
		record := &PeerRecord{
			Address: address,
		}
		record.LastActivity.Store(monotime.Now())
		r.peers[publicKey] = record
	}

	r.addrToPeer[address] = r.peers[publicKey]
}

func (r *ActivityRecorder) Remove(publicKey string) {
	r.mu.Lock()
	defer r.mu.Unlock()
	if record, exists := r.peers[publicKey]; exists {
		delete(r.addrToPeer, record.Address)
		delete(r.peers, publicKey)
	}
}

// record updates LastActivity for the given address using atomic store
func (r *ActivityRecorder) record(address netip.AddrPort) {
	r.mu.RLock()
	record, ok := r.addrToPeer[address]
	r.mu.RUnlock()
	if !ok {
		log.Warnf("could not find record for address %s", address)
		return
	}

	now := monotime.Now()
	last := record.LastActivity.Load()
	if now-last < saveFrequency {
		return
	}

	_ = record.LastActivity.CompareAndSwap(last, now)
}

27

client/iface/bind/activity_test.go

Normal file


@@ -0,0 +1,27 @@

package bind

import (
	"net/netip"
	"testing"
	"time"
)

func TestActivityRecorder_GetLastActivities(t *testing.T) {
	peer := "peer1"
	ar := NewActivityRecorder()
	ar.UpsertAddress("peer1", netip.MustParseAddrPort("192.168.0.5:51820"))
	activities := ar.GetLastActivities()

	p, ok := activities[peer]
	if !ok {
		t.Fatalf("Expected activity for peer %s, but got none", peer)
	}

	if p.IsZero() {
		t.Fatalf("Expected activity for peer %s, but got zero", peer)
	}

	if p.Before(time.Now().Add(-2 * time.Minute)) {
		t.Fatalf("Expected activity for peer %s to be recent, but got %v", peer, p)
	}
}

@@ -1,6 +1,7 @@

|

||||

package bind

|

||||

|

||||

import (

|

||||

"encoding/binary"

|

||||

"fmt"

|

||||

"net"

|

||||

"net/netip"

|

||||

@@ -51,22 +52,24 @@ type ICEBind struct {

|

||||

closedChanMu sync.RWMutex // protect the closeChan recreation from reading from it.

|

||||

closed bool

|

||||

|

||||

muUDPMux sync.Mutex

|

||||

udpMux *UniversalUDPMuxDefault

|

||||

address wgaddr.Address

|

||||

muUDPMux sync.Mutex

|

||||

udpMux *UniversalUDPMuxDefault

|

||||

address wgaddr.Address

|

||||

activityRecorder *ActivityRecorder

|

||||

}

|

||||

|

||||

func NewICEBind(transportNet transport.Net, filterFn FilterFn, address wgaddr.Address) *ICEBind {

|

||||

b, _ := wgConn.NewStdNetBind().(*wgConn.StdNetBind)

|

||||

ib := &ICEBind{

|

||||

StdNetBind: b,

|

||||

RecvChan: make(chan RecvMessage, 1),

|

||||

transportNet: transportNet,

|

||||

filterFn: filterFn,

|

||||

endpoints: make(map[netip.Addr]net.Conn),

|

||||

closedChan: make(chan struct{}),

|

||||

closed: true,

|

||||

address: address,

|

||||

StdNetBind: b,

|

||||

RecvChan: make(chan RecvMessage, 1),

|

||||

transportNet: transportNet,

|

||||

filterFn: filterFn,

|

||||

endpoints: make(map[netip.Addr]net.Conn),

|

||||

closedChan: make(chan struct{}),

|

||||

closed: true,

|

||||

address: address,

|

||||

activityRecorder: NewActivityRecorder(),

|

||||

}

|

||||

|

||||

rc := receiverCreator{

|

||||

@@ -100,6 +103,10 @@ func (s *ICEBind) Close() error {

|

||||

return s.StdNetBind.Close()

|

||||

}

|

||||

|

||||

func (s *ICEBind) ActivityRecorder() *ActivityRecorder {

|

||||

return s.activityRecorder

|

||||

}

|

||||

|

||||

// GetICEMux returns the ICE UDPMux that was created and used by ICEBind

|

||||

func (s *ICEBind) GetICEMux() (*UniversalUDPMuxDefault, error) {

|

||||

s.muUDPMux.Lock()

|

||||

@@ -199,6 +206,11 @@ func (s *ICEBind) createIPv4ReceiverFn(pc *ipv4.PacketConn, conn *net.UDPConn, r

|

||||

continue

|

||||

}

|

||||

addrPort := msg.Addr.(*net.UDPAddr).AddrPort()

|

||||

|

||||

if isTransportPkg(msg.Buffers, msg.N) {

|

||||

s.activityRecorder.record(addrPort)

|

||||

}

|

||||

|

||||

ep := &wgConn.StdNetEndpoint{AddrPort: addrPort} // TODO: remove allocation

|

||||

wgConn.GetSrcFromControl(msg.OOB[:msg.NN], ep)

|

||||

eps[i] = ep

|

||||

@@ -257,6 +269,13 @@ func (c *ICEBind) receiveRelayed(buffs [][]byte, sizes []int, eps []wgConn.Endpo

|

||||

copy(buffs[0], msg.Buffer)

|

||||

sizes[0] = len(msg.Buffer)

|

||||

eps[0] = wgConn.Endpoint(msg.Endpoint)

|

||||

|

||||

if isTransportPkg(buffs, sizes[0]) {

|

||||

if ep, ok := eps[0].(*Endpoint); ok {

|

||||

c.activityRecorder.record(ep.AddrPort)

|

||||

}

|

||||

}

|

||||

|

||||

return 1, nil

|

||||

}

|

||||

}

|

||||

@@ -272,3 +291,19 @@ func putMessages(msgs *[]ipv6.Message, msgsPool *sync.Pool) {

 	}
 	msgsPool.Put(msgs)
 }
+
+func isTransportPkg(buffers [][]byte, n int) bool {
+	// The first buffer should contain at least 4 bytes for type
+	if len(buffers[0]) < 4 {
+		return true
+	}
+
+	// WireGuard packet type is a little-endian uint32 at start
+	packetType := binary.LittleEndian.Uint32(buffers[0][:4])
+
+	// Check if packetType matches known WireGuard message types
+	if packetType == 4 && n > 32 {
+		return true
+	}
+	return false
+}
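`isTransportPkg` relies on two properties of the WireGuard wire format: the message type is the first little-endian 32-bit word (type 4 is transport data), and a transport message with an empty payload (a keepalive) is exactly 32 bytes, a 16-byte header plus a 16-byte authentication tag. The sketch below restates that check on a single byte slice. `carriesPayload` and the constant names are hypothetical, and unlike the diff it treats packets shorter than 4 bytes as non-data, which is an assumption of this sketch.

```go
package main

import "encoding/binary"

// WireGuard message types as defined by the protocol.
const (
	msgHandshakeInitiation = 1
	msgHandshakeResponse   = 2
	msgCookieReply         = 3
	msgTransportData       = 4
)

// keepaliveSize is the size of a transport message with an empty payload:
// a 16-byte header followed by the 16-byte authentication tag.
const keepaliveSize = 32

// carriesPayload reports whether pkt is a transport data message that is
// larger than a bare keepalive, i.e. it carries user traffic.
func carriesPayload(pkt []byte) bool {
	if len(pkt) < 4 {
		return false // assumption of this sketch: truncated packets are not data
	}
	msgType := binary.LittleEndian.Uint32(pkt[:4])
	return msgType == msgTransportData && len(pkt) > keepaliveSize
}
```

Filtering out handshakes and keepalives matters for activity tracking because both are exchanged even when no user traffic flows, so counting them would make an idle peer look active.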

@@ -276,3 +276,7 @@ func (c *KernelConfigurer) GetStats() (map[string]WGStats, error) {

|

||||

}

|

||||

return stats, nil

|

||||

}

|

||||

|

||||

func (c *KernelConfigurer) LastActivities() map[string]time.Time {

|

||||

return nil

|

||||

}

|

||||

|

||||

@@ -16,6 +16,7 @@ import (

|

||||

"golang.zx2c4.com/wireguard/device"

|

||||

"golang.zx2c4.com/wireguard/wgctrl/wgtypes"

|

||||

|

||||

"github.com/netbirdio/netbird/client/iface/bind"

|

||||

nbnet "github.com/netbirdio/netbird/util/net"

|

||||

)

|

||||

|

||||

@@ -36,16 +37,18 @@ const (

|

||||

var ErrAllowedIPNotFound = fmt.Errorf("allowed IP not found")

|

||||

|

||||

type WGUSPConfigurer struct {

|

||||

device *device.Device

|

||||

deviceName string

|

||||

device *device.Device

|

||||

deviceName string

|

||||

activityRecorder *bind.ActivityRecorder

|

||||

|

||||

uapiListener net.Listener

|

||||

}

|

||||

|

||||

func NewUSPConfigurer(device *device.Device, deviceName string) *WGUSPConfigurer {

|

||||

func NewUSPConfigurer(device *device.Device, deviceName string, activityRecorder *bind.ActivityRecorder) *WGUSPConfigurer {

|

||||

wgCfg := &WGUSPConfigurer{

|

||||

device: device,

|

||||

deviceName: deviceName,

|

||||

device: device,

|

||||

deviceName: deviceName,

|

||||

activityRecorder: activityRecorder,

|

||||

}

|

||||

wgCfg.startUAPI()

|

||||

return wgCfg

|

||||

@@ -87,7 +90,19 @@ func (c *WGUSPConfigurer) UpdatePeer(peerKey string, allowedIps []netip.Prefix,

 		Peers: []wgtypes.PeerConfig{peer},
 	}
 
-	return c.device.IpcSet(toWgUserspaceString(config))
+	if ipcErr := c.device.IpcSet(toWgUserspaceString(config)); ipcErr != nil {
+		return ipcErr
+	}
+
+	if endpoint != nil {
+		addr, err := netip.ParseAddr(endpoint.IP.String())
+		if err != nil {
+			return fmt.Errorf("failed to parse endpoint address: %w", err)
+		}
+		addrPort := netip.AddrPortFrom(addr, uint16(endpoint.Port))
+		c.activityRecorder.UpsertAddress(peerKey, addrPort)
+	}
+	return nil
 }
 
 func (c *WGUSPConfigurer) RemovePeer(peerKey string) error {

@@ -104,7 +119,10 @@ func (c *WGUSPConfigurer) RemovePeer(peerKey string) error {

|

||||

config := wgtypes.Config{

|

||||

Peers: []wgtypes.PeerConfig{peer},

|

||||

}

|

||||

return c.device.IpcSet(toWgUserspaceString(config))

|

||||

ipcErr := c.device.IpcSet(toWgUserspaceString(config))

|

||||

|

||||

c.activityRecorder.Remove(peerKey)

|

||||

return ipcErr

|

||||

}

|

||||

|

||||

func (c *WGUSPConfigurer) AddAllowedIP(peerKey string, allowedIP netip.Prefix) error {

|

||||

@@ -205,6 +223,10 @@ func (c *WGUSPConfigurer) FullStats() (*Stats, error) {

|

||||

return parseStatus(c.deviceName, ipcStr)

|

||||

}

|

||||

|

||||

func (c *WGUSPConfigurer) LastActivities() map[string]time.Time {

|

||||

return c.activityRecorder.GetLastActivities()

|

||||

}

|

||||

|

||||

// startUAPI starts the UAPI listener for managing the WireGuard interface via external tool

|

||||

func (t *WGUSPConfigurer) startUAPI() {

|

||||

var err error

|

||||

|

||||

@@ -79,7 +79,7 @@ func (t *WGTunDevice) Create(routes []string, dns string, searchDomains []string

|

||||

// this helps with support for the older NetBird clients that had a hardcoded direct mode

|

||||

// t.device.DisableSomeRoamingForBrokenMobileSemantics()

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

t.device.Close()

|

||||

|

||||

@@ -61,7 +61,7 @@ func (t *TunDevice) Create() (WGConfigurer, error) {

|

||||

return nil, fmt.Errorf("error assigning ip: %s", err)

|

||||

}

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

t.device.Close()

|

||||

|

||||

@@ -71,7 +71,7 @@ func (t *TunDevice) Create() (WGConfigurer, error) {

|

||||

// this helps with support for the older NetBird clients that had a hardcoded direct mode

|

||||

// t.device.DisableSomeRoamingForBrokenMobileSemantics()

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

t.device.Close()

|

||||

|

||||

@@ -72,7 +72,7 @@ func (t *TunNetstackDevice) Create() (WGConfigurer, error) {

|

||||

device.NewLogger(wgLogLevel(), "[netbird] "),

|

||||

)

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

_ = tunIface.Close()

|

||||

|

||||

@@ -64,7 +64,7 @@ func (t *USPDevice) Create() (WGConfigurer, error) {

|

||||

return nil, fmt.Errorf("error assigning ip: %s", err)

|

||||

}

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

t.device.Close()

|

||||

|

||||

@@ -94,7 +94,7 @@ func (t *TunDevice) Create() (WGConfigurer, error) {

|

||||

return nil, fmt.Errorf("error assigning ip: %s", err)

|

||||

}

|

||||

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name)

|

||||

t.configurer = configurer.NewUSPConfigurer(t.device, t.name, t.iceBind.ActivityRecorder())

|

||||

err = t.configurer.ConfigureInterface(t.key, t.port)

|

||||

if err != nil {

|

||||

t.device.Close()

|

||||

|

||||

@@ -19,4 +19,5 @@ type WGConfigurer interface {

|

||||

Close()

|

||||

GetStats() (map[string]configurer.WGStats, error)

|

||||

FullStats() (*configurer.Stats, error)

|

||||

LastActivities() map[string]time.Time

|

||||

}

|

||||

|

||||

@@ -29,6 +29,11 @@ const (

|

||||

WgInterfaceDefault = configurer.WgInterfaceDefault

|

||||

)

|

||||

|

||||

var (

|

||||

// ErrIfaceNotFound is returned when the WireGuard interface is not found

|

||||

ErrIfaceNotFound = fmt.Errorf("wireguard interface not found")

|

||||

)

|

||||

|

||||

type wgProxyFactory interface {

|

||||

GetProxy() wgproxy.Proxy

|

||||

Free() error

|

||||

@@ -117,6 +122,9 @@ func (w *WGIface) UpdateAddr(newAddr string) error {

|

||||

func (w *WGIface) UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAlive time.Duration, endpoint *net.UDPAddr, preSharedKey *wgtypes.Key) error {

|

||||

w.mu.Lock()

|

||||

defer w.mu.Unlock()

|

||||

if w.configurer == nil {

|

||||

return ErrIfaceNotFound

|

||||

}

|

||||

|

||||

log.Debugf("updating interface %s peer %s, endpoint %s, allowedIPs %v", w.tun.DeviceName(), peerKey, endpoint, allowedIps)

|

||||

return w.configurer.UpdatePeer(peerKey, allowedIps, keepAlive, endpoint, preSharedKey)

|

||||

@@ -126,6 +134,9 @@ func (w *WGIface) UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAliv

|

||||

func (w *WGIface) RemovePeer(peerKey string) error {

|

||||

w.mu.Lock()

|

||||

defer w.mu.Unlock()

|

||||

if w.configurer == nil {

|

||||

return ErrIfaceNotFound

|

||||

}

|

||||

|

||||

log.Debugf("Removing peer %s from interface %s ", peerKey, w.tun.DeviceName())

|

||||

return w.configurer.RemovePeer(peerKey)

|

||||

@@ -135,6 +146,9 @@ func (w *WGIface) RemovePeer(peerKey string) error {

|

||||

func (w *WGIface) AddAllowedIP(peerKey string, allowedIP netip.Prefix) error {

|

||||

w.mu.Lock()

|

||||

defer w.mu.Unlock()

|

||||

if w.configurer == nil {

|

||||

return ErrIfaceNotFound

|

||||

}

|

||||

|

||||

log.Debugf("Adding allowed IP to interface %s and peer %s: allowed IP %s ", w.tun.DeviceName(), peerKey, allowedIP)

|

||||

return w.configurer.AddAllowedIP(peerKey, allowedIP)

|

||||

@@ -144,6 +158,9 @@ func (w *WGIface) AddAllowedIP(peerKey string, allowedIP netip.Prefix) error {

|

||||

func (w *WGIface) RemoveAllowedIP(peerKey string, allowedIP netip.Prefix) error {

|

||||

w.mu.Lock()

|

||||

defer w.mu.Unlock()

|

||||

if w.configurer == nil {

|

||||

return ErrIfaceNotFound

|

||||

}

|

||||

|

||||

log.Debugf("Removing allowed IP from interface %s and peer %s: allowed IP %s ", w.tun.DeviceName(), peerKey, allowedIP)

|

||||

return w.configurer.RemoveAllowedIP(peerKey, allowedIP)

|

||||

@@ -214,10 +231,29 @@ func (w *WGIface) GetWGDevice() *wgdevice.Device {

|

||||

|

||||

// GetStats returns the last handshake time, rx and tx bytes

|

||||

func (w *WGIface) GetStats() (map[string]configurer.WGStats, error) {

|

||||

if w.configurer == nil {

|

||||

return nil, ErrIfaceNotFound

|

||||

}

|

||||

return w.configurer.GetStats()

|

||||

}

|

||||

|

||||

func (w *WGIface) LastActivities() map[string]time.Time {

|

||||

w.mu.Lock()

|

||||

defer w.mu.Unlock()

|

||||

|

||||

if w.configurer == nil {

|

||||

return nil

|

||||

}

|

||||

|

||||

return w.configurer.LastActivities()

|

||||

|

||||

}

|

||||

|

||||

func (w *WGIface) FullStats() (*configurer.Stats, error) {

|

||||

if w.configurer == nil {

|

||||

return nil, ErrIfaceNotFound

|

||||

}

|

||||

|

||||

return w.configurer.FullStats()

|

||||

}

|

||||

|

||||

|

||||

@@ -12,7 +12,6 @@ import (

|

||||

"github.com/netbirdio/netbird/client/internal/lazyconn"

|

||||

"github.com/netbirdio/netbird/client/internal/lazyconn/manager"

|

||||

"github.com/netbirdio/netbird/client/internal/peer"

|

||||

"github.com/netbirdio/netbird/client/internal/peer/dispatcher"

|

||||

"github.com/netbirdio/netbird/client/internal/peerstore"

|

||||

"github.com/netbirdio/netbird/route"

|

||||

)

|

||||

@@ -26,11 +25,11 @@ import (

|

||||

//

|

||||

// The implementation is not thread-safe; it is protected by engine.syncMsgMux.

|

||||

type ConnMgr struct {

|

||||

peerStore *peerstore.Store

|

||||

statusRecorder *peer.Status

|

||||

iface lazyconn.WGIface

|

||||

dispatcher *dispatcher.ConnectionDispatcher

|

||||

enabledLocally bool

|

||||

peerStore *peerstore.Store

|

||||

statusRecorder *peer.Status

|

||||

iface lazyconn.WGIface

|

||||

enabledLocally bool

|

||||

rosenpassEnabled bool

|

||||

|

||||

lazyConnMgr *manager.Manager

|

||||

|

||||

@@ -39,12 +38,12 @@ type ConnMgr struct {

|

||||

lazyCtxCancel context.CancelFunc

|

||||

}

|

||||

|

||||

func NewConnMgr(engineConfig *EngineConfig, statusRecorder *peer.Status, peerStore *peerstore.Store, iface lazyconn.WGIface, dispatcher *dispatcher.ConnectionDispatcher) *ConnMgr {

|

||||

func NewConnMgr(engineConfig *EngineConfig, statusRecorder *peer.Status, peerStore *peerstore.Store, iface lazyconn.WGIface) *ConnMgr {

|

||||

e := &ConnMgr{

|

||||

peerStore: peerStore,

|

||||

statusRecorder: statusRecorder,

|

||||

iface: iface,

|

||||

dispatcher: dispatcher,

|

||||

peerStore: peerStore,

|

||||

statusRecorder: statusRecorder,

|

||||

iface: iface,

|

||||

rosenpassEnabled: engineConfig.RosenpassEnabled,

|

||||

}

|

||||

if engineConfig.LazyConnectionEnabled || lazyconn.IsLazyConnEnabledByEnv() {

|

||||

e.enabledLocally = true

|

||||

@@ -64,6 +63,11 @@ func (e *ConnMgr) Start(ctx context.Context) {

|

||||

return

|

||||

}

|

||||

|

||||

if e.rosenpassEnabled {

|

||||

log.Warnf("rosenpass connection manager is enabled, lazy connection manager will not be started")

|

||||

return

|

||||

}

|

||||

|

||||

e.initLazyManager(ctx)

|

||||

e.statusRecorder.UpdateLazyConnection(true)

|

||||

}

|

||||

@@ -83,7 +87,12 @@ func (e *ConnMgr) UpdatedRemoteFeatureFlag(ctx context.Context, enabled bool) er

|

||||

return nil

|

||||

}

|

||||

|

||||

log.Infof("lazy connection manager is enabled by management feature flag")

|

||||

if e.rosenpassEnabled {

|

||||

log.Infof("rosenpass connection manager is enabled, lazy connection manager will not be started")

|

||||

return nil

|

||||

}

|

||||

|

||||

log.Warnf("lazy connection manager is enabled by management feature flag")

|

||||

e.initLazyManager(ctx)

|

||||

e.statusRecorder.UpdateLazyConnection(true)

|

||||

return e.addPeersToLazyConnManager()

|

||||

@@ -133,7 +142,7 @@ func (e *ConnMgr) SetExcludeList(ctx context.Context, peerIDs map[string]bool) {

|

||||

excludedPeers = append(excludedPeers, lazyPeerCfg)

|

||||

}

|

||||

|

||||

added := e.lazyConnMgr.ExcludePeer(e.lazyCtx, excludedPeers)

|

||||

added := e.lazyConnMgr.ExcludePeer(excludedPeers)

|

||||

for _, peerID := range added {

|

||||

var peerConn *peer.Conn

|

||||

var exists bool

|

||||

@@ -175,7 +184,7 @@ func (e *ConnMgr) AddPeerConn(ctx context.Context, peerKey string, conn *peer.Co

|

||||

PeerConnID: conn.ConnID(),

|

||||

Log: conn.Log,

|

||||

}

|

||||

excluded, err := e.lazyConnMgr.AddPeer(e.lazyCtx, lazyPeerCfg)

|

||||

excluded, err := e.lazyConnMgr.AddPeer(lazyPeerCfg)

|

||||

if err != nil {

|

||||

conn.Log.Errorf("failed to add peer to lazyconn manager: %v", err)

|

||||

if err := conn.Open(ctx); err != nil {

|

||||

@@ -201,7 +210,7 @@ func (e *ConnMgr) RemovePeerConn(peerKey string) {

|

||||

if !ok {

|

||||

return

|

||||

}

|

||||

defer conn.Close()

|

||||

defer conn.Close(false)

|

||||

|

||||

if !e.isStartedWithLazyMgr() {

|

||||

return

|

||||

@@ -211,23 +220,28 @@ func (e *ConnMgr) RemovePeerConn(peerKey string) {

|

||||

conn.Log.Infof("removed peer from lazy conn manager")

|

||||

}

|

||||

|

||||

func (e *ConnMgr) OnSignalMsg(ctx context.Context, peerKey string) (*peer.Conn, bool) {

|

||||

conn, ok := e.peerStore.PeerConn(peerKey)

|

||||

if !ok {

|

||||

return nil, false

|

||||

}

|

||||

|

||||

func (e *ConnMgr) ActivatePeer(ctx context.Context, conn *peer.Conn) {

|

||||

if !e.isStartedWithLazyMgr() {

|

||||

return conn, true

|

||||

return

|

||||

}

|

||||

|

||||

if found := e.lazyConnMgr.ActivatePeer(e.lazyCtx, peerKey); found {

|

||||

if found := e.lazyConnMgr.ActivatePeer(conn.GetKey()); found {

|

||||

conn.Log.Infof("activated peer from inactive state")

|

||||

if err := conn.Open(ctx); err != nil {

|

||||

conn.Log.Errorf("failed to open connection: %v", err)

|

||||

}

|

||||

}

|

||||

return conn, true

|

||||

}

|

||||

|

||||

// DeactivatePeer deactivates a peer connection in the lazy connection manager.

|

||||

// If locally the lazy connection is disabled, we force the peer connection open.

|

||||

func (e *ConnMgr) DeactivatePeer(conn *peer.Conn) {

|

||||

if !e.isStartedWithLazyMgr() {

|

||||

return

|

||||

}

|

||||

|

||||

conn.Log.Infof("closing peer connection: remote peer initiated inactive, idle lazy state and sent GOAWAY")

|

||||

e.lazyConnMgr.DeactivatePeer(conn.ConnID())

|

||||

}

|

||||

|

||||

func (e *ConnMgr) Close() {

|

||||

@@ -244,7 +258,7 @@ func (e *ConnMgr) initLazyManager(engineCtx context.Context) {

|

||||

cfg := manager.Config{

|

||||

InactivityThreshold: inactivityThresholdEnv(),

|

||||

}

|

||||

e.lazyConnMgr = manager.NewManager(cfg, engineCtx, e.peerStore, e.iface, e.dispatcher)

|

||||

e.lazyConnMgr = manager.NewManager(cfg, engineCtx, e.peerStore, e.iface)

|

||||

|

||||

e.lazyCtx, e.lazyCtxCancel = context.WithCancel(engineCtx)

|

||||

|

||||

@@ -275,7 +289,7 @@ func (e *ConnMgr) addPeersToLazyConnManager() error {

|

||||

lazyPeerCfgs = append(lazyPeerCfgs, lazyPeerCfg)

|

||||

}

|

||||

|

||||

return e.lazyConnMgr.AddActivePeers(e.lazyCtx, lazyPeerCfgs)

|

||||

return e.lazyConnMgr.AddActivePeers(lazyPeerCfgs)

|

||||

}

|

||||

|

||||

func (e *ConnMgr) closeManager(ctx context.Context) {

|

||||

|

||||

@@ -167,6 +167,7 @@ type BundleGenerator struct {

|

||||

anonymize bool

|

||||

clientStatus string

|

||||

includeSystemInfo bool

|

||||

logFileCount uint32

|

||||

|

||||

archive *zip.Writer

|

||||

}

|

||||

@@ -175,6 +176,7 @@ type BundleConfig struct {

|

||||

Anonymize bool

|

||||

ClientStatus string

|

||||

IncludeSystemInfo bool

|

||||

LogFileCount uint32

|

||||

}

|

||||

|

||||

type GeneratorDependencies struct {

|

||||

@@ -185,6 +187,12 @@ type GeneratorDependencies struct {

|

||||

}

|

||||

|

||||

func NewBundleGenerator(deps GeneratorDependencies, cfg BundleConfig) *BundleGenerator {

|

||||

// Default to 1 log file for backward compatibility when 0 is provided

|

||||

logFileCount := cfg.LogFileCount

|

||||

if logFileCount == 0 {

|

||||

logFileCount = 1

|

||||

}

|

||||

|

||||

return &BundleGenerator{

|

||||

anonymizer: anonymize.NewAnonymizer(anonymize.DefaultAddresses()),

|

||||

|

||||

@@ -196,6 +204,7 @@ func NewBundleGenerator(deps GeneratorDependencies, cfg BundleConfig) *BundleGen

|

||||

anonymize: cfg.Anonymize,

|

||||

clientStatus: cfg.ClientStatus,

|

||||

includeSystemInfo: cfg.IncludeSystemInfo,

|

||||

logFileCount: logFileCount,

|

||||

}

|

||||

}

|

||||

|

||||

@@ -573,32 +582,8 @@ func (g *BundleGenerator) addLogfile() error {

|

||||

return fmt.Errorf("add client log file to zip: %w", err)

|

||||

}

|

||||

|

||||

// add latest rotated log file

|

||||

pattern := filepath.Join(logDir, "client-*.log.gz")

|

||||

files, err := filepath.Glob(pattern)

|

||||

if err != nil {

|

||||

log.Warnf("failed to glob rotated logs: %v", err)

|

||||

} else if len(files) > 0 {

|

||||

// pick the file with the latest ModTime

|

||||

sort.Slice(files, func(i, j int) bool {

|

||||

fi, err := os.Stat(files[i])

|

||||

if err != nil {

|

||||

log.Warnf("failed to stat rotated log %s: %v", files[i], err)

|

||||

return false

|

||||

}

|

||||

fj, err := os.Stat(files[j])

|

||||

if err != nil {

|

||||

log.Warnf("failed to stat rotated log %s: %v", files[j], err)

|

||||

return false

|

||||

}

|

||||

return fi.ModTime().Before(fj.ModTime())

|

||||

})

|

||||

latest := files[len(files)-1]

|

||||

name := filepath.Base(latest)

|

||||

if err := g.addSingleLogFileGz(latest, name); err != nil {

|

||||

log.Warnf("failed to add rotated log %s: %v", name, err)

|

||||

}

|

||||

}

|

||||

// add rotated log files based on logFileCount

|

||||

g.addRotatedLogFiles(logDir)

|

||||

|

||||

stdErrLogPath := filepath.Join(logDir, errorLogFile)

|

||||

stdoutLogPath := filepath.Join(logDir, stdoutLogFile)

|

||||

@@ -682,6 +667,52 @@ func (g *BundleGenerator) addSingleLogFileGz(logPath, targetName string) error {

|

||||

return nil

|

||||

}

|

||||

|

||||

// addRotatedLogFiles adds rotated log files to the bundle based on logFileCount

|

||||

func (g *BundleGenerator) addRotatedLogFiles(logDir string) {

|

||||

if g.logFileCount == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

pattern := filepath.Join(logDir, "client-*.log.gz")

|

||||

files, err := filepath.Glob(pattern)

|

||||

if err != nil {

|

||||

log.Warnf("failed to glob rotated logs: %v", err)

|

||||

return

|

||||

}

|

||||

|

||||

if len(files) == 0 {

|

||||

return

|

||||

}

|

||||

|

||||

// sort files by modification time (newest first)

|

||||

sort.Slice(files, func(i, j int) bool {

|

||||

fi, err := os.Stat(files[i])

|

||||

if err != nil {

|

||||

log.Warnf("failed to stat rotated log %s: %v", files[i], err)

|

||||

return false

|

||||

}

|

||||

fj, err := os.Stat(files[j])

|

||||

if err != nil {

|

||||

log.Warnf("failed to stat rotated log %s: %v", files[j], err)

|

||||

return false

|

||||

}

|

||||

return fi.ModTime().After(fj.ModTime())

|

||||

})

|

||||

|

||||

// include up to logFileCount rotated files

|

||||

maxFiles := int(g.logFileCount)

|

||||

if maxFiles > len(files) {

|

||||

maxFiles = len(files)

|

||||

}

|

||||

|

||||

for i := 0; i < maxFiles; i++ {

|

||||

name := filepath.Base(files[i])

|

||||

if err := g.addSingleLogFileGz(files[i], name); err != nil {

|

||||

log.Warnf("failed to add rotated log %s: %v", name, err)

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func (g *BundleGenerator) addFileToZip(reader io.Reader, filename string) error {

|

||||

header := &zip.FileHeader{

|

||||

Name: filename,

|

||||

|

||||

@@ -36,7 +36,6 @@ import (

|

||||

nftypes "github.com/netbirdio/netbird/client/internal/netflow/types"

|

||||

"github.com/netbirdio/netbird/client/internal/networkmonitor"

|

||||

"github.com/netbirdio/netbird/client/internal/peer"

|

||||

"github.com/netbirdio/netbird/client/internal/peer/dispatcher"

|

||||

"github.com/netbirdio/netbird/client/internal/peer/guard"

|

||||

icemaker "github.com/netbirdio/netbird/client/internal/peer/ice"

|

||||

"github.com/netbirdio/netbird/client/internal/peerstore"

|

||||

@@ -175,8 +174,7 @@ type Engine struct {

|

||||

|

||||

sshServer sshServer

|

||||

|

||||

statusRecorder *peer.Status

|

||||

peerConnDispatcher *dispatcher.ConnectionDispatcher

|

||||

statusRecorder *peer.Status

|

||||

|

||||

firewall firewallManager.Manager

|

||||

routeManager routemanager.Manager

|

||||

@@ -463,9 +461,7 @@ func (e *Engine) Start() error {

|

||||

NATExternalIPs: e.parseNATExternalIPMappings(),

|

||||

}

|

||||

|

||||

e.peerConnDispatcher = dispatcher.NewConnectionDispatcher()

|

||||

|

||||

e.connMgr = NewConnMgr(e.config, e.statusRecorder, e.peerStore, wgIface, e.peerConnDispatcher)

|

||||

e.connMgr = NewConnMgr(e.config, e.statusRecorder, e.peerStore, wgIface)

|

||||

e.connMgr.Start(e.ctx)

|

||||

|

||||

e.srWatcher = guard.NewSRWatcher(e.signal, e.relayManager, e.mobileDep.IFaceDiscover, iceCfg)

|

||||

@@ -1222,7 +1218,7 @@ func (e *Engine) addNewPeer(peerConfig *mgmProto.RemotePeerConfig) error {

|

||||

}

|

||||

|

||||

if exists := e.connMgr.AddPeerConn(e.ctx, peerKey, conn); exists {

|

||||

conn.Close()

|

||||

conn.Close(false)

|

||||

return fmt.Errorf("peer already exists: %s", peerKey)

|

||||

}

|

||||

|

||||

@@ -1270,13 +1266,12 @@ func (e *Engine) createPeerConn(pubKey string, allowedIPs []netip.Prefix, agentV

|

||||

}

|

||||

|

||||

serviceDependencies := peer.ServiceDependencies{

|

||||

StatusRecorder: e.statusRecorder,

|

||||

Signaler: e.signaler,

|

||||

IFaceDiscover: e.mobileDep.IFaceDiscover,

|

||||

RelayManager: e.relayManager,

|

||||

SrWatcher: e.srWatcher,

|

||||

Semaphore: e.connSemaphore,

|

||||

PeerConnDispatcher: e.peerConnDispatcher,

|

||||

StatusRecorder: e.statusRecorder,

|

||||

Signaler: e.signaler,

|

||||

IFaceDiscover: e.mobileDep.IFaceDiscover,

|

||||

RelayManager: e.relayManager,

|

||||

SrWatcher: e.srWatcher,

|

||||

Semaphore: e.connSemaphore,

|

||||

}

|

||||

peerConn, err := peer.NewConn(config, serviceDependencies)

|

||||

if err != nil {

|

||||

@@ -1299,11 +1294,16 @@ func (e *Engine) receiveSignalEvents() {

|

||||

e.syncMsgMux.Lock()

|

||||

defer e.syncMsgMux.Unlock()

|

||||

|

||||

conn, ok := e.connMgr.OnSignalMsg(e.ctx, msg.Key)

|

||||

conn, ok := e.peerStore.PeerConn(msg.Key)

|

||||

if !ok {

|

||||

return fmt.Errorf("wrongly addressed message %s", msg.Key)

|

||||

}

|

||||

|

||||

msgType := msg.GetBody().GetType()

|

||||

if msgType != sProto.Body_GO_IDLE {

|

||||

e.connMgr.ActivatePeer(e.ctx, conn)

|

||||

}

|

||||

|

||||

switch msg.GetBody().Type {

|

||||

case sProto.Body_OFFER:

|

||||

remoteCred, err := signal.UnMarshalCredential(msg)

|

||||

@@ -1360,6 +1360,8 @@ func (e *Engine) receiveSignalEvents() {

|

||||

|

||||

go conn.OnRemoteCandidate(candidate, e.routeManager.GetClientRoutes())

|

||||

case sProto.Body_MODE:

|

||||

case sProto.Body_GO_IDLE:

|

||||

e.connMgr.DeactivatePeer(conn)

|

||||

}

|

||||

|

||||

return nil

|

||||

|

||||

@@ -36,7 +36,6 @@ import (

|

||||

"github.com/netbirdio/netbird/client/iface/wgproxy"

|

||||

"github.com/netbirdio/netbird/client/internal/dns"

|

||||

"github.com/netbirdio/netbird/client/internal/peer"

|

||||

"github.com/netbirdio/netbird/client/internal/peer/dispatcher"

|

||||

"github.com/netbirdio/netbird/client/internal/peer/guard"

|

||||

icemaker "github.com/netbirdio/netbird/client/internal/peer/ice"

|

||||

"github.com/netbirdio/netbird/client/internal/routemanager"

|

||||

@@ -97,6 +96,7 @@ type MockWGIface struct {

|

||||

GetInterfaceGUIDStringFunc func() (string, error)

|

||||

GetProxyFunc func() wgproxy.Proxy

|

||||

GetNetFunc func() *netstack.Net

|

||||

LastActivitiesFunc func() map[string]time.Time

|

||||

}

|

||||

|

||||

func (m *MockWGIface) FullStats() (*configurer.Stats, error) {

|

||||

@@ -187,6 +187,13 @@ func (m *MockWGIface) GetNet() *netstack.Net {

|

||||

return m.GetNetFunc()

|

||||

}

|

||||

|

||||

func (m *MockWGIface) LastActivities() map[string]time.Time {

|

||||

if m.LastActivitiesFunc != nil {

|

||||

return m.LastActivitiesFunc()

|

||||

}

|

||||

return nil

|

||||

}

|

||||

|

||||

func TestMain(m *testing.M) {

|

||||

_ = util.InitLog("debug", "console")

|

||||

code := m.Run()

|

||||

@@ -430,7 +437,7 @@ func TestEngine_UpdateNetworkMap(t *testing.T) {

|

||||

engine.udpMux = bind.NewUniversalUDPMuxDefault(bind.UniversalUDPMuxParams{UDPConn: conn})

|

||||

engine.ctx = ctx

|

||||

engine.srWatcher = guard.NewSRWatcher(nil, nil, nil, icemaker.Config{})

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, wgIface, dispatcher.NewConnectionDispatcher())

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, wgIface)

|

||||

engine.connMgr.Start(ctx)

|

||||

|

||||

type testCase struct {

|

||||

@@ -819,7 +826,7 @@ func TestEngine_UpdateNetworkMapWithRoutes(t *testing.T) {

|

||||

|

||||

engine.routeManager = mockRouteManager

|

||||

engine.dnsServer = &dns.MockServer{}

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, engine.wgInterface, dispatcher.NewConnectionDispatcher())

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, engine.wgInterface)

|

||||

engine.connMgr.Start(ctx)

|

||||

|

||||

defer func() {

|

||||

@@ -1017,7 +1024,7 @@ func TestEngine_UpdateNetworkMapWithDNSUpdate(t *testing.T) {

|

||||

}

|

||||

|

||||

engine.dnsServer = mockDNSServer

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, engine.wgInterface, dispatcher.NewConnectionDispatcher())

|

||||

engine.connMgr = NewConnMgr(engine.config, engine.statusRecorder, engine.peerStore, engine.wgInterface)

|

||||

engine.connMgr.Start(ctx)

|

||||

|

||||

defer func() {

|

||||

|

||||

@@ -38,4 +38,5 @@ type wgIfaceBase interface {

|

||||

GetStats() (map[string]configurer.WGStats, error)

|

||||

GetNet() *netstack.Net

|

||||

FullStats() (*configurer.Stats, error)

|

||||

LastActivities() map[string]time.Time

|

||||

}

|

||||

|

||||

@@ -13,7 +13,7 @@ import (

|

||||

|

||||

// Listener is not thread-safe. Do not call Close before ReadPackets; doing so causes blocking.

|

||||

type Listener struct {

|

||||

wgIface lazyconn.WGIface

|

||||

wgIface WgInterface

|

||||

peerCfg lazyconn.PeerConfig

|

||||

conn *net.UDPConn

|

||||

endpoint *net.UDPAddr

|

||||

@@ -22,7 +22,7 @@ type Listener struct {

|

||||

isClosed atomic.Bool // used to avoid an error log when closing the listener

|

||||

}

|

||||

|

||||

func NewListener(wgIface lazyconn.WGIface, cfg lazyconn.PeerConfig) (*Listener, error) {

|

||||

func NewListener(wgIface WgInterface, cfg lazyconn.PeerConfig) (*Listener, error) {

|

||||

d := &Listener{

|

||||

wgIface: wgIface,

|

||||

peerCfg: cfg,

|

||||

|

||||

@@ -1,18 +1,27 @@

|

||||

package activity

|

||||

|

||||

import (

|

||||

"net"

|

||||

"net/netip"

|

||||

"sync"

|

||||

"time"

|

||||

|

||||

log "github.com/sirupsen/logrus"

|

||||

"golang.zx2c4.com/wireguard/wgctrl/wgtypes"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal/lazyconn"

|

||||

peerid "github.com/netbirdio/netbird/client/internal/peer/id"

|

||||

)

|

||||

|

||||

type WgInterface interface {

|

||||

RemovePeer(peerKey string) error

|

||||

UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAlive time.Duration, endpoint *net.UDPAddr, preSharedKey *wgtypes.Key) error

|

||||

}

|

||||

|

||||

type Manager struct {

|

||||

OnActivityChan chan peerid.ConnID

|

||||

|

||||

wgIface lazyconn.WGIface

|

||||

wgIface WgInterface

|

||||

|

||||

peers map[peerid.ConnID]*Listener

|

||||

done chan struct{}

|

||||

@@ -20,7 +29,7 @@ type Manager struct {

|

||||

mu sync.Mutex

|

||||

}

|

||||

|

||||

func NewManager(wgIface lazyconn.WGIface) *Manager {

|

||||

func NewManager(wgIface WgInterface) *Manager {

|

||||

m := &Manager{

|

||||

OnActivityChan: make(chan peerid.ConnID, 1),

|

||||

wgIface: wgIface,

|

||||

|

||||

@@ -1,75 +0,0 @@

|

||||

package inactivity

|

||||

|

||||

import (

|

||||

"context"

|

||||

"time"

|

||||

|

||||

peer "github.com/netbirdio/netbird/client/internal/peer/id"

|

||||

)

|

||||

|

||||

const (

|

||||

DefaultInactivityThreshold = 60 * time.Minute // idle after 1 hour inactivity

|

||||

MinimumInactivityThreshold = 3 * time.Minute

|

||||

)

|

||||

|

||||

type Monitor struct {

|

||||

id peer.ConnID

|

||||

timer *time.Timer

|

||||

cancel context.CancelFunc

|

||||

inactivityThreshold time.Duration

|

||||

}

|

||||

|

||||

func NewInactivityMonitor(peerID peer.ConnID, threshold time.Duration) *Monitor {

|

||||

i := &Monitor{

|

||||

id: peerID,

|

||||

timer: time.NewTimer(0),

|

||||

inactivityThreshold: threshold,

|

||||

}

|

||||

i.timer.Stop()

|

||||

return i

|

||||

}

|

||||

|

||||

func (i *Monitor) Start(ctx context.Context, timeoutChan chan peer.ConnID) {

|

||||

i.timer.Reset(i.inactivityThreshold)

|

||||

defer i.timer.Stop()

|

||||

|

||||

ctx, i.cancel = context.WithCancel(ctx)

|

||||

defer func() {

|

||||

defer i.cancel()

|

||||

select {

|

||||

case <-i.timer.C:

|

||||

default:

|

||||

}

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-i.timer.C:

|

||||

select {

|

||||

case timeoutChan <- i.id:

|

||||

case <-ctx.Done():

|

||||

return

|

||||

}

|

||||

case <-ctx.Done():

|

||||

return

|

||||

}

|

||||

}

|

||||

|

||||

func (i *Monitor) Stop() {

|

||||

if i.cancel == nil {

|

||||

return

|

||||

}

|

||||

i.cancel()

|

||||

}

|

||||

|

||||

func (i *Monitor) PauseTimer() {

|

||||

i.timer.Stop()

|

||||

}

|

||||

|

||||

func (i *Monitor) ResetTimer() {

|

||||

i.timer.Reset(i.inactivityThreshold)

|

||||

}

|

||||

|

||||

func (i *Monitor) ResetMonitor(ctx context.Context, timeoutChan chan peer.ConnID) {

|

||||

i.Stop()

|

||||

go i.Start(ctx, timeoutChan)

|

||||

}

|

||||

@@ -1,156 +0,0 @@

|

||||

package inactivity

|

||||

|

||||

import (

|

||||

"context"

|

||||

"testing"

|

||||

"time"

|

||||

|

||||

peerid "github.com/netbirdio/netbird/client/internal/peer/id"

|

||||

)

|

||||

|

||||

type MocPeer struct {

|

||||

}

|

||||

|

||||

func (m *MocPeer) ConnID() peerid.ConnID {

|

||||

return peerid.ConnID(m)

|

||||

}

|

||||

|

||||

func TestInactivityMonitor(t *testing.T) {

|

||||

tCtx, testTimeoutCancel := context.WithTimeout(context.Background(), time.Second*5)

|

||||

defer testTimeoutCancel()

|

||||

|

||||

p := &MocPeer{}

|

||||

im := NewInactivityMonitor(p.ConnID(), time.Second*2)

|

||||

|

||||

timeoutChan := make(chan peerid.ConnID)

|

||||

|

||||

exitChan := make(chan struct{})

|

||||

|

||||

go func() {

|

||||

defer close(exitChan)

|

||||

im.Start(tCtx, timeoutChan)

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-timeoutChan:

|

||||

case <-tCtx.Done():

|

||||

t.Fatal("timeout")

|

||||

}

|

||||

|

||||

select {

|

||||

case <-exitChan:

|

||||

case <-tCtx.Done():

|

||||

t.Fatal("timeout")

|

||||

}

|

||||

}

|

||||

|

||||

func TestReuseInactivityMonitor(t *testing.T) {

|

||||

p := &MocPeer{}

|

||||

im := NewInactivityMonitor(p.ConnID(), time.Second*2)

|

||||

|

||||

timeoutChan := make(chan peerid.ConnID)

|

||||

|

||||

for i := 2; i > 0; i-- {

|

||||

exitChan := make(chan struct{})

|

||||

|

||||

testTimeoutCtx, testTimeoutCancel := context.WithTimeout(context.Background(), time.Second*5)

|

||||

|

||||

go func() {

|

||||

defer close(exitChan)

|

||||

im.Start(testTimeoutCtx, timeoutChan)

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-timeoutChan:

|

||||

case <-testTimeoutCtx.Done():

|

||||

t.Fatal("timeout")

|

||||

}

|

||||

|

||||

select {

|

||||

case <-exitChan:

|

||||

case <-testTimeoutCtx.Done():

|

||||

t.Fatal("timeout")

|

||||

}

|

||||

testTimeoutCancel()

|

||||

}

|

||||

}

|

||||

|

||||

func TestStopInactivityMonitor(t *testing.T) {

|

||||

tCtx, testTimeoutCancel := context.WithTimeout(context.Background(), time.Second*5)

|

||||

defer testTimeoutCancel()

|

||||

|

||||

p := &MocPeer{}

|

||||

im := NewInactivityMonitor(p.ConnID(), DefaultInactivityThreshold)

|

||||

|

||||

timeoutChan := make(chan peerid.ConnID)

|

||||

|

||||

exitChan := make(chan struct{})

|

||||

|

||||

go func() {

|

||||

defer close(exitChan)

|

||||

im.Start(tCtx, timeoutChan)

|

||||

}()

|

||||

|

||||

go func() {

|

||||

time.Sleep(3 * time.Second)

|

||||

im.Stop()

|

||||

}()

|

||||

|

||||

select {

|

||||

case <-timeoutChan:

|

||||

t.Fatal("unexpected timeout")

|

||||

case <-exitChan:

|

||||

case <-tCtx.Done():

|

||||

t.Fatal("timeout")

|

||||

}

|

||||

}

|

||||

|

||||

func TestPauseInactivityMonitor(t *testing.T) {

|

||||

tCtx, testTimeoutCancel := context.WithTimeout(context.Background(), time.Second*10)

|

||||

defer testTimeoutCancel()

|

||||

|

||||

p := &MocPeer{}

|

||||

trashHold := time.Second * 3

|

||||

im := NewInactivityMonitor(p.ConnID(), trashHold)

|

||||

|

||||

ctx, cancel := context.WithCancel(context.Background())

|

||||

defer cancel()

|

||||

|

||||

timeoutChan := make(chan peerid.ConnID)

|

||||

|

||||

exitChan := make(chan struct{})

|

||||

|

||||

go func() {

|

||||

defer close(exitChan)

|

||||

im.Start(ctx, timeoutChan)

|

||||

}()

|

||||

|

||||

time.Sleep(1 * time.Second) // grant time to start the monitor

|

||||

im.PauseTimer()

|

||||

|

||||

// check that no timeout is received

|

||||

thresholdCtx, thresholdCancel := context.WithTimeout(context.Background(), trashHold+time.Second)

|

||||

defer thresholdCancel()

|

||||

select {

|

||||

case <-exitChan:

|

||||

t.Fatal("unexpected exit")

|

||||

case <-timeoutChan:

|

||||

t.Fatal("unexpected timeout")

|

||||

case <-thresholdCtx.Done():

|

||||

// test ok

|

||||

case <-tCtx.Done():

|

||||

t.Fatal("test timed out")

|

||||

}

|

||||

|

||||

// test reset timer

|

||||

im.ResetTimer()

|

||||

|

||||

select {

|

||||

case <-tCtx.Done():

|

||||

t.Fatal("test timed out")

|

||||

case <-exitChan:

|

||||

t.Fatal("unexpected exit")

|

||||

case <-timeoutChan:

|

||||

// expected timeout

|

||||

}

|

||||

}

|

||||

152

client/internal/lazyconn/inactivity/manager.go

Normal file

@@ -0,0 +1,152 @@

|

||||

package inactivity

|

||||

|

||||

import (

|

||||

"context"

|

||||

"fmt"

|

||||

"time"

|

||||

|

||||

log "github.com/sirupsen/logrus"

|

||||

|

||||

"github.com/netbirdio/netbird/client/internal/lazyconn"

|

||||

)

|

||||

|

||||

const (

|

||||

checkInterval = 1 * time.Minute

|

||||

|

||||

DefaultInactivityThreshold = 15 * time.Minute

|

||||

MinimumInactivityThreshold = 1 * time.Minute

|

||||

)

|

||||

|

||||

type WgInterface interface {

|

||||

LastActivities() map[string]time.Time

|

||||

}

|

||||

|

||||

type Manager struct {

|

||||

inactivePeersChan chan map[string]struct{}

|

||||

|

||||

iface WgInterface

|

||||

interestedPeers map[string]*lazyconn.PeerConfig

|

||||

inactivityThreshold time.Duration

|

||||

}

|

||||

|

||||

func NewManager(iface WgInterface, configuredThreshold *time.Duration) *Manager {

|

||||

inactivityThreshold, err := validateInactivityThreshold(configuredThreshold)

|

||||

if err != nil {

|

||||

inactivityThreshold = DefaultInactivityThreshold

|

||||

log.Warnf("invalid inactivity threshold configured: %v, using default: %v", err, DefaultInactivityThreshold)

|

||||

}

|

||||

|

||||

log.Infof("inactivity threshold configured: %v", inactivityThreshold)

|

||||

return &Manager{

|

||||

inactivePeersChan: make(chan map[string]struct{}, 1),

|

||||

iface: iface,

|

||||

interestedPeers: make(map[string]*lazyconn.PeerConfig),

|

||||

inactivityThreshold: inactivityThreshold,

|

||||

}

|

||||

}

|

||||

|

||||

func (m *Manager) InactivePeersChan() chan map[string]struct{} {

|

||||

if m == nil {

|

||||

// return a nil channel that blocks forever

|

||||

return nil

|

||||

}