Mirror of https://github.com/netbirdio/netbird.git (synced 2026-05-12 03:39:55 +00:00)

Compare commits: v0.30.0 ... fix/limit- (12 commits)

| Author | SHA1 | Date | |
|---|---|---|---|
| | 56af98b5b4 | | |
| | b2379175fe | | |
| | 09bdd271f1 | | |
| | 208a2b7169 | | |
| | 8284ae959c | | |
| | 6ce09bca16 | | |
| | b79c1d64cc | | |
| | b1eda43f4b | | |
| | d4ef84fe6e | | |
| | 44e8107383 | | |
| | 2c1f5e46d5 | | |
| | dbec24b520 | | |

2

.github/workflows/golang-test-darwin.yml

vendored


@@ -42,4 +42,4 @@ jobs:

|

|||||||

run: git --no-pager diff --exit-code

|

run: git --no-pager diff --exit-code

|

||||||

|

|

||||||

- name: Test

|

- name: Test

|

||||||

run: NETBIRD_STORE_ENGINE=${{ matrix.store }} go test -exec 'sudo --preserve-env=CI,NETBIRD_STORE_ENGINE' -timeout 5m -p 1 ./...

|

run: NETBIRD_STORE_ENGINE=${{ matrix.store }} CI=true go test -exec 'sudo --preserve-env=CI,NETBIRD_STORE_ENGINE' -timeout 5m -p 1 ./...

|

||||||

|

|||||||

4

.github/workflows/golang-test-linux.yml

vendored


@@ -16,7 +16,7 @@ jobs:

|

|||||||

matrix:

|

matrix:

|

||||||

arch: [ '386','amd64' ]

|

arch: [ '386','amd64' ]

|

||||||

store: [ 'sqlite', 'postgres']

|

store: [ 'sqlite', 'postgres']

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-22.04

|

||||||

steps:

|

steps:

|

||||||

- name: Install Go

|

- name: Install Go

|

||||||

uses: actions/setup-go@v5

|

uses: actions/setup-go@v5

|

||||||

@@ -49,7 +49,7 @@ jobs:

|

|||||||

run: git --no-pager diff --exit-code

|

run: git --no-pager diff --exit-code

|

||||||

|

|

||||||

- name: Test

|

- name: Test

|

||||||

run: CGO_ENABLED=1 GOARCH=${{ matrix.arch }} NETBIRD_STORE_ENGINE=${{ matrix.store }} go test -exec 'sudo --preserve-env=CI,NETBIRD_STORE_ENGINE' -timeout 6m -p 1 ./...

|

run: CGO_ENABLED=1 GOARCH=${{ matrix.arch }} NETBIRD_STORE_ENGINE=${{ matrix.store }} CI=true go test -exec 'sudo --preserve-env=CI,NETBIRD_STORE_ENGINE' -timeout 6m -p 1 ./...

|

||||||

|

|

||||||

test_client_on_docker:

|

test_client_on_docker:

|

||||||

runs-on: ubuntu-20.04

|

runs-on: ubuntu-20.04

|

||||||

|

|||||||

2

.github/workflows/release.yml

vendored


@@ -20,7 +20,7 @@ concurrency:

|

|||||||

|

|

||||||

jobs:

|

jobs:

|

||||||

release:

|

release:

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-22.04

|

||||||

env:

|

env:

|

||||||

flags: ""

|

flags: ""

|

||||||

steps:

|

steps:

|

||||||

|

|||||||

@@ -49,6 +49,8 @@

|

|||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

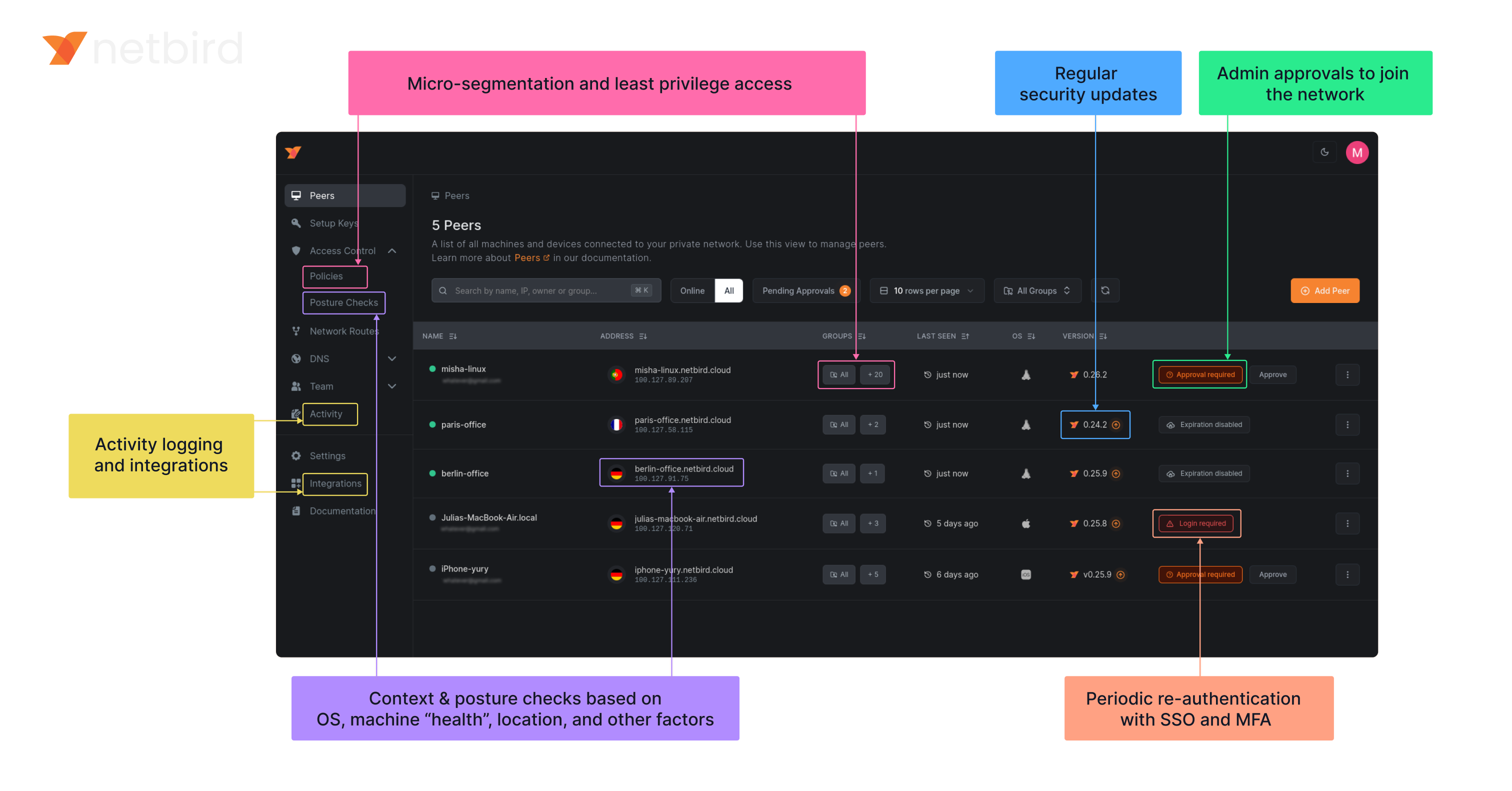

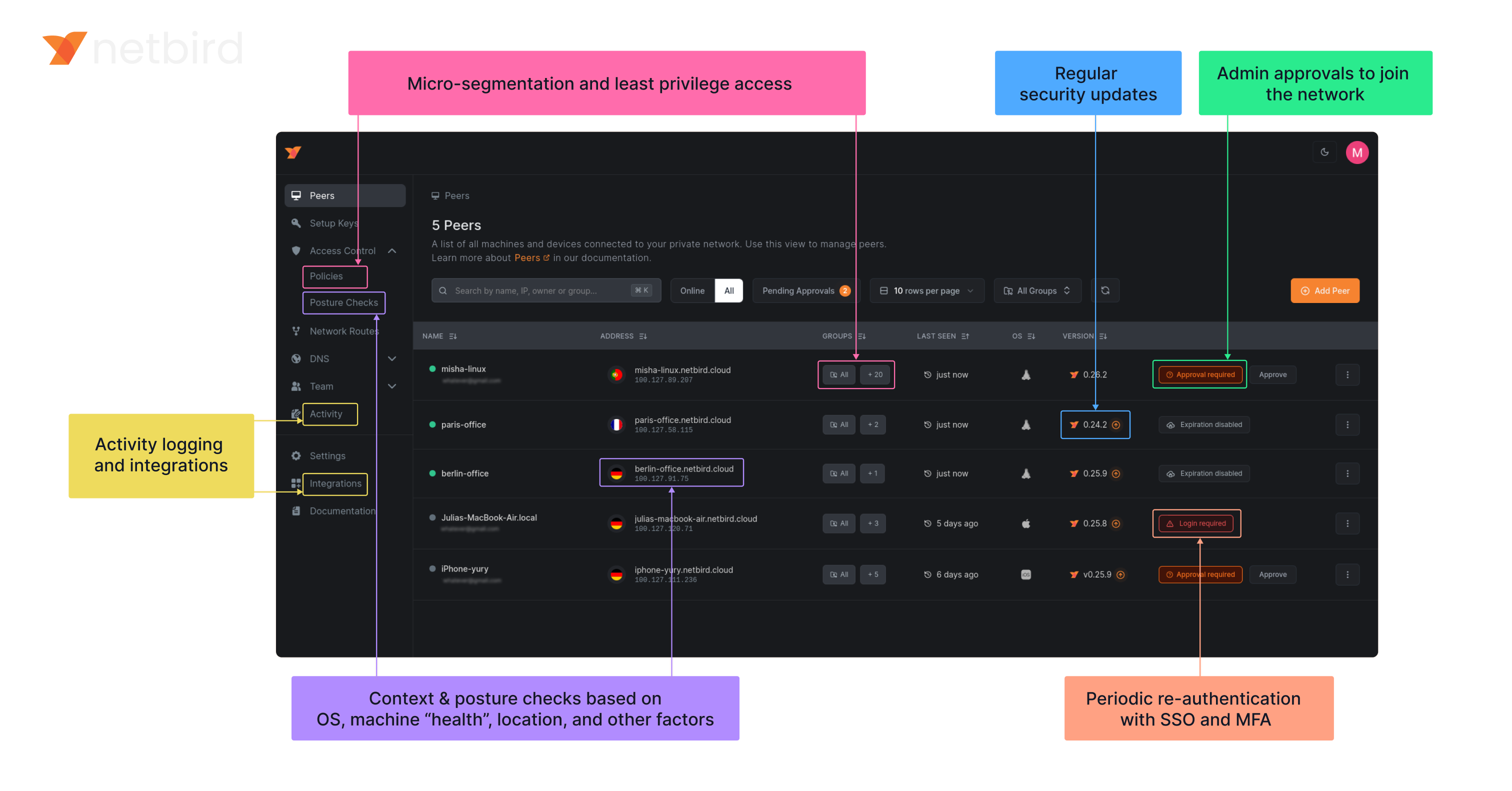

### NetBird on Lawrence Systems (Video)

|

||||||

|

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||||

|

|

||||||

### Key features

|

### Key features

|

||||||

|

|

||||||

@@ -62,6 +64,7 @@

|

|||||||

| | | <ul><li> - \[x] [Quantum-resistance with Rosenpass](https://netbird.io/knowledge-hub/the-first-quantum-resistant-mesh-vpn) </ul></li> | | <ul><li> - \[x] OpenWRT </ul></li> |

|

| | | <ul><li> - \[x] [Quantum-resistance with Rosenpass](https://netbird.io/knowledge-hub/the-first-quantum-resistant-mesh-vpn) </ul></li> | | <ul><li> - \[x] OpenWRT </ul></li> |

|

||||||

| | | <ui><li> - \[x] [Periodic re-authentication](https://docs.netbird.io/how-to/enforce-periodic-user-authentication)</ul></li> | | <ul><li> - \[x] [Serverless](https://docs.netbird.io/how-to/netbird-on-faas) </ul></li> |

|

| | | <ui><li> - \[x] [Periodic re-authentication](https://docs.netbird.io/how-to/enforce-periodic-user-authentication)</ul></li> | | <ul><li> - \[x] [Serverless](https://docs.netbird.io/how-to/netbird-on-faas) </ul></li> |

|

||||||

| | | | | <ul><li> - \[x] Docker </ul></li> |

|

| | | | | <ul><li> - \[x] Docker </ul></li> |

|

||||||

|

|

||||||

### Quickstart with NetBird Cloud

|

### Quickstart with NetBird Cloud

|

||||||

|

|

||||||

- Download and install NetBird at [https://app.netbird.io/install](https://app.netbird.io/install)

|

- Download and install NetBird at [https://app.netbird.io/install](https://app.netbird.io/install)

|

||||||

|

|||||||

@@ -38,7 +38,7 @@ func startTestingServices(t *testing.T) string {

|

|||||||

signalAddr := signalLis.Addr().String()

|

signalAddr := signalLis.Addr().String()

|

||||||

config.Signal.URI = signalAddr

|

config.Signal.URI = signalAddr

|

||||||

|

|

||||||

_, mgmLis := startManagement(t, config, "../testdata/store.sqlite")

|

_, mgmLis := startManagement(t, config, "../testdata/store.sql")

|

||||||

mgmAddr := mgmLis.Addr().String()

|

mgmAddr := mgmLis.Addr().String()

|

||||||

return mgmAddr

|

return mgmAddr

|

||||||

}

|

}

|

||||||

@@ -71,7 +71,7 @@ func startManagement(t *testing.T, config *mgmt.Config, testFile string) (*grpc.

|

|||||||

t.Fatal(err)

|

t.Fatal(err)

|

||||||

}

|

}

|

||||||

s := grpc.NewServer()

|

s := grpc.NewServer()

|

||||||

store, cleanUp, err := mgmt.NewTestStoreFromSqlite(context.Background(), testFile, t.TempDir())

|

store, cleanUp, err := mgmt.NewTestStoreFromSQL(context.Background(), testFile, t.TempDir())

|

||||||

if err != nil {

|

if err != nil {

|

||||||

t.Fatal(err)

|

t.Fatal(err)

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -11,6 +11,7 @@ import (

|

|||||||

log "github.com/sirupsen/logrus"

|

log "github.com/sirupsen/logrus"

|

||||||

|

|

||||||

firewall "github.com/netbirdio/netbird/client/firewall/manager"

|

firewall "github.com/netbirdio/netbird/client/firewall/manager"

|

||||||

|

nbnet "github.com/netbirdio/netbird/util/net"

|

||||||

)

|

)

|

||||||

|

|

||||||

const (

|

const (

|

||||||

@@ -21,13 +22,19 @@ const (

|

|||||||

chainNameOutputRules = "NETBIRD-ACL-OUTPUT"

|

chainNameOutputRules = "NETBIRD-ACL-OUTPUT"

|

||||||

)

|

)

|

||||||

|

|

||||||

|

type entry struct {

|

||||||

|

spec []string

|

||||||

|

position int

|

||||||

|

}

|

||||||

|

|

||||||

type aclManager struct {

|

type aclManager struct {

|

||||||

iptablesClient *iptables.IPTables

|

iptablesClient *iptables.IPTables

|

||||||

wgIface iFaceMapper

|

wgIface iFaceMapper

|

||||||

routingFwChainName string

|

routingFwChainName string

|

||||||

|

|

||||||

entries map[string][][]string

|

entries map[string][][]string

|

||||||

ipsetStore *ipsetStore

|

optionalEntries map[string][]entry

|

||||||

|

ipsetStore *ipsetStore

|

||||||

}

|

}

|

||||||

|

|

||||||

func newAclManager(iptablesClient *iptables.IPTables, wgIface iFaceMapper, routingFwChainName string) (*aclManager, error) {

|

func newAclManager(iptablesClient *iptables.IPTables, wgIface iFaceMapper, routingFwChainName string) (*aclManager, error) {

|

||||||

@@ -36,8 +43,9 @@ func newAclManager(iptablesClient *iptables.IPTables, wgIface iFaceMapper, routi

|

|||||||

wgIface: wgIface,

|

wgIface: wgIface,

|

||||||

routingFwChainName: routingFwChainName,

|

routingFwChainName: routingFwChainName,

|

||||||

|

|

||||||

entries: make(map[string][][]string),

|

entries: make(map[string][][]string),

|

||||||

ipsetStore: newIpsetStore(),

|

optionalEntries: make(map[string][]entry),

|

||||||

|

ipsetStore: newIpsetStore(),

|

||||||

}

|

}

|

||||||

|

|

||||||

err := ipset.Init()

|

err := ipset.Init()

|

||||||

@@ -46,6 +54,7 @@ func newAclManager(iptablesClient *iptables.IPTables, wgIface iFaceMapper, routi

|

|||||||

}

|

}

|

||||||

|

|

||||||

m.seedInitialEntries()

|

m.seedInitialEntries()

|

||||||

|

m.seedInitialOptionalEntries()

|

||||||

|

|

||||||

err = m.cleanChains()

|

err = m.cleanChains()

|

||||||

if err != nil {

|

if err != nil {

|

||||||

@@ -232,6 +241,19 @@ func (m *aclManager) cleanChains() error {

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

ok, err = m.iptablesClient.ChainExists("mangle", "PREROUTING")

|

||||||

|

if err != nil {

|

||||||

|

return fmt.Errorf("list chains: %w", err)

|

||||||

|

}

|

||||||

|

if ok {

|

||||||

|

for _, rule := range m.entries["PREROUTING"] {

|

||||||

|

err := m.iptablesClient.DeleteIfExists("mangle", "PREROUTING", rule...)

|

||||||

|

if err != nil {

|

||||||

|

log.Errorf("failed to delete rule: %v, %s", rule, err)

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

for _, ipsetName := range m.ipsetStore.ipsetNames() {

|

for _, ipsetName := range m.ipsetStore.ipsetNames() {

|

||||||

if err := ipset.Flush(ipsetName); err != nil {

|

if err := ipset.Flush(ipsetName); err != nil {

|

||||||

log.Errorf("flush ipset %q during reset: %v", ipsetName, err)

|

log.Errorf("flush ipset %q during reset: %v", ipsetName, err)

|

||||||

@@ -267,6 +289,17 @@ func (m *aclManager) createDefaultChains() error {

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

for chainName, entries := range m.optionalEntries {

|

||||||

|

for _, entry := range entries {

|

||||||

|

if err := m.iptablesClient.InsertUnique(tableName, chainName, entry.position, entry.spec...); err != nil {

|

||||||

|

log.Errorf("failed to insert optional entry %v: %v", entry.spec, err)

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

m.entries[chainName] = append(m.entries[chainName], entry.spec)

|

||||||

|

}

|

||||||

|

}

|

||||||

|

clear(m.optionalEntries)

|

||||||

|

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

@@ -295,6 +328,22 @@ func (m *aclManager) seedInitialEntries() {

|

|||||||

m.appendToEntries("FORWARD", append([]string{"-o", m.wgIface.Name()}, established...))

|

m.appendToEntries("FORWARD", append([]string{"-o", m.wgIface.Name()}, established...))

|

||||||

}

|

}

|

||||||

|

|

||||||

|

func (m *aclManager) seedInitialOptionalEntries() {

|

||||||

|

m.optionalEntries["FORWARD"] = []entry{

|

||||||

|

{

|

||||||

|

spec: []string{"-m", "mark", "--mark", fmt.Sprintf("%#x", nbnet.PreroutingFwmark), "-j", chainNameInputRules},

|

||||||

|

position: 2,

|

||||||

|

},

|

||||||

|

}

|

||||||

|

|

||||||

|

m.optionalEntries["PREROUTING"] = []entry{

|

||||||

|

{

|

||||||

|

spec: []string{"-t", "mangle", "-i", m.wgIface.Name(), "-m", "addrtype", "--dst-type", "LOCAL", "-j", "MARK", "--set-mark", fmt.Sprintf("%#x", nbnet.PreroutingFwmark)},

|

||||||

|

position: 1,

|

||||||

|

},

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

func (m *aclManager) appendToEntries(chainName string, spec []string) {

|

func (m *aclManager) appendToEntries(chainName string, spec []string) {

|

||||||

m.entries[chainName] = append(m.entries[chainName], spec)

|

m.entries[chainName] = append(m.entries[chainName], spec)

|

||||||

}

|

}

|

||||||

|

|||||||
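The two optional entries seeded above work as a pair: the `PREROUTING` rule marks packets that arrive on the WireGuard interface for a local address, and the `FORWARD` rule sends packets carrying that mark to the input ACL chain. Below is a minimal sketch of how such rule specs could be assembled. The mark value `0x1BD10`, the interface name `wt0`, the chain name `NETBIRD-ACL-INPUT`, and the helpers `markLocalSpec` and `jumpOnMarkSpec` are assumptions for illustration; the real value of `nbnet.PreroutingFwmark` is not shown in this diff.

```go
package main

import (
	"fmt"
	"strings"
)

// entry mirrors the struct added in the diff: an iptables rule spec plus
// the 1-based position at which it should be inserted into its chain.
type entry struct {
	spec     []string
	position int
}

// hypotheticalFwmark is an assumed placeholder, not the real nbnet.PreroutingFwmark.
const hypotheticalFwmark uint32 = 0x1BD10

// markLocalSpec builds a rule spec that marks packets arriving on iface
// whose destination address is local to the host.
func markLocalSpec(iface string, mark uint32) entry {
	return entry{
		spec: []string{
			"-i", iface,
			"-m", "addrtype", "--dst-type", "LOCAL",
			"-j", "MARK", "--set-mark", fmt.Sprintf("%#x", mark),
		},
		position: 1,
	}
}

// jumpOnMarkSpec builds a rule spec that jumps to chain for packets carrying mark.
func jumpOnMarkSpec(mark uint32, chain string) entry {
	return entry{
		spec:     []string{"-m", "mark", "--mark", fmt.Sprintf("%#x", mark), "-j", chain},
		position: 2,
	}
}

func main() {
	entries := []entry{
		markLocalSpec("wt0", hypotheticalFwmark),
		jumpOnMarkSpec(hypotheticalFwmark, "NETBIRD-ACL-INPUT"),
	}
	for _, e := range entries {
		fmt.Printf("position %d: %s\n", e.position, strings.Join(e.spec, " "))
	}
}
```

The `%#x` verb renders the mark as lowercase hexadecimal with a `0x` prefix, which is the form `iptables` accepts for `--mark` and `--set-mark`. The sketch leaves the table out of the spec; a caller would pass it separately.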

@@ -78,7 +78,7 @@ func (m *Manager) AddPeerFiltering(

|

|||||||

}

|

}

|

||||||

|

|

||||||

func (m *Manager) AddRouteFiltering(

|

func (m *Manager) AddRouteFiltering(

|

||||||

sources [] netip.Prefix,

|

sources []netip.Prefix,

|

||||||

destination netip.Prefix,

|

destination netip.Prefix,

|

||||||

proto firewall.Protocol,

|

proto firewall.Protocol,

|

||||||

sPort *firewall.Port,

|

sPort *firewall.Port,

|

||||||

|

|||||||

@@ -305,10 +305,7 @@ func (r *router) cleanUpDefaultForwardRules() error {

|

|||||||

|

|

||||||

log.Debug("flushing routing related tables")

|

log.Debug("flushing routing related tables")

|

||||||

for _, chain := range []string{chainRTFWD, chainRTNAT} {

|

for _, chain := range []string{chainRTFWD, chainRTNAT} {

|

||||||

table := tableFilter

|

table := r.getTableForChain(chain)

|

||||||

if chain == chainRTNAT {

|

|

||||||

table = tableNat

|

|

||||||

}

|

|

||||||

|

|

||||||

ok, err := r.iptablesClient.ChainExists(table, chain)

|

ok, err := r.iptablesClient.ChainExists(table, chain)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

@@ -329,15 +326,19 @@ func (r *router) cleanUpDefaultForwardRules() error {

|

|||||||

func (r *router) createContainers() error {

|

func (r *router) createContainers() error {

|

||||||

for _, chain := range []string{chainRTFWD, chainRTNAT} {

|

for _, chain := range []string{chainRTFWD, chainRTNAT} {

|

||||||

if err := r.createAndSetupChain(chain); err != nil {

|

if err := r.createAndSetupChain(chain); err != nil {

|

||||||

return fmt.Errorf("create chain %s: %v", chain, err)

|

return fmt.Errorf("create chain %s: %w", chain, err)

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

if err := r.insertEstablishedRule(chainRTFWD); err != nil {

|

if err := r.insertEstablishedRule(chainRTFWD); err != nil {

|

||||||

return fmt.Errorf("insert established rule: %v", err)

|

return fmt.Errorf("insert established rule: %w", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

return r.addJumpRules()

|

if err := r.addJumpRules(); err != nil {

|

||||||

|

return fmt.Errorf("add jump rules: %w", err)

|

||||||

|

}

|

||||||

|

|

||||||

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

func (r *router) createAndSetupChain(chain string) error {

|

func (r *router) createAndSetupChain(chain string) error {

|

||||||

|

|||||||

@@ -132,7 +132,7 @@ func SetLegacyManagement(router LegacyManager, isLegacy bool) error {

|

|||||||

// GenerateSetName generates a unique name for an ipset based on the given sources.

|

// GenerateSetName generates a unique name for an ipset based on the given sources.

|

||||||

func GenerateSetName(sources []netip.Prefix) string {

|

func GenerateSetName(sources []netip.Prefix) string {

|

||||||

// sort for consistent naming

|

// sort for consistent naming

|

||||||

sortPrefixes(sources)

|

SortPrefixes(sources)

|

||||||

|

|

||||||

var sourcesStr strings.Builder

|

var sourcesStr strings.Builder

|

||||||

for _, src := range sources {

|

for _, src := range sources {

|

||||||

@@ -170,9 +170,9 @@ func MergeIPRanges(prefixes []netip.Prefix) []netip.Prefix {

|

|||||||

return merged

|

return merged

|

||||||

}

|

}

|

||||||

|

|

||||||

// sortPrefixes sorts the given slice of netip.Prefix in place.

|

// SortPrefixes sorts the given slice of netip.Prefix in place.

|

||||||

// It sorts first by IP address, then by prefix length (most specific to least specific).

|

// It sorts first by IP address, then by prefix length (most specific to least specific).

|

||||||

func sortPrefixes(prefixes []netip.Prefix) {

|

func SortPrefixes(prefixes []netip.Prefix) {

|

||||||

sort.Slice(prefixes, func(i, j int) bool {

|

sort.Slice(prefixes, func(i, j int) bool {

|

||||||

addrCmp := prefixes[i].Addr().Compare(prefixes[j].Addr())

|

addrCmp := prefixes[i].Addr().Compare(prefixes[j].Addr())

|

||||||

if addrCmp != 0 {

|

if addrCmp != 0 {

|

||||||

|

|||||||

@@ -11,12 +11,14 @@ import (

|

|||||||

"time"

|

"time"

|

||||||

|

|

||||||

"github.com/google/nftables"

|

"github.com/google/nftables"

|

||||||

|

"github.com/google/nftables/binaryutil"

|

||||||

"github.com/google/nftables/expr"

|

"github.com/google/nftables/expr"

|

||||||

log "github.com/sirupsen/logrus"

|

log "github.com/sirupsen/logrus"

|

||||||

"golang.org/x/sys/unix"

|

"golang.org/x/sys/unix"

|

||||||

|

|

||||||

firewall "github.com/netbirdio/netbird/client/firewall/manager"

|

firewall "github.com/netbirdio/netbird/client/firewall/manager"

|

||||||

"github.com/netbirdio/netbird/client/iface"

|

"github.com/netbirdio/netbird/client/iface"

|

||||||

|

nbnet "github.com/netbirdio/netbird/util/net"

|

||||||

)

|

)

|

||||||

|

|

||||||

const (

|

const (

|

||||||

@@ -29,6 +31,7 @@ const (

|

|||||||

chainNameInputFilter = "netbird-acl-input-filter"

|

chainNameInputFilter = "netbird-acl-input-filter"

|

||||||

chainNameOutputFilter = "netbird-acl-output-filter"

|

chainNameOutputFilter = "netbird-acl-output-filter"

|

||||||

chainNameForwardFilter = "netbird-acl-forward-filter"

|

chainNameForwardFilter = "netbird-acl-forward-filter"

|

||||||

|

chainNamePrerouting = "netbird-rt-prerouting"

|

||||||

|

|

||||||

allowNetbirdInputRuleID = "allow Netbird incoming traffic"

|

allowNetbirdInputRuleID = "allow Netbird incoming traffic"

|

||||||

)

|

)

|

||||||

@@ -40,15 +43,14 @@ var (

|

|||||||

)

|

)

|

||||||

|

|

||||||

type AclManager struct {

|

type AclManager struct {

|

||||||

rConn *nftables.Conn

|

rConn *nftables.Conn

|

||||||

sConn *nftables.Conn

|

sConn *nftables.Conn

|

||||||

wgIface iFaceMapper

|

wgIface iFaceMapper

|

||||||

routeingFwChainName string

|

routingFwChainName string

|

||||||

|

|

||||||

workTable *nftables.Table

|

workTable *nftables.Table

|

||||||

chainInputRules *nftables.Chain

|

chainInputRules *nftables.Chain

|

||||||

chainOutputRules *nftables.Chain

|

chainOutputRules *nftables.Chain

|

||||||

chainFwFilter *nftables.Chain

|

|

||||||

|

|

||||||

ipsetStore *ipsetStore

|

ipsetStore *ipsetStore

|

||||||

rules map[string]*Rule

|

rules map[string]*Rule

|

||||||

@@ -61,7 +63,7 @@ type iFaceMapper interface {

|

|||||||

IsUserspaceBind() bool

|

IsUserspaceBind() bool

|

||||||

}

|

}

|

||||||

|

|

||||||

func newAclManager(table *nftables.Table, wgIface iFaceMapper, routeingFwChainName string) (*AclManager, error) {

|

func newAclManager(table *nftables.Table, wgIface iFaceMapper, routingFwChainName string) (*AclManager, error) {

|

||||||

// sConn is used for creating sets and adding/removing elements from them

|

// sConn is used for creating sets and adding/removing elements from them

|

||||||

// it's differ then rConn (which does create new conn for each flush operation)

|

// it's differ then rConn (which does create new conn for each flush operation)

|

||||||

// and is permanent. Using same connection for both type of operations

|

// and is permanent. Using same connection for both type of operations

|

||||||

@@ -72,11 +74,11 @@ func newAclManager(table *nftables.Table, wgIface iFaceMapper, routeingFwChainNa

|

|||||||

}

|

}

|

||||||

|

|

||||||

m := &AclManager{

|

m := &AclManager{

|

||||||

rConn: &nftables.Conn{},

|

rConn: &nftables.Conn{},

|

||||||

sConn: sConn,

|

sConn: sConn,

|

||||||

wgIface: wgIface,

|

wgIface: wgIface,

|

||||||

workTable: table,

|

workTable: table,

|

||||||

routeingFwChainName: routeingFwChainName,

|

routingFwChainName: routingFwChainName,

|

||||||

|

|

||||||

ipsetStore: newIpsetStore(),

|

ipsetStore: newIpsetStore(),

|

||||||

rules: make(map[string]*Rule),

|

rules: make(map[string]*Rule),

|

||||||

@@ -462,9 +464,9 @@ func (m *AclManager) createDefaultChains() (err error) {

|

|||||||

}

|

}

|

||||||

|

|

||||||

// netbird-acl-forward-filter

|

// netbird-acl-forward-filter

|

||||||

m.chainFwFilter = m.createFilterChainWithHook(chainNameForwardFilter, nftables.ChainHookForward)

|

chainFwFilter := m.createFilterChainWithHook(chainNameForwardFilter, nftables.ChainHookForward)

|

||||||

m.addJumpRulesToRtForward() // to netbird-rt-fwd

|

m.addJumpRulesToRtForward(chainFwFilter) // to netbird-rt-fwd

|

||||||

m.addDropExpressions(m.chainFwFilter, expr.MetaKeyIIFNAME)

|

m.addDropExpressions(chainFwFilter, expr.MetaKeyIIFNAME)

|

||||||

|

|

||||||

err = m.rConn.Flush()

|

err = m.rConn.Flush()

|

||||||

if err != nil {

|

if err != nil {

|

||||||

@@ -472,10 +474,96 @@ func (m *AclManager) createDefaultChains() (err error) {

|

|||||||

return fmt.Errorf(flushError, err)

|

return fmt.Errorf(flushError, err)

|

||||||

}

|

}

|

||||||

|

|

||||||

|

if err := m.allowRedirectedTraffic(chainFwFilter); err != nil {

|

||||||

|

log.Errorf("failed to allow redirected traffic: %s", err)

|

||||||

|

}

|

||||||

|

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

func (m *AclManager) addJumpRulesToRtForward() {

|

// Makes redirected traffic originally destined for the host itself (now subject to the forward filter)

|

||||||

|

// go through the input filter as well. This will enable e.g. Docker services to keep working by accessing the

|

||||||

|

// netbird peer IP.

|

||||||

|

func (m *AclManager) allowRedirectedTraffic(chainFwFilter *nftables.Chain) error {

|

||||||

|

preroutingChain := m.rConn.AddChain(&nftables.Chain{

|

||||||

|

Name: chainNamePrerouting,

|

||||||

|

Table: m.workTable,

|

||||||

|

Type: nftables.ChainTypeFilter,

|

||||||

|

Hooknum: nftables.ChainHookPrerouting,

|

||||||

|

Priority: nftables.ChainPriorityMangle,

|

||||||

|

})

|

||||||

|

|

||||||

|

m.addPreroutingRule(preroutingChain)

|

||||||

|

|

||||||

|

m.addFwmarkToForward(chainFwFilter)

|

||||||

|

|

||||||

|

if err := m.rConn.Flush(); err != nil {

|

||||||

|

return fmt.Errorf(flushError, err)

|

||||||

|

}

|

||||||

|

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

func (m *AclManager) addPreroutingRule(preroutingChain *nftables.Chain) {

|

||||||

|

m.rConn.AddRule(&nftables.Rule{

|

||||||

|

Table: m.workTable,

|

||||||

|

Chain: preroutingChain,

|

||||||

|

Exprs: []expr.Any{

|

||||||

|

&expr.Meta{

|

||||||

|

Key: expr.MetaKeyIIFNAME,

|

||||||

|

Register: 1,

|

||||||

|

},

|

||||||

|

&expr.Cmp{

|

||||||

|

Op: expr.CmpOpEq,

|

||||||

|

Register: 1,

|

||||||

|

Data: ifname(m.wgIface.Name()),

|

||||||

|

},

|

||||||

|

&expr.Fib{

|

||||||

|

Register: 1,

|

||||||

|

ResultADDRTYPE: true,

|

||||||

|

FlagDADDR: true,

|

||||||

|

},

|

||||||

|

&expr.Cmp{

|

||||||

|

Op: expr.CmpOpEq,

|

||||||

|

Register: 1,

|

||||||

|

Data: binaryutil.NativeEndian.PutUint32(unix.RTN_LOCAL),

|

||||||

|

},

|

||||||

|

&expr.Immediate{

|

||||||

|

Register: 1,

|

||||||

|

Data: binaryutil.NativeEndian.PutUint32(nbnet.PreroutingFwmark),

|

||||||

|

},

|

||||||

|

&expr.Meta{

|

||||||

|

Key: expr.MetaKeyMARK,

|

||||||

|

Register: 1,

|

||||||

|

SourceRegister: true,

|

||||||

|

},

|

||||||

|

},

|

||||||

|

})

|

||||||

|

}

|

||||||

|

|

||||||

|

func (m *AclManager) addFwmarkToForward(chainFwFilter *nftables.Chain) {

|

||||||

|

m.rConn.InsertRule(&nftables.Rule{

|

||||||

|

Table: m.workTable,

|

||||||

|

Chain: chainFwFilter,

|

||||||

|

Exprs: []expr.Any{

|

||||||

|

&expr.Meta{

|

||||||

|

Key: expr.MetaKeyMARK,

|

||||||

|

Register: 1,

|

||||||

|

},

|

||||||

|

&expr.Cmp{

|

||||||

|

Op: expr.CmpOpEq,

|

||||||

|

Register: 1,

|

||||||

|

Data: binaryutil.NativeEndian.PutUint32(nbnet.PreroutingFwmark),

|

||||||

|

},

|

||||||

|

&expr.Verdict{

|

||||||

|

Kind: expr.VerdictJump,

|

||||||

|

Chain: m.chainInputRules.Name,

|

||||||

|

},

|

||||||

|

},

|

||||||

|

})

|

||||||

|

}

|

||||||

|

|

||||||

|

func (m *AclManager) addJumpRulesToRtForward(chainFwFilter *nftables.Chain) {

|

||||||

expressions := []expr.Any{

|

expressions := []expr.Any{

|

||||||

&expr.Meta{Key: expr.MetaKeyIIFNAME, Register: 1},

|

&expr.Meta{Key: expr.MetaKeyIIFNAME, Register: 1},

|

||||||

&expr.Cmp{

|

&expr.Cmp{

|

||||||

@@ -485,13 +573,13 @@ func (m *AclManager) addJumpRulesToRtForward() {

|

|||||||

},

|

},

|

||||||

&expr.Verdict{

|

&expr.Verdict{

|

||||||

Kind: expr.VerdictJump,

|

Kind: expr.VerdictJump,

|

||||||

Chain: m.routeingFwChainName,

|

Chain: m.routingFwChainName,

|

||||||

},

|

},

|

||||||

}

|

}

|

||||||

|

|

||||||

_ = m.rConn.AddRule(&nftables.Rule{

|

_ = m.rConn.AddRule(&nftables.Rule{

|

||||||

Table: m.workTable,

|

Table: m.workTable,

|

||||||

Chain: m.chainFwFilter,

|

Chain: chainFwFilter,

|

||||||

Exprs: expressions,

|

Exprs: expressions,

|

||||||

})

|

})

|

||||||

}

|

}

|

||||||

@@ -509,7 +597,7 @@ func (m *AclManager) createChain(name string) *nftables.Chain {

|

|||||||

return chain

|

return chain

|

||||||

}

|

}

|

||||||

|

|

||||||

func (m *AclManager) createFilterChainWithHook(name string, hookNum nftables.ChainHook) *nftables.Chain {

|

func (m *AclManager) createFilterChainWithHook(name string, hookNum *nftables.ChainHook) *nftables.Chain {

|

||||||

polAccept := nftables.ChainPolicyAccept

|

polAccept := nftables.ChainPolicyAccept

|

||||||

chain := &nftables.Chain{

|

chain := &nftables.Chain{

|

||||||

Name: name,

|

Name: name,

|

||||||

|

|||||||

@@ -10,6 +10,7 @@ import (

|

|||||||

"net/netip"

|

"net/netip"

|

||||||

"strings"

|

"strings"

|

||||||

|

|

||||||

|

"github.com/davecgh/go-spew/spew"

|

||||||

"github.com/google/nftables"

|

"github.com/google/nftables"

|

||||||

"github.com/google/nftables/binaryutil"

|

"github.com/google/nftables/binaryutil"

|

||||||

"github.com/google/nftables/expr"

|

"github.com/google/nftables/expr"

|

||||||

@@ -24,7 +25,7 @@ import (

|

|||||||

|

|

||||||

const (

|

const (

|

||||||

chainNameRoutingFw = "netbird-rt-fwd"

|

chainNameRoutingFw = "netbird-rt-fwd"

|

||||||

chainNameRoutingNat = "netbird-rt-nat"

|

chainNameRoutingNat = "netbird-rt-postrouting"

|

||||||

chainNameForward = "FORWARD"

|

chainNameForward = "FORWARD"

|

||||||

|

|

||||||

userDataAcceptForwardRuleIif = "frwacceptiif"

|

userDataAcceptForwardRuleIif = "frwacceptiif"

|

||||||

@@ -149,7 +150,6 @@ func (r *router) loadFilterTable() (*nftables.Table, error) {

|

|||||||

}

|

}

|

||||||

|

|

||||||

func (r *router) createContainers() error {

|

func (r *router) createContainers() error {

|

||||||

|

|

||||||

r.chains[chainNameRoutingFw] = r.conn.AddChain(&nftables.Chain{

|

r.chains[chainNameRoutingFw] = r.conn.AddChain(&nftables.Chain{

|

||||||

Name: chainNameRoutingFw,

|

Name: chainNameRoutingFw,

|

||||||

Table: r.workTable,

|

Table: r.workTable,

|

||||||

@@ -157,25 +157,26 @@ func (r *router) createContainers() error {

|

|||||||

|

|

||||||

insertReturnTrafficRule(r.conn, r.workTable, r.chains[chainNameRoutingFw])

|

insertReturnTrafficRule(r.conn, r.workTable, r.chains[chainNameRoutingFw])

|

||||||

|

|

||||||

|

prio := *nftables.ChainPriorityNATSource - 1

|

||||||

|

|

||||||

r.chains[chainNameRoutingNat] = r.conn.AddChain(&nftables.Chain{

|

r.chains[chainNameRoutingNat] = r.conn.AddChain(&nftables.Chain{

|

||||||

Name: chainNameRoutingNat,

|

Name: chainNameRoutingNat,

|

||||||

Table: r.workTable,

|

Table: r.workTable,

|

||||||

Hooknum: nftables.ChainHookPostrouting,

|

Hooknum: nftables.ChainHookPostrouting,

|

||||||

Priority: nftables.ChainPriorityNATSource - 1,

|

Priority: &prio,

|

||||||

Type: nftables.ChainTypeNAT,

|

Type: nftables.ChainTypeNAT,

|

||||||

})

|

})

|

||||||

|

|

||||||

r.acceptForwardRules()

|

r.acceptForwardRules()

|

||||||

|

|

||||||

err := r.refreshRulesMap()

|

if err := r.refreshRulesMap(); err != nil {

|

||||||

if err != nil {

|

|

||||||

log.Errorf("failed to clean up rules from FORWARD chain: %s", err)

|

log.Errorf("failed to clean up rules from FORWARD chain: %s", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

err = r.conn.Flush()

|

if err := r.conn.Flush(); err != nil {

|

||||||

if err != nil {

|

|

||||||

return fmt.Errorf("nftables: unable to initialize table: %v", err)

|

return fmt.Errorf("nftables: unable to initialize table: %v", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||
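The `createContainers` hunk above stops computing the chain priority inline and instead dereferences `nftables.ChainPriorityNATSource`, subtracts one, and passes the address of the result. That pattern fits a library in which priority constants are pointers. The sketch below reproduces the pattern with local stand-in types so that it runs without the library; `ChainPriority`, `ChainPriorityRef`, `Chain`, and the chain name are stand-ins defined here, not imports from `github.com/google/nftables`.

```go
package main

import "fmt"

// ChainPriority is a local stand-in for the library's priority type.
type ChainPriority int32

// ChainPriorityRef returns a pointer to a copy of p.
func ChainPriorityRef(p ChainPriority) *ChainPriority { return &p }

// ChainPriorityNATSource uses 100, the netfilter source-NAT priority.
var ChainPriorityNATSource = ChainPriorityRef(100)

// Chain is a reduced stand-in holding only the fields this sketch needs.
type Chain struct {
	Name     string
	Priority *ChainPriority
}

func main() {
	// Dereference first so the shared constant is never modified,
	// then take the address of the local copy.
	prio := *ChainPriorityNATSource - 1

	c := Chain{Name: "example-postrouting", Priority: &prio}
	fmt.Println(*c.Priority, *ChainPriorityNATSource) // 99 100
}
```

Copying the value into a local variable before adjusting it keeps the package-level priority unchanged for every other chain that refers to it.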

@@ -188,6 +189,7 @@ func (r *router) AddRouteFiltering(

|

|||||||

dPort *firewall.Port,

|

dPort *firewall.Port,

|

||||||

action firewall.Action,

|

action firewall.Action,

|

||||||

) (firewall.Rule, error) {

|

) (firewall.Rule, error) {

|

||||||

|

|

||||||

ruleKey := id.GenerateRouteRuleKey(sources, destination, proto, sPort, dPort, action)

|

ruleKey := id.GenerateRouteRuleKey(sources, destination, proto, sPort, dPort, action)

|

||||||

if _, ok := r.rules[string(ruleKey)]; ok {

|

if _, ok := r.rules[string(ruleKey)]; ok {

|

||||||

return ruleKey, nil

|

return ruleKey, nil

|

||||||

@@ -248,9 +250,18 @@ func (r *router) AddRouteFiltering(

|

|||||||

UserData: []byte(ruleKey),

|

UserData: []byte(ruleKey),

|

||||||

}

|

}

|

||||||

|

|

||||||

r.rules[string(ruleKey)] = r.conn.AddRule(rule)

|

rule = r.conn.AddRule(rule)

|

||||||

|

|

||||||

return ruleKey, r.conn.Flush()

|

log.Tracef("Adding route rule %s", spew.Sdump(rule))

|

||||||

|

if err := r.conn.Flush(); err != nil {

|

||||||

|

return nil, fmt.Errorf(flushError, err)

|

||||||

|

}

|

||||||

|

|

||||||

|

r.rules[string(ruleKey)] = rule

|

||||||

|

|

||||||

|

log.Debugf("nftables: added route rule: sources=%v, destination=%v, proto=%v, sPort=%v, dPort=%v, action=%v", sources, destination, proto, sPort, dPort, action)

|

||||||

|

|

||||||

|

return ruleKey, nil

|

||||||

}

|

}

|

||||||

|

|

||||||

func (r *router) getIpSetExprs(sources []netip.Prefix, exprs []expr.Any) ([]expr.Any, error) {

|

func (r *router) getIpSetExprs(sources []netip.Prefix, exprs []expr.Any) ([]expr.Any, error) {

|

||||||

@@ -288,6 +299,10 @@ func (r *router) DeleteRouteRule(rule firewall.Rule) error {

|

|||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

|

if nftRule.Handle == 0 {

|

||||||

|

return fmt.Errorf("route rule %s has no handle", ruleKey)

|

||||||

|

}

|

||||||

|

|

||||||

setName := r.findSetNameInRule(nftRule)

|

setName := r.findSetNameInRule(nftRule)

|

||||||

|

|

||||||

if err := r.deleteNftRule(nftRule, ruleKey); err != nil {

|

if err := r.deleteNftRule(nftRule, ruleKey); err != nil {

|

||||||

@@ -658,7 +673,7 @@ func (r *router) RemoveNatRule(pair firewall.RouterPair) error {

|

|||||||

return fmt.Errorf("nftables: received error while applying rule removal for %s: %v", pair.Destination, err)

|

return fmt.Errorf("nftables: received error while applying rule removal for %s: %v", pair.Destination, err)

|

||||||

}

|

}

|

||||||

|

|

||||||

log.Debugf("nftables: removed rules for %s", pair.Destination)

|

log.Debugf("nftables: removed nat rules for %s", pair.Destination)

|

||||||

return nil

|

return nil

|

||||||

}

|

}

|

||||||

|

|

||||||

|

|||||||

@@ -314,6 +314,10 @@ func TestRouter_AddRouteFiltering(t *testing.T) {

|

|||||||

ruleKey, err := r.AddRouteFiltering(tt.sources, tt.destination, tt.proto, tt.sPort, tt.dPort, tt.action)

|

ruleKey, err := r.AddRouteFiltering(tt.sources, tt.destination, tt.proto, tt.sPort, tt.dPort, tt.action)

|

||||||

require.NoError(t, err, "AddRouteFiltering failed")

|

require.NoError(t, err, "AddRouteFiltering failed")

|

||||||

|

|

||||||

|

t.Cleanup(func() {

|

||||||

|

require.NoError(t, r.DeleteRouteRule(ruleKey), "Failed to delete rule")

|

||||||

|

})

|

||||||

|

|

||||||

// Check if the rule is in the internal map

|

// Check if the rule is in the internal map

|

||||||

rule, ok := r.rules[ruleKey.GetRuleID()]

|

rule, ok := r.rules[ruleKey.GetRuleID()]

|

||||||

assert.True(t, ok, "Rule not found in internal map")

|

assert.True(t, ok, "Rule not found in internal map")

|

||||||

@@ -346,10 +350,6 @@ func TestRouter_AddRouteFiltering(t *testing.T) {

|

|||||||

|

|

||||||

// Verify actual nftables rule content

|

// Verify actual nftables rule content

|

||||||

verifyRule(t, nftRule, tt.sources, tt.destination, tt.proto, tt.sPort, tt.dPort, tt.direction, tt.action, tt.expectSet)

|

verifyRule(t, nftRule, tt.sources, tt.destination, tt.proto, tt.sPort, tt.dPort, tt.direction, tt.action, tt.expectSet)

|

||||||

|

|

||||||

// Clean up

|

|

||||||

err = r.DeleteRouteRule(ruleKey)

|

|

||||||

require.NoError(t, err, "Failed to delete rule")

|

|

||||||

})

|

})

|

||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -1,8 +1,11 @@

|

|||||||

package id

|

package id

|

||||||

|

|

||||||

import (

|

import (

|

||||||

|

"crypto/sha256"

|

||||||

|

"encoding/hex"

|

||||||

"fmt"

|

"fmt"

|

||||||

"net/netip"

|

"net/netip"

|

||||||

|

"strconv"

|

||||||

|

|

||||||

"github.com/netbirdio/netbird/client/firewall/manager"

|

"github.com/netbirdio/netbird/client/firewall/manager"

|

||||||

)

|

)

|

||||||

@@ -21,5 +24,41 @@ func GenerateRouteRuleKey(

|

|||||||

dPort *manager.Port,

|

dPort *manager.Port,

|

||||||

action manager.Action,

|

action manager.Action,

|

||||||

) RuleID {

|

) RuleID {

|

||||||

return RuleID(fmt.Sprintf("%s-%s-%s-%s-%s-%d", sources, destination, proto, sPort, dPort, action))

|

manager.SortPrefixes(sources)

|

||||||

|

|

||||||

|

h := sha256.New()

|

||||||

|

|

||||||

|

// Write all fields to the hasher, with delimiters

|

||||||

|

h.Write([]byte("sources:"))

|

||||||

|

for _, src := range sources {

|

||||||

|

h.Write([]byte(src.String()))

|

||||||

|

h.Write([]byte(","))

|

||||||

|

}

|

||||||

|

|

||||||

|

h.Write([]byte("destination:"))

|

||||||

|

h.Write([]byte(destination.String()))

|

||||||

|

|

||||||

|

h.Write([]byte("proto:"))

|

||||||

|

h.Write([]byte(proto))

|

||||||

|

|

||||||

|

h.Write([]byte("sPort:"))

|

||||||

|

if sPort != nil {

|

||||||

|

h.Write([]byte(sPort.String()))

|

||||||

|

} else {

|

||||||

|

h.Write([]byte("<nil>"))

|

||||||

|

}

|

||||||

|

|

||||||

|

h.Write([]byte("dPort:"))

|

||||||

|

if dPort != nil {

|

||||||

|

h.Write([]byte(dPort.String()))

|

||||||

|

} else {

|

||||||

|

h.Write([]byte("<nil>"))

|

||||||

|

}

|

||||||

|

|

||||||

|

h.Write([]byte("action:"))

|

||||||

|

h.Write([]byte(strconv.Itoa(int(action))))

|

||||||

|

hash := hex.EncodeToString(h.Sum(nil))

|

||||||

|

|

||||||

|

// prepend destination prefix to be able to identify the rule

|

||||||

|

return RuleID(fmt.Sprintf("%s-%s", destination.String(), hash[:16]))

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -832,7 +832,7 @@ func TestEngine_MultiplePeers(t *testing.T) {

|

|||||||

return

|

return

|

||||||

}

|

}

|

||||||

defer sigServer.Stop()

|

defer sigServer.Stop()

|

||||||

mgmtServer, mgmtAddr, err := startManagement(t, t.TempDir(), "../testdata/store.sqlite")

|

mgmtServer, mgmtAddr, err := startManagement(t, t.TempDir(), "../testdata/store.sql")

|

||||||

if err != nil {

|

if err != nil {

|

||||||

t.Fatal(err)

|

t.Fatal(err)

|

||||||

return

|

return

|

||||||

@@ -1080,7 +1080,7 @@ func startManagement(t *testing.T, dataDir, testFile string) (*grpc.Server, stri

|

|||||||

}

|

}

|

||||||

s := grpc.NewServer(grpc.KeepaliveEnforcementPolicy(kaep), grpc.KeepaliveParams(kasp))

|

s := grpc.NewServer(grpc.KeepaliveEnforcementPolicy(kaep), grpc.KeepaliveParams(kasp))

|

||||||

|

|

||||||

store, cleanUp, err := server.NewTestStoreFromSqlite(context.Background(), testFile, config.Datadir)

|

store, cleanUp, err := server.NewTestStoreFromSQL(context.Background(), testFile, config.Datadir)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

return nil, "", err

|

return nil, "", err

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -32,6 +32,8 @@ const (

|

|||||||

connPriorityRelay ConnPriority = 1

|

connPriorityRelay ConnPriority = 1

|

||||||

connPriorityICETurn ConnPriority = 1

|

connPriorityICETurn ConnPriority = 1

|

||||||

connPriorityICEP2P ConnPriority = 2

|

connPriorityICEP2P ConnPriority = 2

|

||||||

|

|

||||||

|

reconnectMaxElapsedTime = 30 * time.Minute

|

||||||

)

|

)

|

||||||

|

|

||||||

type WgConfig struct {

|

type WgConfig struct {

|

||||||

@@ -83,6 +85,7 @@ type Conn struct {

|

|||||||

wgProxyICE wgproxy.Proxy

|

wgProxyICE wgproxy.Proxy

|

||||||

wgProxyRelay wgproxy.Proxy

|

wgProxyRelay wgproxy.Proxy

|

||||||

signaler *Signaler

|

signaler *Signaler

|

||||||

|

iFaceDiscover stdnet.ExternalIFaceDiscover

|

||||||

relayManager *relayClient.Manager

|

relayManager *relayClient.Manager

|

||||||

allowedIPsIP string

|

allowedIPsIP string

|

||||||

handshaker *Handshaker

|

handshaker *Handshaker

|

||||||

@@ -108,6 +111,8 @@ type Conn struct {

|

|||||||

// for reconnection operations

|

// for reconnection operations

|

||||||

iCEDisconnected chan bool

|

iCEDisconnected chan bool

|

||||||

relayDisconnected chan bool

|

relayDisconnected chan bool

|

||||||

|

connMonitor *ConnMonitor

|

||||||

|

reconnectCh <-chan struct{}

|

||||||

}

|

}

|

||||||

|

|

||||||

// NewConn creates a new not opened Conn to the remote peer.

|

// NewConn creates a new not opened Conn to the remote peer.

|

||||||

@@ -123,21 +128,31 @@ func NewConn(engineCtx context.Context, config ConnConfig, statusRecorder *Statu

|

|||||||

connLog := log.WithField("peer", config.Key)

|

connLog := log.WithField("peer", config.Key)

|

||||||

|

|

||||||

var conn = &Conn{

|

var conn = &Conn{

|

||||||

log: connLog,

|

log: connLog,

|

||||||

ctx: ctx,

|

ctx: ctx,

|

||||||

ctxCancel: ctxCancel,

|

ctxCancel: ctxCancel,

|

||||||

config: config,

|

config: config,

|

||||||

statusRecorder: statusRecorder,

|

statusRecorder: statusRecorder,

|

||||||

wgProxyFactory: wgProxyFactory,

|

wgProxyFactory: wgProxyFactory,

|

||||||

signaler: signaler,

|

signaler: signaler,

|

||||||

relayManager: relayManager,

|

iFaceDiscover: iFaceDiscover,

|

||||||

allowedIPsIP: allowedIPsIP.String(),

|

relayManager: relayManager,

|

||||||

statusRelay: NewAtomicConnStatus(),

|

allowedIPsIP: allowedIPsIP.String(),

|

||||||

statusICE: NewAtomicConnStatus(),

|

statusRelay: NewAtomicConnStatus(),

|

||||||

|

statusICE: NewAtomicConnStatus(),

|

||||||

|

|

||||||

iCEDisconnected: make(chan bool, 1),

|

iCEDisconnected: make(chan bool, 1),

|

||||||

relayDisconnected: make(chan bool, 1),

|

relayDisconnected: make(chan bool, 1),

|

||||||

}

|

}

|

||||||

|

|

||||||

|

conn.connMonitor, conn.reconnectCh = NewConnMonitor(

|

||||||

|

signaler,

|

||||||

|

iFaceDiscover,

|

||||||

|

config,

|

||||||

|

conn.relayDisconnected,

|

||||||

|

conn.iCEDisconnected,

|

||||||

|

)

|

||||||

|

|

||||||

rFns := WorkerRelayCallbacks{

|

rFns := WorkerRelayCallbacks{

|

||||||

OnConnReady: conn.relayConnectionIsReady,

|

OnConnReady: conn.relayConnectionIsReady,

|

||||||

OnDisconnected: conn.onWorkerRelayStateDisconnected,

|

OnDisconnected: conn.onWorkerRelayStateDisconnected,

|

||||||

@@ -200,6 +215,8 @@ func (conn *Conn) startHandshakeAndReconnect() {

|

|||||||

conn.log.Errorf("failed to send initial offer: %v", err)

|

conn.log.Errorf("failed to send initial offer: %v", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

|

go conn.connMonitor.Start(conn.ctx)

|

||||||

|

|

||||||

if conn.workerRelay.IsController() {

|

if conn.workerRelay.IsController() {

|

||||||

conn.reconnectLoopWithRetry()

|

conn.reconnectLoopWithRetry()

|

||||||

} else {

|

} else {

|

||||||

@@ -309,12 +326,14 @@ func (conn *Conn) reconnectLoopWithRetry() {

|

|||||||

// With it, we can decrease to send necessary offer

|

// With it, we can decrease to send necessary offer

|

||||||

select {

|

select {

|

||||||

case <-conn.ctx.Done():

|

case <-conn.ctx.Done():

|

||||||

|

return

|

||||||

case <-time.After(3 * time.Second):

|

case <-time.After(3 * time.Second):

|

||||||

}

|

}

|

||||||

|

|

||||||

ticker := conn.prepareExponentTicker()

|

ticker := conn.prepareExponentTicker()

|

||||||

defer ticker.Stop()

|

defer ticker.Stop()

|

||||||

time.Sleep(1 * time.Second)

|

time.Sleep(1 * time.Second)

|

||||||

|

|

||||||

for {

|

for {

|

||||||

select {

|

select {

|

||||||

case t := <-ticker.C:

|

case t := <-ticker.C:

|

||||||

@@ -342,20 +361,11 @@ func (conn *Conn) reconnectLoopWithRetry() {

|

|||||||

if err != nil {

|

if err != nil {

|

||||||

conn.log.Errorf("failed to do handshake: %v", err)

|

conn.log.Errorf("failed to do handshake: %v", err)

|

||||||

}

|

}

|

||||||

case changed := <-conn.relayDisconnected:

|

|

||||||

if !changed {

|

case <-conn.reconnectCh:

|

||||||

continue

|

|

||||||

}

|

|

||||||

conn.log.Debugf("Relay state changed, reset reconnect timer")

|

|

||||||

ticker.Stop()

|

|

||||||

ticker = conn.prepareExponentTicker()

|

|

||||||

case changed := <-conn.iCEDisconnected:

|

|

||||||

if !changed {

|

|

||||||

continue

|

|

||||||

}

|

|

||||||

conn.log.Debugf("ICE state changed, reset reconnect timer")

|

|

||||||

ticker.Stop()

|

ticker.Stop()

|

||||||

ticker = conn.prepareExponentTicker()

|

ticker = conn.prepareExponentTicker()

|

||||||

|

|

||||||

case <-conn.ctx.Done():

|

case <-conn.ctx.Done():

|

||||||

conn.log.Debugf("context is done, stop reconnect loop")

|

conn.log.Debugf("context is done, stop reconnect loop")

|

||||||

return

|

return

|

||||||

@@ -366,10 +376,10 @@ func (conn *Conn) reconnectLoopWithRetry() {

|

|||||||

func (conn *Conn) prepareExponentTicker() *backoff.Ticker {

|

func (conn *Conn) prepareExponentTicker() *backoff.Ticker {

|

||||||

bo := backoff.WithContext(&backoff.ExponentialBackOff{

|

bo := backoff.WithContext(&backoff.ExponentialBackOff{

|

||||||

InitialInterval: 800 * time.Millisecond,

|

InitialInterval: 800 * time.Millisecond,

|

||||||

RandomizationFactor: 0.01,

|

RandomizationFactor: 0.1,

|

||||||

Multiplier: 2,

|

Multiplier: 2,

|

||||||

MaxInterval: conn.config.Timeout,

|

MaxInterval: conn.config.Timeout,

|

||||||

MaxElapsedTime: 0,

|

MaxElapsedTime: reconnectMaxElapsedTime,

|

||||||

Stop: backoff.Stop,

|

Stop: backoff.Stop,

|

||||||

Clock: backoff.SystemClock,

|

Clock: backoff.SystemClock,

|

||||||

}, conn.ctx)

|

}, conn.ctx)

|

||||||

|

|||||||

212

client/internal/peer/conn_monitor.go

Normal file


@@ -0,0 +1,212 @@

|

|||||||

|

package peer

|

||||||

|

|

||||||

|

import (

|

||||||

|

"context"

|

||||||

|

"fmt"

|

||||||

|

"sync"

|

||||||

|

"time"

|

||||||

|

|

||||||

|

"github.com/pion/ice/v3"

|

||||||

|

log "github.com/sirupsen/logrus"

|

||||||

|

|

||||||

|

"github.com/netbirdio/netbird/client/internal/stdnet"

|

||||||

|

)

|

||||||

|

|

||||||

|

const (

|

||||||

|

signalerMonitorPeriod = 5 * time.Second

|

||||||

|

candidatesMonitorPeriod = 5 * time.Minute

|

||||||

|

candidateGatheringTimeout = 5 * time.Second

|

||||||

|

)

|

||||||

|

|

||||||

|

type ConnMonitor struct {

|

||||||

|

signaler *Signaler

|

||||||

|

iFaceDiscover stdnet.ExternalIFaceDiscover

|

||||||

|

config ConnConfig

|

||||||

|

relayDisconnected chan bool

|

||||||

|

iCEDisconnected chan bool

|

||||||

|

reconnectCh chan struct{}

|

||||||

|

currentCandidates []ice.Candidate

|

||||||

|

candidatesMu sync.Mutex

|

||||||

|

}

|

||||||

|

|

||||||

|

func NewConnMonitor(signaler *Signaler, iFaceDiscover stdnet.ExternalIFaceDiscover, config ConnConfig, relayDisconnected, iCEDisconnected chan bool) (*ConnMonitor, <-chan struct{}) {

|

||||||

|

reconnectCh := make(chan struct{}, 1)

|

||||||

|

cm := &ConnMonitor{

|

||||||

|

signaler: signaler,

|

||||||

|

iFaceDiscover: iFaceDiscover,

|

||||||

|

config: config,

|

||||||

|

relayDisconnected: relayDisconnected,

|

||||||

|

iCEDisconnected: iCEDisconnected,

|

||||||

|

reconnectCh: reconnectCh,

|

||||||

|

}

|

||||||

|

return cm, reconnectCh

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) Start(ctx context.Context) {

|

||||||

|

signalerReady := make(chan struct{}, 1)

|

||||||

|

go cm.monitorSignalerReady(ctx, signalerReady)

|

||||||

|

|

||||||

|

localCandidatesChanged := make(chan struct{}, 1)

|

||||||

|

go cm.monitorLocalCandidatesChanged(ctx, localCandidatesChanged)

|

||||||

|

|

||||||

|

for {

|

||||||

|

select {

|

||||||

|

case changed := <-cm.relayDisconnected:

|

||||||

|

if !changed {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

log.Debugf("Relay state changed, triggering reconnect")

|

||||||

|

cm.triggerReconnect()

|

||||||

|

|

||||||

|

case changed := <-cm.iCEDisconnected:

|

||||||

|

if !changed {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

log.Debugf("ICE state changed, triggering reconnect")

|

||||||

|

cm.triggerReconnect()

|

||||||

|

|

||||||

|

case <-signalerReady:

|

||||||

|

log.Debugf("Signaler became ready, triggering reconnect")

|

||||||

|

cm.triggerReconnect()

|

||||||

|

|

||||||

|

case <-localCandidatesChanged:

|

||||||

|

log.Debugf("Local candidates changed, triggering reconnect")

|

||||||

|

cm.triggerReconnect()

|

||||||

|

|

||||||

|

case <-ctx.Done():

|

||||||

|

return

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) monitorSignalerReady(ctx context.Context, signalerReady chan<- struct{}) {

|

||||||

|

if cm.signaler == nil {

|

||||||

|

return

|

||||||

|

}

|

||||||

|

|

||||||

|

ticker := time.NewTicker(signalerMonitorPeriod)

|

||||||

|

defer ticker.Stop()

|

||||||

|

|

||||||

|

lastReady := true

|

||||||

|

for {

|

||||||

|

select {

|

||||||

|

case <-ticker.C:

|

||||||

|

currentReady := cm.signaler.Ready()

|

||||||

|

if !lastReady && currentReady {

|

||||||

|

select {

|

||||||

|

case signalerReady <- struct{}{}:

|

||||||

|

default:

|

||||||

|

}

|

||||||

|

}

|

||||||

|

lastReady = currentReady

|

||||||

|

case <-ctx.Done():

|

||||||

|

return

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) monitorLocalCandidatesChanged(ctx context.Context, localCandidatesChanged chan<- struct{}) {

|

||||||

|

ufrag, pwd, err := generateICECredentials()

|

||||||

|

if err != nil {

|

||||||

|

log.Warnf("Failed to generate ICE credentials: %v", err)

|

||||||

|

return

|

||||||

|

}

|

||||||

|

|

||||||

|

ticker := time.NewTicker(candidatesMonitorPeriod)

|

||||||

|

defer ticker.Stop()

|

||||||

|

|

||||||

|

for {

|

||||||

|

select {

|

||||||

|

case <-ticker.C:

|

||||||

|

if err := cm.handleCandidateTick(ctx, localCandidatesChanged, ufrag, pwd); err != nil {

|

||||||

|

log.Warnf("Failed to handle candidate tick: %v", err)

|

||||||

|

}

|

||||||

|

case <-ctx.Done():

|

||||||

|

return

|

||||||

|

}

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) handleCandidateTick(ctx context.Context, localCandidatesChanged chan<- struct{}, ufrag string, pwd string) error {

|

||||||

|

log.Debugf("Gathering ICE candidates")

|

||||||

|

|

||||||

|

transportNet, err := newStdNet(cm.iFaceDiscover, cm.config.ICEConfig.InterfaceBlackList)

|

||||||

|

if err != nil {

|

||||||

|

log.Errorf("failed to create pion's stdnet: %s", err)

|

||||||

|

}

|

||||||

|

|

||||||

|

agent, err := newAgent(cm.config, transportNet, candidateTypesP2P(), ufrag, pwd)

|

||||||

|

if err != nil {

|

||||||

|

return fmt.Errorf("create ICE agent: %w", err)

|

||||||

|

}

|

||||||

|

defer func() {

|

||||||

|

if err := agent.Close(); err != nil {

|

||||||

|

log.Warnf("Failed to close ICE agent: %v", err)

|

||||||

|

}

|

||||||

|

}()

|

||||||

|

|

||||||

|

gatherDone := make(chan struct{})

|

||||||

|

err = agent.OnCandidate(func(c ice.Candidate) {

|

||||||

|

log.Tracef("Got candidate: %v", c)

|

||||||

|

if c == nil {

|

||||||

|

close(gatherDone)

|

||||||

|

}

|

||||||

|

})

|

||||||

|

if err != nil {

|

||||||

|

return fmt.Errorf("set ICE candidate handler: %w", err)

|

||||||

|

}

|

||||||

|

|

||||||

|

if err := agent.GatherCandidates(); err != nil {

|

||||||

|

return fmt.Errorf("gather ICE candidates: %w", err)

|

||||||

|

}

|

||||||

|

|

||||||

|

ctx, cancel := context.WithTimeout(ctx, candidateGatheringTimeout)

|

||||||

|

defer cancel()

|

||||||

|

|

||||||

|

select {

|

||||||

|

case <-ctx.Done():

|

||||||

|

return fmt.Errorf("wait for gathering: %w", ctx.Err())

|

||||||

|

case <-gatherDone:

|

||||||

|

}

|

||||||

|

|

||||||

|

candidates, err := agent.GetLocalCandidates()

|

||||||

|

if err != nil {

|

||||||

|

return fmt.Errorf("get local candidates: %w", err)

|

||||||

|

}

|

||||||

|

log.Tracef("Got candidates: %v", candidates)

|

||||||

|

|

||||||

|

if changed := cm.updateCandidates(candidates); changed {

|

||||||

|

select {

|

||||||

|

case localCandidatesChanged <- struct{}{}:

|

||||||

|

default:

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) updateCandidates(newCandidates []ice.Candidate) bool {

|

||||||

|

cm.candidatesMu.Lock()

|

||||||

|

defer cm.candidatesMu.Unlock()

|

||||||

|

|

||||||

|

if len(cm.currentCandidates) != len(newCandidates) {

|

||||||

|

cm.currentCandidates = newCandidates

|

||||||

|

return true

|

||||||

|

}

|

||||||

|

|

||||||

|

for i, candidate := range cm.currentCandidates {

|

||||||

|

if candidate.Address() != newCandidates[i].Address() {

|

||||||

|

cm.currentCandidates = newCandidates

|

||||||

|

return true

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

return false

|

||||||

|

}

|

||||||

|

|

||||||

|

func (cm *ConnMonitor) triggerReconnect() {

|

||||||

|

select {

|

||||||

|

case cm.reconnectCh <- struct{}{}:

|

||||||

|

default:

|

||||||

|

}

|

||||||

|

}

|

||||||
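`triggerReconnect`, `monitorSignalerReady` and `handleCandidateTick` in the new file all signal through a channel of capacity 1 using `select` with a `default` branch. A small self-contained sketch of that pattern is shown below. The `notifier` type and its method names are hypothetical and exist only for illustration.

```go
package main

import "fmt"

// notifier is a hypothetical wrapper around a buffered channel of size 1.
// It coalesces any number of triggers into at most one pending signal.
type notifier struct {
	ch chan struct{}
}

func newNotifier() *notifier {
	return &notifier{ch: make(chan struct{}, 1)}
}

// trigger queues a signal without ever blocking the caller.
// It reports whether the signal was queued (true) or dropped because
// one was already pending (false).
func (n *notifier) trigger() bool {
	select {
	case n.ch <- struct{}{}:
		return true
	default:
		return false
	}
}

func main() {
	n := newNotifier()

	first := n.trigger()  // buffer empty: signal is queued
	second := n.trigger() // buffer full: signal is dropped
	fmt.Println(first, second)

	<-n.ch // the consumer drains the single pending signal
	fmt.Println(n.trigger())
}
```

The design choice here is that the producer never blocks on a slow consumer, and several events that arrive before the reconnect loop wakes up result in a single reconnect attempt rather than a queue of them.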

@@ -6,6 +6,6 @@ import (

|

|||||||

"github.com/netbirdio/netbird/client/internal/stdnet"

|

"github.com/netbirdio/netbird/client/internal/stdnet"

|

||||||

)

|

)

|

||||||

|

|

||||||

func (w *WorkerICE) newStdNet() (*stdnet.Net, error) {

|

func newStdNet(_ stdnet.ExternalIFaceDiscover, ifaceBlacklist []string) (*stdnet.Net, error) {

|

||||||

return stdnet.NewNet(w.config.ICEConfig.InterfaceBlackList)

|

return stdnet.NewNet(ifaceBlacklist)

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -2,6 +2,6 @@ package peer

|

|||||||

|

|

||||||

import "github.com/netbirdio/netbird/client/internal/stdnet"

|

import "github.com/netbirdio/netbird/client/internal/stdnet"

|

||||||

|

|

||||||

func (w *WorkerICE) newStdNet() (*stdnet.Net, error) {

|

func newStdNet(iFaceDiscover stdnet.ExternalIFaceDiscover, ifaceBlacklist []string) (*stdnet.Net, error) {

|

||||||

return stdnet.NewNetWithDiscover(w.iFaceDiscover, w.config.ICEConfig.InterfaceBlackList)

|

return stdnet.NewNetWithDiscover(iFaceDiscover, ifaceBlacklist)

|

||||||

}

|

}

|

||||||

|

|||||||

@@ -233,41 +233,16 @@ func (w *WorkerICE) Close() {

|

|||||||

}

|

}

|

||||||

|

|

||||||

func (w *WorkerICE) reCreateAgent(agentCancel context.CancelFunc, relaySupport []ice.CandidateType) (*ice.Agent, error) {

|

func (w *WorkerICE) reCreateAgent(agentCancel context.CancelFunc, relaySupport []ice.CandidateType) (*ice.Agent, error) {

|

||||||

transportNet, err := w.newStdNet()

|

transportNet, err := newStdNet(w.iFaceDiscover, w.config.ICEConfig.InterfaceBlackList)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

w.log.Errorf("failed to create pion's stdnet: %s", err)

|

w.log.Errorf("failed to create pion's stdnet: %s", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

iceKeepAlive := iceKeepAlive()

|

|

||||||

iceDisconnectedTimeout := iceDisconnectedTimeout()

|

|

||||||

iceRelayAcceptanceMinWait := iceRelayAcceptanceMinWait()

|

|

||||||

|

|

||||||

agentConfig := &ice.AgentConfig{

|

|

||||||

MulticastDNSMode: ice.MulticastDNSModeDisabled,

|

|

||||||

NetworkTypes: []ice.NetworkType{ice.NetworkTypeUDP4, ice.NetworkTypeUDP6},

|

|

||||||

Urls: w.config.ICEConfig.StunTurn.Load().([]*stun.URI),

|

|

||||||

CandidateTypes: relaySupport,

|

|

||||||

InterfaceFilter: stdnet.InterfaceFilter(w.config.ICEConfig.InterfaceBlackList),

|

|

||||||

UDPMux: w.config.ICEConfig.UDPMux,

|

|

||||||

UDPMuxSrflx: w.config.ICEConfig.UDPMuxSrflx,

|

|

||||||

NAT1To1IPs: w.config.ICEConfig.NATExternalIPs,

|

|

||||||

Net: transportNet,

|

|

||||||

FailedTimeout: &failedTimeout,

|

|

||||||

DisconnectedTimeout: &iceDisconnectedTimeout,

|

|

||||||

KeepaliveInterval: &iceKeepAlive,

|

|

||||||

RelayAcceptanceMinWait: &iceRelayAcceptanceMinWait,

|

|

||||||

LocalUfrag: w.localUfrag,

|

|

||||||

LocalPwd: w.localPwd,

|

|

||||||

}

|

|

||||||

|

|

||||||

if w.config.ICEConfig.DisableIPv6Discovery {

|

|

||||||

agentConfig.NetworkTypes = []ice.NetworkType{ice.NetworkTypeUDP4}

|

|

||||||

}

|

|

||||||

|

|

||||||

w.sentExtraSrflx = false

|

w.sentExtraSrflx = false

|

||||||

agent, err := ice.NewAgent(agentConfig)

|

|

||||||

|

agent, err := newAgent(w.config, transportNet, relaySupport, w.localUfrag, w.localPwd)

|

||||||

if err != nil {

|

if err != nil {

|

||||||

return nil, err

|

return nil, fmt.Errorf("create agent: %w", err)

|

||||||

}

|

}

|

||||||

|

|

||||||

err = agent.OnCandidate(w.onICECandidate)

|

err = agent.OnCandidate(w.onICECandidate)

|

||||||

@@ -390,6 +365,36 @@ func (w *WorkerICE) turnAgentDial(ctx context.Context, remoteOfferAnswer *OfferA

|

|||||||

}

|

}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

func newAgent(config ConnConfig, transportNet *stdnet.Net, candidateTypes []ice.CandidateType, ufrag string, pwd string) (*ice.Agent, error) {

|

||||||

|

iceKeepAlive := iceKeepAlive()

|

||||||

|

iceDisconnectedTimeout := iceDisconnectedTimeout()

|

||||||

|

iceRelayAcceptanceMinWait := iceRelayAcceptanceMinWait()

|

||||||

|

|

||||||

|

agentConfig := &ice.AgentConfig{

|

||||||

|

MulticastDNSMode: ice.MulticastDNSModeDisabled,

|

||||||

|

NetworkTypes: []ice.NetworkType{ice.NetworkTypeUDP4, ice.NetworkTypeUDP6},

|

||||||

|

Urls: config.ICEConfig.StunTurn.Load().([]*stun.URI),

|

||||||

|

CandidateTypes: candidateTypes,

|

||||||

|

InterfaceFilter: stdnet.InterfaceFilter(config.ICEConfig.InterfaceBlackList),

|

||||||

|

UDPMux: config.ICEConfig.UDPMux,

|

||||||

|

UDPMuxSrflx: config.ICEConfig.UDPMuxSrflx,

|

||||||

|

NAT1To1IPs: config.ICEConfig.NATExternalIPs,

|

||||||

|

Net: transportNet,

|

||||||

|

FailedTimeout: &failedTimeout,

|

||||||

|

DisconnectedTimeout: &iceDisconnectedTimeout,

|

||||||

|

KeepaliveInterval: &iceKeepAlive,

|

||||||

|

RelayAcceptanceMinWait: &iceRelayAcceptanceMinWait,

|

||||||

|

LocalUfrag: ufrag,

|

||||||

|

LocalPwd: pwd,

|

||||||

|

}

|

||||||

|

|

||||||