mirror of https://github.com/netbirdio/netbird.git

synced 2026-05-05 08:36:37 +00:00

Compare commits

14 Commits

poc/prepro...v0.51.1

| Author | SHA1 | Date | |
|---|---|---|---|
| | b524f486e2 | | |
| | 0dab03252c | | |
| | e49bcc343d | | |
| | 3e6eede152 | | |
| | a76c8eafb4 | | |
| | 2b9f331980 | | |
| | a7ea881900 | | |
| | 8632dd15f1 | | |
| | e3b40ba694 | | |
| | e59d75d56a | | |
| | 408f423adc | | |
| | f17dd3619c | | |
| | 969f1ed59a | | |
| | 768ba24fda | | |

@@ -43,7 +43,7 @@ jobs:
       - name: gomobile init
         run: gomobile init
       - name: build android netbird lib
-        run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
+        run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-checklinkname=0 -X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
         env:
           CGO_ENABLED: 0
           ANDROID_NDK_HOME: /usr/local/lib/android/sdk/ndk/23.1.7779620

@@ -50,10 +50,9 @@

|

||||

|

||||

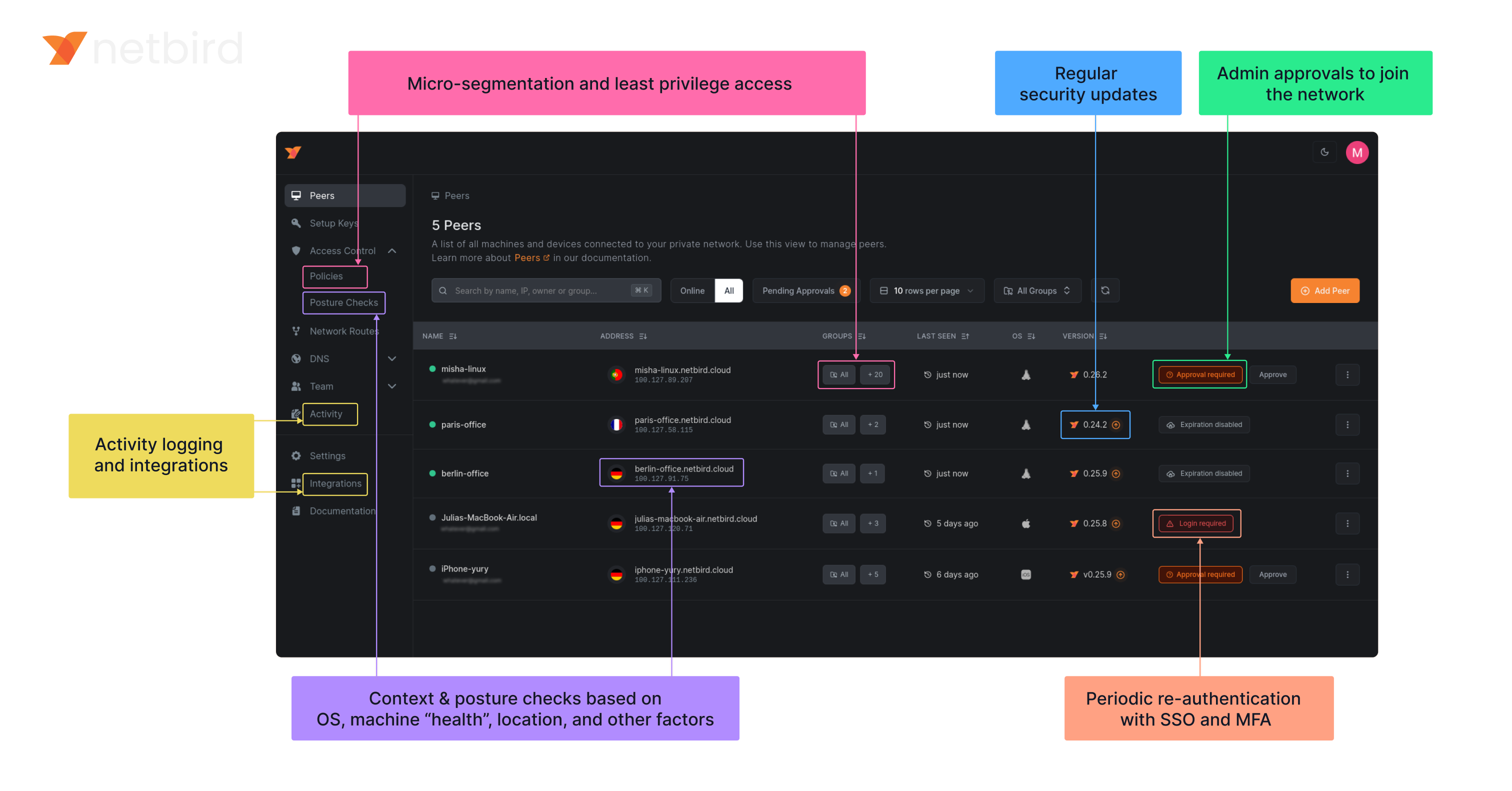

**Secure.** NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

|

||||

|

||||

### Open-Source Network Security in a Single Platform

|

||||

### Open Source Network Security in a Single Platform

|

||||

|

||||

|

||||

|

||||

<img width="1188" alt="centralized-network-management 1" src="https://github.com/user-attachments/assets/c28cc8e4-15d2-4d2f-bb97-a6433db39d56" />

|

||||

|

||||

### NetBird on Lawrence Systems (Video)

|

||||

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||

|

||||

@@ -64,7 +64,9 @@ type Client struct {
 }

 // NewClient instantiate a new Client
-func NewClient(cfgFile, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {
+func NewClient(cfgFile string, androidSDKVersion int, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {
+    execWorkaround(androidSDKVersion)
+
     net.SetAndroidProtectSocketFn(tunAdapter.ProtectSocket)
     return &Client{
         cfgFile: cfgFile,

26

client/android/exec.go

Normal file


@@ -0,0 +1,26 @@
+//go:build android
+
+package android
+
+import (
+    "fmt"
+    _ "unsafe"
+)
+
+// https://github.com/golang/go/pull/69543/commits/aad6b3b32c81795f86bc4a9e81aad94899daf520
+// In Android version 11 and earlier, pidfd-related system calls
+// are not allowed by the seccomp policy, which causes crashes due
+// to SIGSYS signals.
+
+//go:linkname checkPidfdOnce os.checkPidfdOnce
+var checkPidfdOnce func() error
+
+func execWorkaround(androidSDKVersion int) {
+    if androidSDKVersion > 30 { // above Android 11
+        return
+    }
+
+    checkPidfdOnce = func() error {
+        return fmt.Errorf("unsupported Android version")
+    }
+}
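The workaround in `exec.go` swaps a package-level function variable only when the SDK level is 30 or lower. A minimal sketch of that gating logic follows. It deliberately avoids `//go:linkname` (which would need the `-checklinkname=0` linker flag and a matching symbol in package `os`), and `pidfdCheck`, `applyWorkaround`, `maxAffectedSDK`, and `errUnsupported` are hypothetical names used only for illustration.

```go
package main

import (
	"errors"
	"fmt"
)

// maxAffectedSDK is the highest Android API level treated as affected.
// 30 corresponds to Android 11, matching the comparison in the diff.
const maxAffectedSDK = 30

// errUnsupported is a hypothetical sentinel standing in for the error
// returned by the replacement hook.
var errUnsupported = errors.New("unsupported Android version")

// pidfdCheck stands in for the hooked runtime check (an assumption for
// this sketch); by default it reports success.
var pidfdCheck = func() error { return nil }

// applyWorkaround replaces the hook with one that always fails when the
// SDK level is at or below maxAffectedSDK. It reports whether the hook
// was replaced.
func applyWorkaround(sdk int) bool {
	if sdk > maxAffectedSDK {
		return false
	}
	pidfdCheck = func() error { return errUnsupported }
	return true
}

func main() {
	fmt.Println(applyWorkaround(33), pidfdCheck()) // hook left untouched
	fmt.Println(applyWorkaround(30), pidfdCheck()) // hook replaced
}
```

Because the hook is forced to fail on older devices, callers are expected to fall back to a code path that does not use pidfd system calls.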

@@ -17,10 +17,18 @@ import (
     "github.com/netbirdio/netbird/client/server"
     nbstatus "github.com/netbirdio/netbird/client/status"
     mgmProto "github.com/netbirdio/netbird/management/proto"
+    "github.com/netbirdio/netbird/upload-server/types"
 )

 const errCloseConnection = "Failed to close connection: %v"

+var (
+    logFileCount uint32
+    systemInfoFlag bool
+    uploadBundleFlag bool
+    uploadBundleURLFlag string
+)
+
 var debugCmd = &cobra.Command{
     Use: "debug",
     Short: "Debugging commands",

@@ -88,12 +96,13 @@ func debugBundle(cmd *cobra.Command, _ []string) error {

     client := proto.NewDaemonServiceClient(conn)
     request := &proto.DebugBundleRequest{
-        Anonymize:  anonymizeFlag,
-        Status:     getStatusOutput(cmd, anonymizeFlag),
-        SystemInfo: debugSystemInfoFlag,
+        Anonymize:    anonymizeFlag,
+        Status:       getStatusOutput(cmd, anonymizeFlag),
+        SystemInfo:   systemInfoFlag,
+        LogFileCount: logFileCount,
     }
-    if debugUploadBundle {
-        request.UploadURL = debugUploadBundleURL
+    if uploadBundleFlag {
+        request.UploadURL = uploadBundleURLFlag
     }
     resp, err := client.DebugBundle(cmd.Context(), request)
     if err != nil {

@@ -105,7 +114,7 @@ func debugBundle(cmd *cobra.Command, _ []string) error {
         return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())
     }

-    if debugUploadBundle {
+    if uploadBundleFlag {
         cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())
     }


@@ -223,12 +232,13 @@ func runForDuration(cmd *cobra.Command, args []string) error {
     headerPreDown := fmt.Sprintf("----- Netbird pre-down - Timestamp: %s - Duration: %s", time.Now().Format(time.RFC3339), duration)
     statusOutput = fmt.Sprintf("%s\n%s\n%s", statusOutput, headerPreDown, getStatusOutput(cmd, anonymizeFlag))
     request := &proto.DebugBundleRequest{
-        Anonymize:  anonymizeFlag,
-        Status:     statusOutput,
-        SystemInfo: debugSystemInfoFlag,
+        Anonymize:    anonymizeFlag,
+        Status:       statusOutput,
+        SystemInfo:   systemInfoFlag,
+        LogFileCount: logFileCount,
     }
-    if debugUploadBundle {
-        request.UploadURL = debugUploadBundleURL
+    if uploadBundleFlag {
+        request.UploadURL = uploadBundleURLFlag
     }
     resp, err := client.DebugBundle(cmd.Context(), request)
     if err != nil {

@@ -255,7 +265,7 @@ func runForDuration(cmd *cobra.Command, args []string) error {
         return fmt.Errorf("upload failed: %s", resp.GetUploadFailureReason())
     }

-    if debugUploadBundle {
+    if uploadBundleFlag {
         cmd.Printf("Upload file key:\n%s\n", resp.GetUploadedKey())
     }


@@ -375,3 +385,15 @@ func generateDebugBundle(config *internal.Config, recorder *peer.Status, connect
     }
     log.Infof("Generated debug bundle from SIGUSR1 at: %s", path)
 }
+
+func init() {
+    debugBundleCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")
+    debugBundleCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")
+    debugBundleCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")
+    debugBundleCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")
+
+    forCmd.Flags().Uint32VarP(&logFileCount, "log-file-count", "C", 1, "Number of rotated log files to include in debug bundle")
+    forCmd.Flags().BoolVarP(&systemInfoFlag, "system-info", "S", true, "Adds system information to the debug bundle")
+    forCmd.Flags().BoolVarP(&uploadBundleFlag, "upload-bundle", "U", false, "Uploads the debug bundle to a server")
+    forCmd.Flags().StringVar(&uploadBundleURLFlag, "upload-bundle-url", types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")
+}
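The flag registrations above feed directly into the bundle request: the log file count and system-info switch are always passed through, while the upload URL is only set when uploading is requested. The sketch below illustrates that mapping with the standard library `flag` package instead of cobra, so that it runs without third-party modules. `bundleRequest`, `parseBundleFlags`, and `placeholderURL` are hypothetical; the real default comes from `types.DefaultBundleURL`, whose value is not shown in this diff, and the standard library has no `uint32` flag type, so a plain `uint` is used.

```go
package main

import (
	"flag"
	"fmt"
)

// bundleRequest is a hypothetical stand-in for proto.DebugBundleRequest.
type bundleRequest struct {
	SystemInfo   bool
	LogFileCount uint
	UploadURL    string
}

// placeholderURL is an assumption for this sketch only.
const placeholderURL = "https://upload.example.invalid"

// parseBundleFlags parses args with the same flag names and defaults as
// the diff and maps them onto a request.
func parseBundleFlags(args []string) (bundleRequest, error) {
	fs := flag.NewFlagSet("bundle", flag.ContinueOnError)
	logFileCount := fs.Uint("log-file-count", 1, "number of rotated log files to include")
	systemInfo := fs.Bool("system-info", true, "add system information to the bundle")
	upload := fs.Bool("upload-bundle", false, "upload the bundle to a server")
	uploadURL := fs.String("upload-bundle-url", placeholderURL, "service URL used to obtain an upload URL")
	if err := fs.Parse(args); err != nil {
		return bundleRequest{}, err
	}

	req := bundleRequest{SystemInfo: *systemInfo, LogFileCount: *logFileCount}
	// The URL is only forwarded when uploading was requested.
	if *upload {
		req.UploadURL = *uploadURL
	}
	return req, nil
}

func main() {
	req, err := parseBundleFlags([]string{"--log-file-count=3", "--upload-bundle"})
	fmt.Println(req, err)
}
```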

@@ -22,7 +22,6 @@ import (
     "google.golang.org/grpc/credentials/insecure"

     "github.com/netbirdio/netbird/client/internal"
-    "github.com/netbirdio/netbird/upload-server/types"
 )

 const (

@@ -38,10 +37,7 @@ const (
     serverSSHAllowedFlag = "allow-server-ssh"
     extraIFaceBlackListFlag = "extra-iface-blacklist"
     dnsRouteIntervalFlag = "dns-router-interval"
-    systemInfoFlag = "system-info"
     enableLazyConnectionFlag = "enable-lazy-connection"
-    uploadBundle = "upload-bundle"
-    uploadBundleURL = "upload-bundle-url"
 )

 var (

@@ -75,10 +71,7 @@ var (
     autoConnectDisabled bool
     extraIFaceBlackList []string
     anonymizeFlag bool
-    debugSystemInfoFlag bool
     dnsRouteInterval time.Duration
-    debugUploadBundle bool
-    debugUploadBundleURL string
     lazyConnEnabled bool

     rootCmd = &cobra.Command{

@@ -184,11 +177,8 @@ func init() {
     upCmd.PersistentFlags().BoolVar(&rosenpassPermissive, rosenpassPermissiveFlag, false, "[Experimental] Enable Rosenpass in permissive mode to allow this peer to accept WireGuard connections without requiring Rosenpass functionality from peers that do not have Rosenpass enabled.")
     upCmd.PersistentFlags().BoolVar(&serverSSHAllowed, serverSSHAllowedFlag, false, "Allow SSH server on peer. If enabled, the SSH server will be permitted")
     upCmd.PersistentFlags().BoolVar(&autoConnectDisabled, disableAutoConnectFlag, false, "Disables auto-connect feature. If enabled, then the client won't connect automatically when the service starts.")
-    upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand.")
+    upCmd.PersistentFlags().BoolVar(&lazyConnEnabled, enableLazyConnectionFlag, false, "[Experimental] Enable the lazy connection feature. If enabled, the client will establish connections on-demand. Note: this setting may be overridden by management configuration.")

-    debugCmd.PersistentFlags().BoolVarP(&debugSystemInfoFlag, systemInfoFlag, "S", true, "Adds system information to the debug bundle")
-    debugCmd.PersistentFlags().BoolVarP(&debugUploadBundle, uploadBundle, "U", false, fmt.Sprintf("Uploads the debug bundle to a server from URL defined by %s", uploadBundleURL))
-    debugCmd.PersistentFlags().StringVar(&debugUploadBundleURL, uploadBundleURL, types.DefaultBundleURL, "Service URL to get an URL to upload the debug bundle")
 }

 // SetupCloseHandler handles SIGTERM signal and exits with success

@@ -34,14 +34,14 @@ func NewActivityRecorder() *ActivityRecorder {
 }

 // GetLastActivities returns a snapshot of peer last activity
-func (r *ActivityRecorder) GetLastActivities() map[string]time.Time {
+func (r *ActivityRecorder) GetLastActivities() map[string]monotime.Time {
     r.mu.RLock()
     defer r.mu.RUnlock()

-    activities := make(map[string]time.Time, len(r.peers))
+    activities := make(map[string]monotime.Time, len(r.peers))
     for key, record := range r.peers {
-        unixNano := record.LastActivity.Load()
-        activities[key] = time.Unix(0, unixNano)
+        monoTime := record.LastActivity.Load()
+        activities[key] = monotime.Time(monoTime)
     }
     return activities
 }

@@ -51,18 +51,20 @@ func (r *ActivityRecorder) UpsertAddress(publicKey string, address netip.AddrPor
     r.mu.Lock()
     defer r.mu.Unlock()

-    if pr, exists := r.peers[publicKey]; exists {
-        delete(r.addrToPeer, pr.Address)
-        pr.Address = address
+    var record *PeerRecord
+    record, exists := r.peers[publicKey]
+    if exists {
+        delete(r.addrToPeer, record.Address)
+        record.Address = address
     } else {
-        record := &PeerRecord{
+        record = &PeerRecord{
             Address: address,
         }
-        record.LastActivity.Store(monotime.Now())
+        record.LastActivity.Store(int64(monotime.Now()))
         r.peers[publicKey] = record
     }

-    r.addrToPeer[address] = r.peers[publicKey]
+    r.addrToPeer[address] = record
 }

 func (r *ActivityRecorder) Remove(publicKey string) {
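The `UpsertAddress` change keeps two indexes in step: a map from public key to record and a reverse map from address to the same record. A minimal sketch of that pattern follows, assuming simplified types. `recorder`, `peerRecord`, `upsert`, and `mustAddr` are hypothetical names, and the mutex from the original is omitted to keep the example short.

```go
package main

import (
	"fmt"
	"net/netip"
)

// peerRecord is a simplified stand-in for the PeerRecord type in the diff.
type peerRecord struct {
	Address netip.AddrPort
}

// recorder keeps a forward index (key -> record) and a reverse index
// (address -> record) that must always point at the same record.
type recorder struct {
	peers      map[string]*peerRecord
	addrToPeer map[netip.AddrPort]*peerRecord
}

func newRecorder() *recorder {
	return &recorder{
		peers:      make(map[string]*peerRecord),
		addrToPeer: make(map[netip.AddrPort]*peerRecord),
	}
}

// upsert updates the address of an existing peer or creates a new record,
// removing any stale reverse-index entry first.
func (r *recorder) upsert(publicKey string, address netip.AddrPort) {
	record, exists := r.peers[publicKey]
	if exists {
		delete(r.addrToPeer, record.Address)
		record.Address = address
	} else {
		record = &peerRecord{Address: address}
		r.peers[publicKey] = record
	}
	r.addrToPeer[address] = record
}

// mustAddr is a small helper for the example.
func mustAddr(s string) netip.AddrPort { return netip.MustParseAddrPort(s) }

func main() {
	r := newRecorder()
	r.upsert("peerA", mustAddr("10.0.0.1:51820"))
	r.upsert("peerA", mustAddr("10.0.0.2:51820"))
	fmt.Println(len(r.peers), len(r.addrToPeer))
}
```

Holding the record in a single variable for both branches avoids a second map lookup when the reverse index is written.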

@@ -84,7 +86,7 @@ func (r *ActivityRecorder) record(address netip.AddrPort) {
         return
     }

-    now := monotime.Now()
+    now := int64(monotime.Now())
     last := record.LastActivity.Load()
     if now-last < saveFrequency {
         return
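The activity recorder stores a monotonic timestamp in an atomic integer and skips updates that arrive within `saveFrequency` of the last stored value. The following is a sketch under stated assumptions, not the project's `monotime` package: `Time`, `Now`, and `Since` are hypothetical re-creations built on `time.Since`, the 5-second `saveFrequency` is an assumed value, and the `CompareAndSwap` at the end of `touch` is an assumption about how the store could be made safe for concurrent callers.

```go
package main

import (
	"fmt"
	"sync/atomic"
	"time"
)

// Time is a hypothetical monotonic timestamp: nanoseconds elapsed since
// the process captured processStart.
type Time int64

// processStart carries a monotonic clock reading, so time.Since on it is
// not affected by wall-clock adjustments.
var processStart = time.Now()

// Now returns the current monotonic timestamp.
func Now() Time { return Time(time.Since(processStart)) }

// Since returns the duration elapsed since t.
func Since(t Time) time.Duration { return time.Duration(Now() - t) }

// saveFrequency is an assumed throttle interval in nanoseconds.
const saveFrequency = int64(5 * time.Second)

type peerRecord struct {
	LastActivity atomic.Int64
}

// touch stores now as the last activity unless the previous value is
// less than saveFrequency old. It reports whether a store happened.
func (p *peerRecord) touch(now Time) bool {
	last := p.LastActivity.Load()
	if int64(now)-last < saveFrequency {
		return false
	}
	return p.LastActivity.CompareAndSwap(last, int64(now))
}

func main() {
	rec := &peerRecord{}
	base := Now()
	rec.LastActivity.Store(int64(base))
	fmt.Println(rec.touch(base + 1))                   // inside the throttle window
	fmt.Println(rec.touch(base + Time(saveFrequency))) // window elapsed
	fmt.Println(Since(base) >= 0)
}
```

Because the stored value is an offset from process start rather than a wall-clock instant, it is only meaningful within the same process and should be compared with `Since`, not converted with `time.Unix`.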

@@ -4,6 +4,8 @@ import (
     "net/netip"
     "testing"
     "time"
+
+    "github.com/netbirdio/netbird/monotime"
 )

 func TestActivityRecorder_GetLastActivities(t *testing.T) {

@@ -17,11 +19,7 @@ func TestActivityRecorder_GetLastActivities(t *testing.T) {
         t.Fatalf("Expected activity for peer %s, but got none", peer)
     }

-    if p.IsZero() {
-        t.Fatalf("Expected activity for peer %s, but got zero", peer)
-    }
-
-    if p.Before(time.Now().Add(-2 * time.Minute)) {
+    if monotime.Since(p) > 5*time.Second {
         t.Fatalf("Expected activity for peer %s to be recent, but got %v", peer, p)
     }
 }

@@ -11,6 +11,8 @@ import (
     log "github.com/sirupsen/logrus"
     "golang.zx2c4.com/wireguard/wgctrl"
     "golang.zx2c4.com/wireguard/wgctrl/wgtypes"
+
+    "github.com/netbirdio/netbird/monotime"
 )

 var zeroKey wgtypes.Key

@@ -277,6 +279,6 @@ func (c *KernelConfigurer) GetStats() (map[string]WGStats, error) {
     return stats, nil
 }

-func (c *KernelConfigurer) LastActivities() map[string]time.Time {
+func (c *KernelConfigurer) LastActivities() map[string]monotime.Time {
     return nil
 }

@@ -17,6 +17,7 @@ import (
     "golang.zx2c4.com/wireguard/wgctrl/wgtypes"

     "github.com/netbirdio/netbird/client/iface/bind"
+    "github.com/netbirdio/netbird/monotime"
     nbnet "github.com/netbirdio/netbird/util/net"
 )


@@ -223,7 +224,7 @@ func (c *WGUSPConfigurer) FullStats() (*Stats, error) {
     return parseStatus(c.deviceName, ipcStr)
 }

-func (c *WGUSPConfigurer) LastActivities() map[string]time.Time {
+func (c *WGUSPConfigurer) LastActivities() map[string]monotime.Time {
     return c.activityRecorder.GetLastActivities()
 }


@@ -8,6 +8,7 @@ import (
     "golang.zx2c4.com/wireguard/wgctrl/wgtypes"

     "github.com/netbirdio/netbird/client/iface/configurer"
+    "github.com/netbirdio/netbird/monotime"
 )

 type WGConfigurer interface {

@@ -19,5 +20,5 @@ type WGConfigurer interface {
     Close()
     GetStats() (map[string]configurer.WGStats, error)
     FullStats() (*configurer.Stats, error)
-    LastActivities() map[string]time.Time
+    LastActivities() map[string]monotime.Time
 }

@@ -21,6 +21,7 @@ import (
     "github.com/netbirdio/netbird/client/iface/device"
     "github.com/netbirdio/netbird/client/iface/wgaddr"
     "github.com/netbirdio/netbird/client/iface/wgproxy"
+    "github.com/netbirdio/netbird/monotime"
 )

 const (

@@ -29,6 +30,11 @@ const (
     WgInterfaceDefault = configurer.WgInterfaceDefault
 )

+var (
+    // ErrIfaceNotFound is returned when the WireGuard interface is not found
+    ErrIfaceNotFound = fmt.Errorf("wireguard interface not found")
+)
+
 type wgProxyFactory interface {
     GetProxy() wgproxy.Proxy
     Free() error

@@ -117,6 +123,9 @@ func (w *WGIface) UpdateAddr(newAddr string) error {
 func (w *WGIface) UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAlive time.Duration, endpoint *net.UDPAddr, preSharedKey *wgtypes.Key) error {
     w.mu.Lock()
     defer w.mu.Unlock()
+    if w.configurer == nil {
+        return ErrIfaceNotFound
+    }

     log.Debugf("updating interface %s peer %s, endpoint %s, allowedIPs %v", w.tun.DeviceName(), peerKey, endpoint, allowedIps)
     return w.configurer.UpdatePeer(peerKey, allowedIps, keepAlive, endpoint, preSharedKey)

@@ -126,6 +135,9 @@ func (w *WGIface) UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAliv
 func (w *WGIface) RemovePeer(peerKey string) error {
     w.mu.Lock()
     defer w.mu.Unlock()
+    if w.configurer == nil {
+        return ErrIfaceNotFound
+    }

     log.Debugf("Removing peer %s from interface %s ", peerKey, w.tun.DeviceName())
     return w.configurer.RemovePeer(peerKey)

@@ -135,6 +147,9 @@ func (w *WGIface) RemovePeer(peerKey string) error {
 func (w *WGIface) AddAllowedIP(peerKey string, allowedIP netip.Prefix) error {
     w.mu.Lock()
     defer w.mu.Unlock()
+    if w.configurer == nil {
+        return ErrIfaceNotFound
+    }

     log.Debugf("Adding allowed IP to interface %s and peer %s: allowed IP %s ", w.tun.DeviceName(), peerKey, allowedIP)
     return w.configurer.AddAllowedIP(peerKey, allowedIP)

@@ -144,6 +159,9 @@ func (w *WGIface) AddAllowedIP(peerKey string, allowedIP netip.Prefix) error {
 func (w *WGIface) RemoveAllowedIP(peerKey string, allowedIP netip.Prefix) error {
     w.mu.Lock()
     defer w.mu.Unlock()
+    if w.configurer == nil {
+        return ErrIfaceNotFound
+    }

     log.Debugf("Removing allowed IP from interface %s and peer %s: allowed IP %s ", w.tun.DeviceName(), peerKey, allowedIP)
     return w.configurer.RemoveAllowedIP(peerKey, allowedIP)

@@ -214,18 +232,29 @@ func (w *WGIface) GetWGDevice() *wgdevice.Device {

 // GetStats returns the last handshake time, rx and tx bytes
 func (w *WGIface) GetStats() (map[string]configurer.WGStats, error) {
+    if w.configurer == nil {
+        return nil, ErrIfaceNotFound
+    }
     return w.configurer.GetStats()
 }

-func (w *WGIface) LastActivities() map[string]time.Time {
+func (w *WGIface) LastActivities() map[string]monotime.Time {
     w.mu.Lock()
     defer w.mu.Unlock()

+    if w.configurer == nil {
+        return nil
+    }
+
     return w.configurer.LastActivities()

 }

 func (w *WGIface) FullStats() (*configurer.Stats, error) {
+    if w.configurer == nil {
+        return nil, ErrIfaceNotFound
+    }
+
     return w.configurer.FullStats()
 }

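The `WGIface` hunks above add the same guard to each method: if no configurer has been set, return a sentinel error instead of dereferencing a nil value. A minimal sketch of that guard and of how a caller can detect it follows. `iface`, `configurer`, and `fakeConfigurer` are hypothetical simplifications, and the sentinel is created with `errors.New` here where the diff uses `fmt.Errorf`.

```go
package main

import (
	"errors"
	"fmt"
	"sync"
)

// ErrIfaceNotFound mirrors the sentinel error introduced in the diff.
var ErrIfaceNotFound = errors.New("wireguard interface not found")

// configurer is a reduced, hypothetical version of the WGConfigurer interface.
type configurer interface {
	RemovePeer(peerKey string) error
}

// fakeConfigurer records removed peers so the example has something to call.
type fakeConfigurer struct {
	removed []string
}

func (f *fakeConfigurer) RemovePeer(peerKey string) error {
	f.removed = append(f.removed, peerKey)
	return nil
}

// iface stands in for WGIface; configurer stays nil until the interface
// has been created.
type iface struct {
	mu         sync.Mutex
	configurer configurer
}

// RemovePeer returns ErrIfaceNotFound instead of panicking when the
// configurer has not been set yet.
func (w *iface) RemovePeer(peerKey string) error {
	w.mu.Lock()
	defer w.mu.Unlock()
	if w.configurer == nil {
		return ErrIfaceNotFound
	}
	return w.configurer.RemovePeer(peerKey)
}

func main() {
	w := &iface{}
	err := w.RemovePeer("peerA")
	fmt.Println(errors.Is(err, ErrIfaceNotFound)) // guard triggered

	w.configurer = &fakeConfigurer{}
	fmt.Println(w.RemovePeer("peerA")) // delegated to the configurer
}
```

Note that the nil comparison only works while the interface field has never been assigned a typed nil pointer; assigning `(*fakeConfigurer)(nil)` would make `w.configurer == nil` false.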

@@ -41,9 +41,12 @@ func (t *NetStackTun) Create() (tun.Device, *netstack.Net, error) {
     }
     t.tundev = nsTunDev

-    skipProxy, err := strconv.ParseBool(os.Getenv(EnvSkipProxy))
-    if err != nil {
-        log.Errorf("failed to parse %s: %s", EnvSkipProxy, err)
+    var skipProxy bool
+    if val := os.Getenv(EnvSkipProxy); val != "" {
+        skipProxy, err = strconv.ParseBool(val)
+        if err != nil {
+            log.Errorf("failed to parse %s: %s", EnvSkipProxy, err)
+        }
     }
     if skipProxy {
         return nsTunDev, tunNet, nil
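The change above stops logging a parse error when the environment variable is simply unset, since `strconv.ParseBool("")` returns an error. A minimal sketch of the same idea as a reusable helper follows. `parseOptionalBool` and the variable name `EXAMPLE_SKIP_PROXY` are hypothetical; the value behind `EnvSkipProxy` is not shown in this diff.

```go
package main

import (
	"fmt"
	"os"
	"strconv"
)

// envSkipProxy is a placeholder variable name for this sketch only.
const envSkipProxy = "EXAMPLE_SKIP_PROXY"

// parseOptionalBool treats an empty value as "not set" and returns false
// without an error; any other value is parsed with strconv.ParseBool.
func parseOptionalBool(val string) (bool, error) {
	if val == "" {
		return false, nil
	}
	return strconv.ParseBool(val)
}

func main() {
	skipProxy, err := parseOptionalBool(os.Getenv(envSkipProxy))
	if err != nil {
		fmt.Fprintf(os.Stderr, "failed to parse %s: %s\n", envSkipProxy, err)
	}
	fmt.Println("skip proxy:", skipProxy)
}
```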

@@ -12,6 +12,7 @@ import (
     log "github.com/sirupsen/logrus"

     "github.com/netbirdio/netbird/client/iface/bind"
+    "github.com/netbirdio/netbird/client/iface/wgproxy/listener"
 )

 type ProxyBind struct {

@@ -28,6 +29,17 @@ type ProxyBind struct {
     pausedMu sync.Mutex
     paused bool
     isStarted bool
+
+    closeListener *listener.CloseListener
 }

+func NewProxyBind(bind *bind.ICEBind) *ProxyBind {
+    p := &ProxyBind{
+        Bind:          bind,
+        closeListener: listener.NewCloseListener(),
+    }
+
+    return p
+}
+
 // AddTurnConn adds a new connection to the bind.

@@ -54,6 +66,10 @@ func (p *ProxyBind) EndpointAddr() *net.UDPAddr {
     }
 }

+func (p *ProxyBind) SetDisconnectListener(disconnected func()) {
+    p.closeListener.SetCloseListener(disconnected)
+}
+
 func (p *ProxyBind) Work() {
     if p.remoteConn == nil {
         return

@@ -96,6 +112,9 @@ func (p *ProxyBind) close() error {
     if p.closed {
         return nil
     }
+
+    p.closeListener.SetCloseListener(nil)
+
     p.closed = true

     p.cancel()

@@ -122,6 +141,7 @@ func (p *ProxyBind) proxyToLocal(ctx context.Context) {
             if ctx.Err() != nil {
                 return
             }
+            p.closeListener.Notify()
             log.Errorf("failed to read from remote conn: %s, %s", p.remoteConn.RemoteAddr(), err)
             return
         }

@@ -11,6 +11,8 @@ import (
     "sync"

     log "github.com/sirupsen/logrus"
+
+    "github.com/netbirdio/netbird/client/iface/wgproxy/listener"
 )

 // ProxyWrapper help to keep the remoteConn instance for net.Conn.Close function call

@@ -26,6 +28,15 @@ type ProxyWrapper struct {
     pausedMu sync.Mutex
     paused bool
     isStarted bool
+
+    closeListener *listener.CloseListener
 }

+func NewProxyWrapper(WgeBPFProxy *WGEBPFProxy) *ProxyWrapper {
+    return &ProxyWrapper{
+        WgeBPFProxy:   WgeBPFProxy,
+        closeListener: listener.NewCloseListener(),
+    }
+}
+
 func (p *ProxyWrapper) AddTurnConn(ctx context.Context, endpoint *net.UDPAddr, remoteConn net.Conn) error {

@@ -43,6 +54,10 @@ func (p *ProxyWrapper) EndpointAddr() *net.UDPAddr {
     return p.wgEndpointAddr
 }

+func (p *ProxyWrapper) SetDisconnectListener(disconnected func()) {
+    p.closeListener.SetCloseListener(disconnected)
+}
+
 func (p *ProxyWrapper) Work() {
     if p.remoteConn == nil {
         return

@@ -77,6 +92,8 @@ func (e *ProxyWrapper) CloseConn() error {

     e.cancel()

+    e.closeListener.SetCloseListener(nil)
+
     if err := e.remoteConn.Close(); err != nil && !errors.Is(err, net.ErrClosed) {
         return fmt.Errorf("failed to close remote conn: %w", err)
     }

@@ -117,6 +134,7 @@ func (p *ProxyWrapper) readFromRemote(ctx context.Context, buf []byte) (int, err
         if ctx.Err() != nil {
             return 0, ctx.Err()
         }
+        p.closeListener.Notify()
         if !errors.Is(err, io.EOF) {
             log.Errorf("failed to read from turn conn (endpoint: :%d): %s", p.wgEndpointAddr.Port, err)
         }

@@ -36,9 +36,8 @@ func (w *KernelFactory) GetProxy() Proxy {
         return udpProxy.NewWGUDPProxy(w.wgPort)
     }

-    return &ebpf.ProxyWrapper{
-        WgeBPFProxy: w.ebpfProxy,
-    }
+    return ebpf.NewProxyWrapper(w.ebpfProxy)
+
 }

 func (w *KernelFactory) Free() error {

@@ -20,9 +20,7 @@ func NewUSPFactory(iceBind *bind.ICEBind) *USPFactory {
 }

 func (w *USPFactory) GetProxy() Proxy {
-    return &proxyBind.ProxyBind{
-        Bind: w.bind,
-    }
+    return proxyBind.NewProxyBind(w.bind)
 }

 func (w *USPFactory) Free() error {

19

client/iface/wgproxy/listener/listener.go

Normal file


@@ -0,0 +1,19 @@
+package listener
+
+type CloseListener struct {
+    listener func()
+}
+
+func NewCloseListener() *CloseListener {
+    return &CloseListener{}
+}
+
+func (c *CloseListener) SetCloseListener(listener func()) {
+    c.listener = listener
+}
+
+func (c *CloseListener) Notify() {
+    if c.listener != nil {
+        c.listener()
+    }
+}
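`CloseListener` holds a single optional callback that the proxies invoke when a read from the remote connection fails, and that is cleared before an intentional close. The sketch below shows that lifecycle. It adds a mutex around the callback field, which is an assumption of this example and not part of the file in the diff, on the reasoning that `Notify` may run on a reader goroutine while `SetCloseListener(nil)` runs on the closing goroutine.

```go
package main

import (
	"fmt"
	"sync"
)

// CloseListener is a variant of the type in the diff with a mutex added
// (an assumption for this sketch) so the callback can be swapped while
// another goroutine calls Notify.
type CloseListener struct {
	mu       sync.Mutex
	listener func()
}

// SetCloseListener installs the callback; passing nil clears it.
func (c *CloseListener) SetCloseListener(listener func()) {
	c.mu.Lock()
	defer c.mu.Unlock()
	c.listener = listener
}

// Notify invokes the callback if one is set. The callback is copied
// under the lock and called outside it, so it may call back into the
// listener without deadlocking.
func (c *CloseListener) Notify() {
	c.mu.Lock()
	listener := c.listener
	c.mu.Unlock()
	if listener != nil {
		listener()
	}
}

func main() {
	calls := 0
	l := &CloseListener{}

	l.SetCloseListener(func() { calls++ })
	l.Notify() // unexpected disconnect: callback fires

	l.SetCloseListener(nil)
	l.Notify() // intentional close: callback already cleared

	fmt.Println("calls:", calls) // prints "calls: 1"
}
```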

@@ -12,4 +12,5 @@ type Proxy interface {
     Work() // Work start or resume the proxy
     Pause() // Pause to forward the packages from remote connection to WireGuard. The opposite way still works.
     CloseConn() error
+    SetDisconnectListener(disconnected func())
 }

@@ -98,9 +98,7 @@ func TestProxyCloseByRemoteConn(t *testing.T) {
             t.Errorf("failed to free ebpf proxy: %s", err)
         }
     }()
-    proxyWrapper := &ebpf.ProxyWrapper{
-        WgeBPFProxy: ebpfProxy,
-    }
+    proxyWrapper := ebpf.NewProxyWrapper(ebpfProxy)

     tests = append(tests, struct {
         name string

@@ -12,6 +12,7 @@ import (
     log "github.com/sirupsen/logrus"

     cerrors "github.com/netbirdio/netbird/client/errors"
+    "github.com/netbirdio/netbird/client/iface/wgproxy/listener"
 )

 // WGUDPProxy proxies

@@ -28,6 +29,8 @@ type WGUDPProxy struct {
     pausedMu sync.Mutex
     paused bool
     isStarted bool
+
+    closeListener *listener.CloseListener
 }

 // NewWGUDPProxy instantiate a UDP based WireGuard proxy. This is not a thread safe implementation

@@ -35,6 +38,7 @@ func NewWGUDPProxy(wgPort int) *WGUDPProxy {
     log.Debugf("Initializing new user space proxy with port %d", wgPort)
     p := &WGUDPProxy{
         localWGListenPort: wgPort,
+        closeListener:     listener.NewCloseListener(),
     }
     return p
 }

@@ -67,6 +71,10 @@ func (p *WGUDPProxy) EndpointAddr() *net.UDPAddr {
     return endpointUdpAddr
 }

+func (p *WGUDPProxy) SetDisconnectListener(disconnected func()) {
+    p.closeListener.SetCloseListener(disconnected)
+}
+
 // Work starts the proxy or resumes it if it was paused
 func (p *WGUDPProxy) Work() {
     if p.remoteConn == nil {

@@ -111,6 +119,8 @@ func (p *WGUDPProxy) close() error {
     if p.closed {
         return nil
     }
+
+    p.closeListener.SetCloseListener(nil)
     p.closed = true

     p.cancel()

@@ -141,6 +151,7 @@ func (p *WGUDPProxy) proxyToRemote(ctx context.Context) {
             if ctx.Err() != nil {
                 return
             }
+            p.closeListener.Notify()
             log.Debugf("failed to read from wg interface conn: %s", err)
             return
         }

@@ -226,7 +226,6 @@ func (e *ConnMgr) ActivatePeer(ctx context.Context, conn *peer.Conn) {
     }

     if found := e.lazyConnMgr.ActivatePeer(conn.GetKey()); found {
-        conn.Log.Infof("activated peer from inactive state")
         if err := conn.Open(ctx); err != nil {
             conn.Log.Errorf("failed to open connection: %v", err)
         }

@@ -167,6 +167,7 @@ type BundleGenerator struct {
     anonymize bool
     clientStatus string
     includeSystemInfo bool
+    logFileCount uint32

     archive *zip.Writer
 }

@@ -175,6 +176,7 @@ type BundleConfig struct {
     Anonymize bool
     ClientStatus string
     IncludeSystemInfo bool
+    LogFileCount uint32
 }

 type GeneratorDependencies struct {

@@ -185,6 +187,12 @@ type GeneratorDependencies struct {
 }

 func NewBundleGenerator(deps GeneratorDependencies, cfg BundleConfig) *BundleGenerator {
+    // Default to 1 log file for backward compatibility when 0 is provided
+    logFileCount := cfg.LogFileCount
+    if logFileCount == 0 {
+        logFileCount = 1
+    }
+
     return &BundleGenerator{
         anonymizer: anonymize.NewAnonymizer(anonymize.DefaultAddresses()),


@@ -196,6 +204,7 @@ func NewBundleGenerator(deps GeneratorDependencies, cfg BundleConfig) *BundleGen
         anonymize: cfg.Anonymize,
         clientStatus: cfg.ClientStatus,
         includeSystemInfo: cfg.IncludeSystemInfo,
+        logFileCount: logFileCount,
     }
 }


@@ -561,32 +570,8 @@ func (g *BundleGenerator) addLogfile() error {
         return fmt.Errorf("add client log file to zip: %w", err)
     }

-    // add latest rotated log file
-    pattern := filepath.Join(logDir, "client-*.log.gz")
-    files, err := filepath.Glob(pattern)
-    if err != nil {
-        log.Warnf("failed to glob rotated logs: %v", err)
-    } else if len(files) > 0 {
-        // pick the file with the latest ModTime
-        sort.Slice(files, func(i, j int) bool {
-            fi, err := os.Stat(files[i])
-            if err != nil {
-                log.Warnf("failed to stat rotated log %s: %v", files[i], err)
-                return false
-            }
-            fj, err := os.Stat(files[j])
-            if err != nil {
-                log.Warnf("failed to stat rotated log %s: %v", files[j], err)
-                return false
-            }
-            return fi.ModTime().Before(fj.ModTime())
-        })
-        latest := files[len(files)-1]
-        name := filepath.Base(latest)
-        if err := g.addSingleLogFileGz(latest, name); err != nil {
-            log.Warnf("failed to add rotated log %s: %v", name, err)
-        }
-    }
+    // add rotated log files based on logFileCount
+    g.addRotatedLogFiles(logDir)

     stdErrLogPath := filepath.Join(logDir, errorLogFile)
     stdoutLogPath := filepath.Join(logDir, stdoutLogFile)

@@ -670,6 +655,52 @@ func (g *BundleGenerator) addSingleLogFileGz(logPath, targetName string) error {
     return nil
 }

+// addRotatedLogFiles adds rotated log files to the bundle based on logFileCount
+func (g *BundleGenerator) addRotatedLogFiles(logDir string) {
+    if g.logFileCount == 0 {
+        return
+    }
+
+    pattern := filepath.Join(logDir, "client-*.log.gz")
+    files, err := filepath.Glob(pattern)
+    if err != nil {
+        log.Warnf("failed to glob rotated logs: %v", err)
+        return
+    }
+
+    if len(files) == 0 {
+        return
+    }
+
+    // sort files by modification time (newest first)
+    sort.Slice(files, func(i, j int) bool {
+        fi, err := os.Stat(files[i])
+        if err != nil {
+            log.Warnf("failed to stat rotated log %s: %v", files[i], err)
+            return false
+        }
+        fj, err := os.Stat(files[j])
+        if err != nil {
+            log.Warnf("failed to stat rotated log %s: %v", files[j], err)
+            return false
+        }
+        return fi.ModTime().After(fj.ModTime())
+    })
+
+    // include up to logFileCount rotated files
+    maxFiles := int(g.logFileCount)
+    if maxFiles > len(files) {
+        maxFiles = len(files)
+    }
+
+    for i := 0; i < maxFiles; i++ {
+        name := filepath.Base(files[i])
+        if err := g.addSingleLogFileGz(files[i], name); err != nil {
+            log.Warnf("failed to add rotated log %s: %v", name, err)
+        }
+    }
+}
+
 func (g *BundleGenerator) addFileToZip(reader io.Reader, filename string) error {
     header := &zip.FileHeader{
         Name: filename,

@@ -52,6 +52,7 @@ import (
 	"github.com/netbirdio/netbird/management/server/store"
 	"github.com/netbirdio/netbird/management/server/telemetry"
 	"github.com/netbirdio/netbird/management/server/types"
+	"github.com/netbirdio/netbird/monotime"
 	relayClient "github.com/netbirdio/netbird/relay/client"
 	"github.com/netbirdio/netbird/route"
 	signal "github.com/netbirdio/netbird/signal/client"

@@ -96,7 +97,7 @@ type MockWGIface struct {
 	GetInterfaceGUIDStringFunc func() (string, error)
 	GetProxyFunc func() wgproxy.Proxy
 	GetNetFunc func() *netstack.Net
-	LastActivitiesFunc func() map[string]time.Time
+	LastActivitiesFunc func() map[string]monotime.Time
 }

 func (m *MockWGIface) FullStats() (*configurer.Stats, error) {

@@ -187,7 +188,7 @@ func (m *MockWGIface) GetNet() *netstack.Net {
 	return m.GetNetFunc()
 }

-func (m *MockWGIface) LastActivities() map[string]time.Time {
+func (m *MockWGIface) LastActivities() map[string]monotime.Time {
 	if m.LastActivitiesFunc != nil {
 		return m.LastActivitiesFunc()
 	}

@@ -14,6 +14,7 @@ import (
 	"github.com/netbirdio/netbird/client/iface/device"
 	"github.com/netbirdio/netbird/client/iface/wgaddr"
 	"github.com/netbirdio/netbird/client/iface/wgproxy"
+	"github.com/netbirdio/netbird/monotime"
 )

 type wgIfaceBase interface {

@@ -38,5 +39,5 @@ type wgIfaceBase interface {
 	GetStats() (map[string]configurer.WGStats, error)
 	GetNet() *netstack.Net
 	FullStats() (*configurer.Stats, error)
-	LastActivities() map[string]time.Time
+	LastActivities() map[string]monotime.Time
 }

@@ -48,7 +48,7 @@ func (d *Listener) ReadPackets() {
 		n, remoteAddr, err := d.conn.ReadFromUDP(make([]byte, 1))
 		if err != nil {
 			if d.isClosed.Load() {
-				d.peerCfg.Log.Debugf("exit from activity listener")
+				d.peerCfg.Log.Infof("exit from activity listener")
 			} else {
 				d.peerCfg.Log.Errorf("failed to read from activity listener: %s", err)
 			}

@@ -59,9 +59,11 @@ func (d *Listener) ReadPackets() {
 			d.peerCfg.Log.Warnf("received %d bytes from %s, too short", n, remoteAddr)
 			continue
 		}
+		d.peerCfg.Log.Infof("activity detected")
 		break
 	}

+	d.peerCfg.Log.Debugf("removing lazy endpoint: %s", d.endpoint.String())
 	if err := d.removeEndpoint(); err != nil {
 		d.peerCfg.Log.Errorf("failed to remove endpoint: %s", err)
 	}

@@ -71,7 +73,7 @@ func (d *Listener) ReadPackets() {
 }

 func (d *Listener) Close() {
-	d.peerCfg.Log.Infof("closing listener: %s", d.conn.LocalAddr().String())
+	d.peerCfg.Log.Infof("closing activity listener: %s", d.conn.LocalAddr().String())
 	d.isClosed.Store(true)

 	if err := d.conn.Close(); err != nil {

@@ -81,7 +83,6 @@ func (d *Listener) Close() {
 }

 func (d *Listener) removeEndpoint() error {
-	d.peerCfg.Log.Debugf("removing lazy endpoint: %s", d.endpoint.String())
 	return d.wgIface.RemovePeer(d.peerCfg.PublicKey)
 }

@@ -8,6 +8,7 @@ import (
 	log "github.com/sirupsen/logrus"

 	"github.com/netbirdio/netbird/client/internal/lazyconn"
+	"github.com/netbirdio/netbird/monotime"
 )

 const (

@@ -18,7 +19,7 @@ const (
 )

 type WgInterface interface {
-	LastActivities() map[string]time.Time
+	LastActivities() map[string]monotime.Time
 }

 type Manager struct {

@@ -124,6 +125,7 @@ func (m *Manager) checkStats() (map[string]struct{}, error) {

 	idlePeers := make(map[string]struct{})

+	checkTime := time.Now()
 	for peerID, peerCfg := range m.interestedPeers {
 		lastActive, ok := lastActivities[peerID]
 		if !ok {

@@ -132,8 +134,9 @@ func (m *Manager) checkStats() (map[string]struct{}, error) {
 			continue
 		}

-		if time.Since(lastActive) > m.inactivityThreshold {
-			peerCfg.Log.Infof("peer is inactive since: %v", lastActive)
+		since := monotime.Since(lastActive)
+		if since > m.inactivityThreshold {
+			peerCfg.Log.Infof("peer is inactive since time: %s", checkTime.Add(-since).String())
 			idlePeers[peerID] = struct{}{}
 		}
 	}

@@ -9,13 +9,14 @@ import (
 	"github.com/stretchr/testify/assert"

 	"github.com/netbirdio/netbird/client/internal/lazyconn"
+	"github.com/netbirdio/netbird/monotime"
 )

 type mockWgInterface struct {
-	lastActivities map[string]time.Time
+	lastActivities map[string]monotime.Time
 }

-func (m *mockWgInterface) LastActivities() map[string]time.Time {
+func (m *mockWgInterface) LastActivities() map[string]monotime.Time {
 	return m.lastActivities
 }

@@ -23,8 +24,8 @@ func TestPeerTriggersInactivity(t *testing.T) {
 	peerID := "peer1"

 	wgMock := &mockWgInterface{
-		lastActivities: map[string]time.Time{
-			peerID: time.Now().Add(-20 * time.Minute),
+		lastActivities: map[string]monotime.Time{
+			peerID: monotime.Time(int64(monotime.Now()) - int64(20*time.Minute)),
 		},
 	}

@@ -64,8 +65,8 @@ func TestPeerTriggersActivity(t *testing.T) {
 	peerID := "peer1"

 	wgMock := &mockWgInterface{
-		lastActivities: map[string]time.Time{
-			peerID: time.Now().Add(-5 * time.Minute),
+		lastActivities: map[string]monotime.Time{
+			peerID: monotime.Time(int64(monotime.Now()) - int64(5*time.Minute)),
 		},
 	}

@@ -258,12 +258,13 @@ func (m *Manager) ActivatePeer(peerID string) (found bool) {
 		return false
 	}

+	cfg.Log.Infof("activate peer from inactive state by remote signal message")

 	if !m.activateSinglePeer(cfg, mp) {
 		return false
 	}

 	m.activateHAGroupPeers(cfg)

 	return true
 }

@@ -571,12 +572,12 @@ func (m *Manager) onPeerInactivityTimedOut(peerIDs map[string]struct{}) {
 		// this is blocking operation, potentially can be optimized
 		m.peerStore.PeerConnIdle(mp.peerCfg.PublicKey)

-		mp.peerCfg.Log.Infof("start activity monitor")
-
 		mp.expectedWatcher = watcherActivity

 		m.inactivityManager.RemovePeer(mp.peerCfg.PublicKey)

+		mp.peerCfg.Log.Infof("start activity monitor")
+
 		if err := m.activityManager.MonitorPeerActivity(*mp.peerCfg); err != nil {
 			mp.peerCfg.Log.Errorf("failed to create activity monitor: %v", err)
 			continue

@@ -6,11 +6,13 @@ import (
 	"time"

 	"golang.zx2c4.com/wireguard/wgctrl/wgtypes"
+
+	"github.com/netbirdio/netbird/monotime"
 )

 type WGIface interface {
 	RemovePeer(peerKey string) error
 	UpdatePeer(peerKey string, allowedIps []netip.Prefix, keepAlive time.Duration, endpoint *net.UDPAddr, preSharedKey *wgtypes.Key) error
 	IsUserspaceBind() bool
-	LastActivities() map[string]time.Time
+	LastActivities() map[string]monotime.Time
 }

@@ -167,7 +167,7 @@ func (conn *Conn) Open(engineCtx context.Context) error {

 	conn.ctx, conn.ctxCancel = context.WithCancel(engineCtx)

-	conn.workerRelay = NewWorkerRelay(conn.Log, isController(conn.config), conn.config, conn, conn.relayManager, conn.dumpState)
+	conn.workerRelay = NewWorkerRelay(conn.ctx, conn.Log, isController(conn.config), conn.config, conn, conn.relayManager, conn.dumpState)

 	relayIsSupportedLocally := conn.workerRelay.RelayIsSupportedLocally()
 	workerICE, err := NewWorkerICE(conn.ctx, conn.Log, conn.config, conn, conn.signaler, conn.iFaceDiscover, conn.statusRecorder, relayIsSupportedLocally)

@@ -489,6 +489,8 @@ func (conn *Conn) onRelayConnectionIsReady(rci RelayConnInfo) {
 		conn.Log.Errorf("failed to add relayed net.Conn to local proxy: %v", err)
 		return
 	}
+	wgProxy.SetDisconnectListener(conn.onRelayDisconnected)
+
 	conn.dumpState.NewLocalProxy()

 	conn.Log.Infof("created new wgProxy for relay connection: %s", wgProxy.EndpointAddr().String())

@@ -19,6 +19,7 @@ type RelayConnInfo struct {
 }

 type WorkerRelay struct {
+	peerCtx context.Context
 	log *log.Entry
 	isController bool
 	config ConnConfig

@@ -33,8 +34,9 @@ type WorkerRelay struct {
 	wgWatcher *WGWatcher
 }

-func NewWorkerRelay(log *log.Entry, ctrl bool, config ConnConfig, conn *Conn, relayManager relayClient.ManagerService, stateDump *stateDump) *WorkerRelay {
+func NewWorkerRelay(ctx context.Context, log *log.Entry, ctrl bool, config ConnConfig, conn *Conn, relayManager relayClient.ManagerService, stateDump *stateDump) *WorkerRelay {
 	r := &WorkerRelay{
+		peerCtx: ctx,
 		log: log,
 		isController: ctrl,
 		config: config,

@@ -62,7 +64,7 @@ func (w *WorkerRelay) OnNewOffer(remoteOfferAnswer *OfferAnswer) {

 	srv := w.preferredRelayServer(currentRelayAddress, remoteOfferAnswer.RelaySrvAddress)

-	relayedConn, err := w.relayManager.OpenConn(srv, w.config.Key)
+	relayedConn, err := w.relayManager.OpenConn(w.peerCtx, srv, w.config.Key)
 	if err != nil {
 		if errors.Is(err, relayClient.ErrConnAlreadyExists) {
 			w.log.Debugf("handled offer by reusing existing relay connection")

@@ -2290,6 +2290,7 @@ type DebugBundleRequest struct {
 	Status string `protobuf:"bytes,2,opt,name=status,proto3" json:"status,omitempty"`
 	SystemInfo bool `protobuf:"varint,3,opt,name=systemInfo,proto3" json:"systemInfo,omitempty"`
 	UploadURL string `protobuf:"bytes,4,opt,name=uploadURL,proto3" json:"uploadURL,omitempty"`
+	LogFileCount uint32 `protobuf:"varint,5,opt,name=logFileCount,proto3" json:"logFileCount,omitempty"`
 	unknownFields protoimpl.UnknownFields
 	sizeCache protoimpl.SizeCache
 }

@@ -2352,6 +2353,13 @@ func (x *DebugBundleRequest) GetUploadURL() string {
 	return ""
 }

+func (x *DebugBundleRequest) GetLogFileCount() uint32 {
+	if x != nil {
+		return x.LogFileCount
+	}
+	return 0
+}
+
 type DebugBundleResponse struct {
 	state protoimpl.MessageState `protogen:"open.v1"`
 	Path string `protobuf:"bytes,1,opt,name=path,proto3" json:"path,omitempty"`
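
Note on the hunk above: the generated getter checks the receiver for `nil`, so callers can read `logFileCount` from a missing request and get the zero value, which `addRotatedLogFiles` treats as "add no rotated logs". A small sketch of that behaviour, using a trimmed stand-in struct with only the one field (the real generated message has more fields):

```go
package main

import "fmt"

// DebugBundleRequest is a trimmed stand-in for the generated message;
// only the field discussed here is kept.
type DebugBundleRequest struct {
	LogFileCount uint32
}

// GetLogFileCount mirrors the generated getter: it is safe on a nil receiver.
func (x *DebugBundleRequest) GetLogFileCount() uint32 {
	if x != nil {
		return x.LogFileCount
	}
	return 0
}

func main() {
	var missing *DebugBundleRequest
	fmt.Println(missing.GetLogFileCount()) // 0

	req := &DebugBundleRequest{LogFileCount: 3}
	fmt.Println(req.GetLogFileCount()) // 3
}
```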

@@ -3746,14 +3754,15 @@ const file_daemon_proto_rawDesc = "" +
 	"\x12translatedHostname\x18\x04 \x01(\tR\x12translatedHostname\x128\n" +
 	"\x0etranslatedPort\x18\x05 \x01(\v2\x10.daemon.PortInfoR\x0etranslatedPort\"G\n" +
 	"\x17ForwardingRulesResponse\x12,\n" +
-	"\x05rules\x18\x01 \x03(\v2\x16.daemon.ForwardingRuleR\x05rules\"\x88\x01\n" +
+	"\x05rules\x18\x01 \x03(\v2\x16.daemon.ForwardingRuleR\x05rules\"\xac\x01\n" +
 	"\x12DebugBundleRequest\x12\x1c\n" +
 	"\tanonymize\x18\x01 \x01(\bR\tanonymize\x12\x16\n" +
 	"\x06status\x18\x02 \x01(\tR\x06status\x12\x1e\n" +
 	"\n" +
 	"systemInfo\x18\x03 \x01(\bR\n" +
 	"systemInfo\x12\x1c\n" +
-	"\tuploadURL\x18\x04 \x01(\tR\tuploadURL\"}\n" +
+	"\tuploadURL\x18\x04 \x01(\tR\tuploadURL\x12\"\n" +
+	"\flogFileCount\x18\x05 \x01(\rR\flogFileCount\"}\n" +
 	"\x13DebugBundleResponse\x12\x12\n" +
 	"\x04path\x18\x01 \x01(\tR\x04path\x12 \n" +
 	"\vuploadedKey\x18\x02 \x01(\tR\vuploadedKey\x120\n" +

@@ -356,6 +356,7 @@ message DebugBundleRequest {
   string status = 2;
   bool systemInfo = 3;
   string uploadURL = 4;
+  uint32 logFileCount = 5;
 }

 message DebugBundleResponse {

@@ -1,8 +1,4 @@
 // Code generated by protoc-gen-go-grpc. DO NOT EDIT.
-// versions:
-// - protoc-gen-go-grpc v1.5.1
-// - protoc v5.29.3
-// source: daemon.proto

 package proto

@@ -15,31 +11,8 @@ import (

 // This is a compile-time assertion to ensure that this generated file
 // is compatible with the grpc package it is being compiled against.
-// Requires gRPC-Go v1.64.0 or later.
-const _ = grpc.SupportPackageIsVersion9
-
-const (
-	DaemonService_Login_FullMethodName = "/daemon.DaemonService/Login"
-	DaemonService_WaitSSOLogin_FullMethodName = "/daemon.DaemonService/WaitSSOLogin"
-	DaemonService_Up_FullMethodName = "/daemon.DaemonService/Up"
-	DaemonService_Status_FullMethodName = "/daemon.DaemonService/Status"
-	DaemonService_Down_FullMethodName = "/daemon.DaemonService/Down"
-	DaemonService_GetConfig_FullMethodName = "/daemon.DaemonService/GetConfig"
-	DaemonService_ListNetworks_FullMethodName = "/daemon.DaemonService/ListNetworks"
-	DaemonService_SelectNetworks_FullMethodName = "/daemon.DaemonService/SelectNetworks"
-	DaemonService_DeselectNetworks_FullMethodName = "/daemon.DaemonService/DeselectNetworks"
-	DaemonService_ForwardingRules_FullMethodName = "/daemon.DaemonService/ForwardingRules"
-	DaemonService_DebugBundle_FullMethodName = "/daemon.DaemonService/DebugBundle"
-	DaemonService_GetLogLevel_FullMethodName = "/daemon.DaemonService/GetLogLevel"
-	DaemonService_SetLogLevel_FullMethodName = "/daemon.DaemonService/SetLogLevel"
-	DaemonService_ListStates_FullMethodName = "/daemon.DaemonService/ListStates"
-	DaemonService_CleanState_FullMethodName = "/daemon.DaemonService/CleanState"
-	DaemonService_DeleteState_FullMethodName = "/daemon.DaemonService/DeleteState"
-	DaemonService_SetNetworkMapPersistence_FullMethodName = "/daemon.DaemonService/SetNetworkMapPersistence"
-	DaemonService_TracePacket_FullMethodName = "/daemon.DaemonService/TracePacket"
-	DaemonService_SubscribeEvents_FullMethodName = "/daemon.DaemonService/SubscribeEvents"
-	DaemonService_GetEvents_FullMethodName = "/daemon.DaemonService/GetEvents"
-)
+// Requires gRPC-Go v1.32.0 or later.
+const _ = grpc.SupportPackageIsVersion7

 // DaemonServiceClient is the client API for DaemonService service.
 //

@@ -80,7 +53,7 @@ type DaemonServiceClient interface {
 	// SetNetworkMapPersistence enables or disables network map persistence
 	SetNetworkMapPersistence(ctx context.Context, in *SetNetworkMapPersistenceRequest, opts ...grpc.CallOption) (*SetNetworkMapPersistenceResponse, error)
 	TracePacket(ctx context.Context, in *TracePacketRequest, opts ...grpc.CallOption) (*TracePacketResponse, error)
-	SubscribeEvents(ctx context.Context, in *SubscribeRequest, opts ...grpc.CallOption) (grpc.ServerStreamingClient[SystemEvent], error)
+	SubscribeEvents(ctx context.Context, in *SubscribeRequest, opts ...grpc.CallOption) (DaemonService_SubscribeEventsClient, error)
 	GetEvents(ctx context.Context, in *GetEventsRequest, opts ...grpc.CallOption) (*GetEventsResponse, error)
 }

@@ -93,9 +66,8 @@ func NewDaemonServiceClient(cc grpc.ClientConnInterface) DaemonServiceClient {
 }

 func (c *daemonServiceClient) Login(ctx context.Context, in *LoginRequest, opts ...grpc.CallOption) (*LoginResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(LoginResponse)
-	err := c.cc.Invoke(ctx, DaemonService_Login_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/Login", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -103,9 +75,8 @@ func (c *daemonServiceClient) Login(ctx context.Context, in *LoginRequest, opts
 }

 func (c *daemonServiceClient) WaitSSOLogin(ctx context.Context, in *WaitSSOLoginRequest, opts ...grpc.CallOption) (*WaitSSOLoginResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(WaitSSOLoginResponse)
-	err := c.cc.Invoke(ctx, DaemonService_WaitSSOLogin_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/WaitSSOLogin", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -113,9 +84,8 @@ func (c *daemonServiceClient) WaitSSOLogin(ctx context.Context, in *WaitSSOLogin
 }

 func (c *daemonServiceClient) Up(ctx context.Context, in *UpRequest, opts ...grpc.CallOption) (*UpResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(UpResponse)
-	err := c.cc.Invoke(ctx, DaemonService_Up_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/Up", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -123,9 +93,8 @@ func (c *daemonServiceClient) Up(ctx context.Context, in *UpRequest, opts ...grp
 }

 func (c *daemonServiceClient) Status(ctx context.Context, in *StatusRequest, opts ...grpc.CallOption) (*StatusResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(StatusResponse)
-	err := c.cc.Invoke(ctx, DaemonService_Status_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/Status", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -133,9 +102,8 @@ func (c *daemonServiceClient) Status(ctx context.Context, in *StatusRequest, opt
 }

 func (c *daemonServiceClient) Down(ctx context.Context, in *DownRequest, opts ...grpc.CallOption) (*DownResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(DownResponse)
-	err := c.cc.Invoke(ctx, DaemonService_Down_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/Down", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -143,9 +111,8 @@ func (c *daemonServiceClient) Down(ctx context.Context, in *DownRequest, opts ..
 }

 func (c *daemonServiceClient) GetConfig(ctx context.Context, in *GetConfigRequest, opts ...grpc.CallOption) (*GetConfigResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(GetConfigResponse)
-	err := c.cc.Invoke(ctx, DaemonService_GetConfig_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/GetConfig", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -153,9 +120,8 @@ func (c *daemonServiceClient) GetConfig(ctx context.Context, in *GetConfigReques
 }

 func (c *daemonServiceClient) ListNetworks(ctx context.Context, in *ListNetworksRequest, opts ...grpc.CallOption) (*ListNetworksResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(ListNetworksResponse)
-	err := c.cc.Invoke(ctx, DaemonService_ListNetworks_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/ListNetworks", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -163,9 +129,8 @@ func (c *daemonServiceClient) ListNetworks(ctx context.Context, in *ListNetworks
 }

 func (c *daemonServiceClient) SelectNetworks(ctx context.Context, in *SelectNetworksRequest, opts ...grpc.CallOption) (*SelectNetworksResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(SelectNetworksResponse)
-	err := c.cc.Invoke(ctx, DaemonService_SelectNetworks_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/SelectNetworks", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -173,9 +138,8 @@ func (c *daemonServiceClient) SelectNetworks(ctx context.Context, in *SelectNetw
 }

 func (c *daemonServiceClient) DeselectNetworks(ctx context.Context, in *SelectNetworksRequest, opts ...grpc.CallOption) (*SelectNetworksResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(SelectNetworksResponse)
-	err := c.cc.Invoke(ctx, DaemonService_DeselectNetworks_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/DeselectNetworks", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -183,9 +147,8 @@ func (c *daemonServiceClient) DeselectNetworks(ctx context.Context, in *SelectNe
 }

 func (c *daemonServiceClient) ForwardingRules(ctx context.Context, in *EmptyRequest, opts ...grpc.CallOption) (*ForwardingRulesResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(ForwardingRulesResponse)
-	err := c.cc.Invoke(ctx, DaemonService_ForwardingRules_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/ForwardingRules", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -193,9 +156,8 @@ func (c *daemonServiceClient) ForwardingRules(ctx context.Context, in *EmptyRequ
 }

 func (c *daemonServiceClient) DebugBundle(ctx context.Context, in *DebugBundleRequest, opts ...grpc.CallOption) (*DebugBundleResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(DebugBundleResponse)
-	err := c.cc.Invoke(ctx, DaemonService_DebugBundle_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/DebugBundle", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -203,9 +165,8 @@ func (c *daemonServiceClient) DebugBundle(ctx context.Context, in *DebugBundleRe
 }

 func (c *daemonServiceClient) GetLogLevel(ctx context.Context, in *GetLogLevelRequest, opts ...grpc.CallOption) (*GetLogLevelResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(GetLogLevelResponse)
-	err := c.cc.Invoke(ctx, DaemonService_GetLogLevel_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/GetLogLevel", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -213,9 +174,8 @@ func (c *daemonServiceClient) GetLogLevel(ctx context.Context, in *GetLogLevelRe
 }

 func (c *daemonServiceClient) SetLogLevel(ctx context.Context, in *SetLogLevelRequest, opts ...grpc.CallOption) (*SetLogLevelResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(SetLogLevelResponse)
-	err := c.cc.Invoke(ctx, DaemonService_SetLogLevel_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/SetLogLevel", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -223,9 +183,8 @@ func (c *daemonServiceClient) SetLogLevel(ctx context.Context, in *SetLogLevelRe
 }

 func (c *daemonServiceClient) ListStates(ctx context.Context, in *ListStatesRequest, opts ...grpc.CallOption) (*ListStatesResponse, error) {
-	cOpts := append([]grpc.CallOption{grpc.StaticMethod()}, opts...)
 	out := new(ListStatesResponse)
-	err := c.cc.Invoke(ctx, DaemonService_ListStates_FullMethodName, in, out, cOpts...)
+	err := c.cc.Invoke(ctx, "/daemon.DaemonService/ListStates", in, out, opts...)
 	if err != nil {
 		return nil, err
 	}

@@ -233,9 +192,8 @@ func (c *daemonServiceClient) ListStates(ctx context.Context, in *ListStatesRequ
 }