mirror of https://github.com/netbirdio/netbird.git

synced 2026-04-19 08:46:38 +00:00

Compare commits

94 Commits

add-ns-pun ... nb-interfa

| Author | SHA1 | Date | |
|---|---|---|---|
| | 417fa6e833 | | |
| | a7af15c4fc | | |
| | d6ed9c037e | | |
| | 40fdeda838 | | |
| | f6e9d755e4 | | |
| | 08fd460867 | | |
| | 4f74509d55 | | |
| | 58185ced16 | | |
| | e67f44f47c | | |
| | b524f486e2 | | |
| | 0dab03252c | | |
| | e49bcc343d | | |
| | 3e6eede152 | | |
| | a76c8eafb4 | | |
| | 2b9f331980 | | |
| | a7ea881900 | | |
| | 8632dd15f1 | | |
| | e3b40ba694 | | |
| | e59d75d56a | | |
| | 408f423adc | | |
| | f17dd3619c | | |
| | 969f1ed59a | | |
| | 768ba24fda | | |
| | 8942c40fde | | |
| | fbb1b55beb | | |
| | 77ec32dd6f | | |
| | 8c09a55057 | | |
| | f603ddf35e | | |
| | 996b8c600c | | |
| | c4ed11d447 | | |
| | 9afbecb7ac | | |
| | 2c81cf2c1e | | |
| | 551cb4e467 | | |
| | 57961afe95 | | |
| | 22678bce7f | | |
| | 6c633497bc | | |
| | 6922826919 | | |
| | 56a1a75e3f | | |
| | d9402168ad | | |
| | dbdef04b9e | | |
| | 29cbfe8467 | | |
| | 6ce8643368 | | |
| | 07d1ad35fc | | |
| | ef6cd36f1a | | |
| | c1c71b6d39 | | |
| | 0480507a10 | | |
| | 34ac4e4b5a | | |
| | 52ff9d9602 | | |
| | 1b73fae46e | | |
| | d897365abc | | |
| | f37aa2cc9d | | |
| | 5343bee7b2 | | |
| | 870e29db63 | | |
| | 08e9b05d51 | | |
| | 3581648071 | | |
| | 2a51609436 | | |
| | 83457f8b99 | | |
| | b45284f086 | | |
| | e9016aecea | | |
| | 23b5d45b68 | | |
| | 0e5dc9d412 | | |
| | 91f7ee6a3c | | |
| | 7c6b85b4cb | | |
| | 08c9107c61 | | |
| | 81d83245e1 | | |
| | af2b427751 | | |
| | f61ebdb3bc | | |
| | de7384e8ea | | |
| | 75c1be69cf | | |
| | 424ae28de9 | | |
| | d4a800edd5 | | |
| | dd9917f1a8 | | |
| | 8df8c1012f | | |
| | bfa5c21d2d | | |
| | b1247a14ba | | |
| | f595057a0b | | |
| | 089d442fb2 | | |
| | 04a3765391 | | |
| | d24d8328f9 | | |
| | 4f63996ae8 | | |
| | bdf2994e97 | | |
| | 6d654acbad | | |
| | 3e43298471 | | |
| | 0ad2590974 | | |
| | 9d11257b1a | | |
| | 4ee1635baa | | |
| | 75feb0da8b | | |
| | 87376afd13 | | |
| | b76d9e8e9e | | |
| | e71383dcb9 | | |
| | e002a2e6e8 | | |
| | 6127a01196 | | |
| | de27d6df36 | | |
| | 3c535cdd2b | | |

4

.github/workflows/git-town.yml

vendored


@@ -16,6 +16,6 @@ jobs:

 steps:
 - uses: actions/checkout@v4
-- uses: git-town/action@v1
+- uses: git-town/action@v1.2.1
 with:
 skip-single-stacks: true

@@ -43,7 +43,7 @@ jobs:
 - name: gomobile init
 run: gomobile init
 - name: build android netbird lib
-run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
+run: PATH=$PATH:$(go env GOPATH) gomobile bind -o $GITHUB_WORKSPACE/netbird.aar -javapkg=io.netbird.gomobile -ldflags="-checklinkname=0 -X golang.zx2c4.com/wireguard/ipc.socketDirectory=/data/data/io.netbird.client/cache/wireguard -X github.com/netbirdio/netbird/version.version=buildtest" $GITHUB_WORKSPACE/client/android
 env:
 CGO_ENABLED: 0
 ANDROID_NDK_HOME: /usr/local/lib/android/sdk/ndk/23.1.7779620

23

.github/workflows/release.yml

vendored


@@ -9,7 +9,7 @@ on:
 pull_request:

 env:
-SIGN_PIPE_VER: "v0.0.18"
+SIGN_PIPE_VER: "v0.0.21"
 GORELEASER_VER: "v2.3.2"
 PRODUCT_NAME: "NetBird"
 COPYRIGHT: "NetBird GmbH"

@@ -65,6 +65,13 @@ jobs:
 with:
 username: ${{ secrets.DOCKER_USER }}
 password: ${{ secrets.DOCKER_TOKEN }}
+- name: Log in to the GitHub container registry
+if: github.event_name != 'pull_request'
+uses: docker/login-action@v3
+with:
+registry: ghcr.io
+username: ${{ github.actor }}
+password: ${{ secrets.CI_DOCKER_PUSH_GITHUB_TOKEN }}
 - name: Install OS build dependencies
 run: sudo apt update && sudo apt install -y -q gcc-arm-linux-gnueabihf gcc-aarch64-linux-gnu


@@ -224,3 +231,17 @@ jobs:
 ref: ${{ env.SIGN_PIPE_VER }}
 token: ${{ secrets.SIGN_GITHUB_TOKEN }}
 inputs: '{ "tag": "${{ github.ref }}", "skipRelease": false }'
+
+post_on_forum:
+runs-on: ubuntu-latest
+continue-on-error: true
+needs: [trigger_signer]
+steps:
+- uses: Codixer/discourse-topic-github-release-action@v2.0.1
+with:
+discourse-api-key: ${{ secrets.DISCOURSE_RELEASES_API_KEY }}
+discourse-base-url: https://forum.netbird.io
+discourse-author-username: NetBird
+discourse-category: 17
+discourse-tags:
+releases

@@ -134,6 +134,7 @@ jobs:
 NETBIRD_STORE_ENGINE_MYSQL_DSN: '${{ env.NETBIRD_STORE_ENGINE_MYSQL_DSN }}$'
 CI_NETBIRD_MGMT_IDP_SIGNKEY_REFRESH: false
 CI_NETBIRD_TURN_EXTERNAL_IP: "1.2.3.4"
+CI_NETBIRD_MGMT_DISABLE_DEFAULT_POLICY: false

 run: |
 set -x

@@ -180,6 +181,7 @@ jobs:
 grep -A 7 Relay management.json | egrep '"Secret": ".+"'
 grep DisablePromptLogin management.json | grep 'true'
 grep LoginFlag management.json | grep 0
+grep DisableDefaultPolicy management.json | grep "$CI_NETBIRD_MGMT_DISABLE_DEFAULT_POLICY"

 - name: Install modules
 run: go mod tidy

136

.goreleaser.yaml


@@ -149,6 +149,7 @@ nfpms:
 dockers:
 - image_templates:
 - netbirdio/netbird:{{ .Version }}-amd64
+- ghcr.io/netbirdio/netbird:{{ .Version }}-amd64
 ids:
 - netbird
 goarch: amd64

@@ -164,6 +165,7 @@ dockers:
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/netbird:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm64v8
 ids:
 - netbird
 goarch: arm64

@@ -175,10 +177,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/netbird:{{ .Version }}-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm
 ids:
 - netbird
 goarch: arm

@@ -191,11 +194,12 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"

 - image_templates:
 - netbirdio/netbird:{{ .Version }}-rootless-amd64
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-amd64
 ids:
 - netbird
 goarch: amd64

@@ -207,9 +211,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/netbird:{{ .Version }}-rootless-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm64v8
 ids:
 - netbird
 goarch: arm64

@@ -221,9 +227,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/netbird:{{ .Version }}-rootless-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm
 ids:
 - netbird
 goarch: arm

@@ -236,10 +244,12 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"

 - image_templates:
 - netbirdio/relay:{{ .Version }}-amd64
+- ghcr.io/netbirdio/relay:{{ .Version }}-amd64
 ids:
 - netbird-relay
 goarch: amd64

@@ -251,10 +261,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/relay:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm64v8
 ids:
 - netbird-relay
 goarch: arm64

@@ -266,10 +277,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/relay:{{ .Version }}-arm
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm
 ids:
 - netbird-relay
 goarch: arm

@@ -282,10 +294,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/signal:{{ .Version }}-amd64
+- ghcr.io/netbirdio/signal:{{ .Version }}-amd64
 ids:
 - netbird-signal
 goarch: amd64

@@ -297,10 +310,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/signal:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm64v8
 ids:
 - netbird-signal
 goarch: arm64

@@ -312,10 +326,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/signal:{{ .Version }}-arm
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm
 ids:
 - netbird-signal
 goarch: arm

@@ -328,10 +343,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/management:{{ .Version }}-amd64
+- ghcr.io/netbirdio/management:{{ .Version }}-amd64
 ids:
 - netbird-mgmt
 goarch: amd64

@@ -343,10 +359,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/management:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/management:{{ .Version }}-arm64v8
 ids:
 - netbird-mgmt
 goarch: arm64

@@ -358,10 +375,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/management:{{ .Version }}-arm
+- ghcr.io/netbirdio/management:{{ .Version }}-arm
 ids:
 - netbird-mgmt
 goarch: arm

@@ -374,10 +392,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/management:{{ .Version }}-debug-amd64
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-amd64
 ids:
 - netbird-mgmt
 goarch: amd64

@@ -389,10 +408,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/management:{{ .Version }}-debug-arm64v8
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-arm64v8
 ids:
 - netbird-mgmt
 goarch: arm64

@@ -404,11 +424,12 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"

 - image_templates:
 - netbirdio/management:{{ .Version }}-debug-arm
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-arm
 ids:
 - netbird-mgmt
 goarch: arm

@@ -421,10 +442,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/upload:{{ .Version }}-amd64
+- ghcr.io/netbirdio/upload:{{ .Version }}-amd64
 ids:
 - netbird-upload
 goarch: amd64

@@ -436,10 +458,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/upload:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm64v8
 ids:
 - netbird-upload
 goarch: arm64

@@ -451,10 +474,11 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 - image_templates:
 - netbirdio/upload:{{ .Version }}-arm
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm
 ids:
 - netbird-upload
 goarch: arm

@@ -467,7 +491,7 @@ dockers:
 - "--label=org.opencontainers.image.title={{.ProjectName}}"
 - "--label=org.opencontainers.image.version={{.Version}}"
 - "--label=org.opencontainers.image.revision={{.FullCommit}}"
-- "--label=org.opencontainers.image.version={{.Version}}"
+- "--label=org.opencontainers.image.source=https://github.com/netbirdio/{{.ProjectName}}"
 - "--label=maintainer=dev@netbird.io"
 docker_manifests:
 - name_template: netbirdio/netbird:{{ .Version }}

@@ -546,6 +570,84 @@ docker_manifests:
 - netbirdio/upload:{{ .Version }}-arm64v8
 - netbirdio/upload:{{ .Version }}-arm
 - netbirdio/upload:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/netbird:{{ .Version }}
+image_templates:
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/netbird:latest
+image_templates:
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/netbird:{{ .Version }}-rootless
+image_templates:
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-amd64
+
+- name_template: ghcr.io/netbirdio/netbird:rootless-latest
+image_templates:
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm64v8
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-arm
+- ghcr.io/netbirdio/netbird:{{ .Version }}-rootless-amd64
+
+- name_template: ghcr.io/netbirdio/relay:{{ .Version }}
+image_templates:
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm
+- ghcr.io/netbirdio/relay:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/relay:latest
+image_templates:
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/relay:{{ .Version }}-arm
+- ghcr.io/netbirdio/relay:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/signal:{{ .Version }}
+image_templates:
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm
+- ghcr.io/netbirdio/signal:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/signal:latest
+image_templates:
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/signal:{{ .Version }}-arm
+- ghcr.io/netbirdio/signal:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/management:{{ .Version }}
+image_templates:
+- ghcr.io/netbirdio/management:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/management:{{ .Version }}-arm
+- ghcr.io/netbirdio/management:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/management:latest
+image_templates:
+- ghcr.io/netbirdio/management:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/management:{{ .Version }}-arm
+- ghcr.io/netbirdio/management:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/management:debug-latest
+image_templates:
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-arm64v8
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-arm
+- ghcr.io/netbirdio/management:{{ .Version }}-debug-amd64
+
+- name_template: ghcr.io/netbirdio/upload:{{ .Version }}
+image_templates:
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm
+- ghcr.io/netbirdio/upload:{{ .Version }}-amd64
+
+- name_template: ghcr.io/netbirdio/upload:latest
+image_templates:
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm64v8
+- ghcr.io/netbirdio/upload:{{ .Version }}-arm
+- ghcr.io/netbirdio/upload:{{ .Version }}-amd64
 brews:
 - ids:
 - default

14

README.md


@@ -14,6 +14,9 @@

|

|||||||

<br>

|

<br>

|

||||||

<a href="https://docs.netbird.io/slack-url">

|

<a href="https://docs.netbird.io/slack-url">

|

||||||

<img src="https://img.shields.io/badge/slack-@netbird-red.svg?logo=slack"/>

|

<img src="https://img.shields.io/badge/slack-@netbird-red.svg?logo=slack"/>

|

||||||

|

</a>

|

||||||

|

<a href="https://forum.netbird.io">

|

||||||

|

<img src="https://img.shields.io/badge/community forum-@netbird-red.svg?logo=discourse"/>

|

||||||

</a>

|

</a>

|

||||||

<br>

|

<br>

|

||||||

<a href="https://gurubase.io/g/netbird">

|

<a href="https://gurubase.io/g/netbird">

|

||||||

@@ -29,13 +32,13 @@

|

|||||||

<br/>

|

<br/>

|

||||||

See <a href="https://netbird.io/docs/">Documentation</a>

|

See <a href="https://netbird.io/docs/">Documentation</a>

|

||||||

<br/>

|

<br/>

|

||||||

Join our <a href="https://docs.netbird.io/slack-url">Slack channel</a>

|

Join our <a href="https://docs.netbird.io/slack-url">Slack channel</a> or our <a href="https://forum.netbird.io">Community forum</a>

|

||||||

<br/>

|

<br/>

|

||||||

|

|

||||||

</strong>

|

</strong>

|

||||||

<br>

|

<br>

|

||||||

<a href="https://github.com/netbirdio/kubernetes-operator">

|

<a href="https://registry.terraform.io/providers/netbirdio/netbird/latest">

|

||||||

New: NetBird Kubernetes Operator

|

New: NetBird terraform provider

|

||||||

</a>

|

</a>

|

||||||

</p>

|

</p>

|

||||||

|

|

||||||

@@ -47,10 +50,9 @@

|

|||||||

|

|

||||||

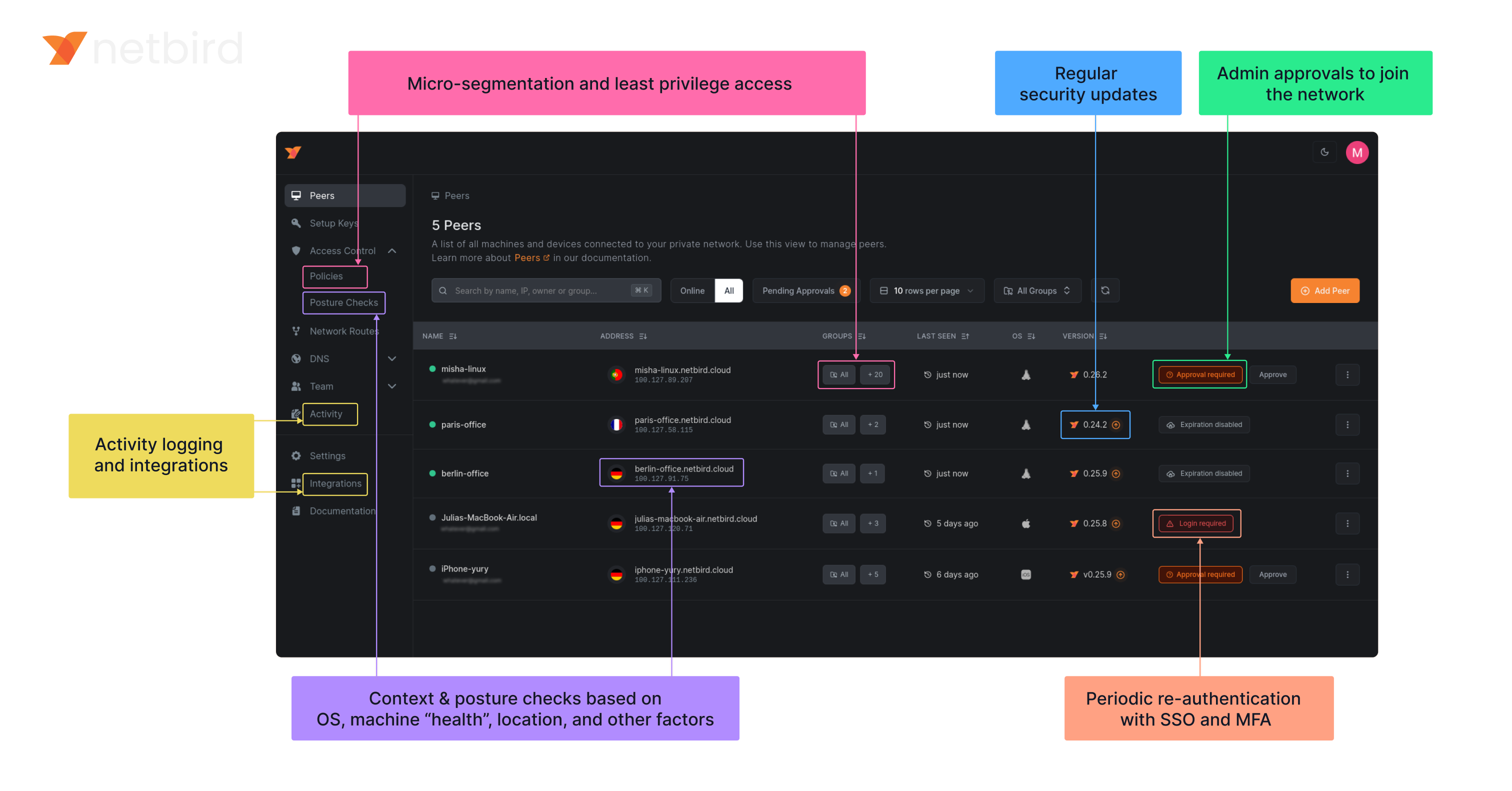

**Secure.** NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

|

**Secure.** NetBird enables secure remote access by applying granular access policies while allowing you to manage them intuitively from a single place. Works universally on any infrastructure.

|

||||||

|

|

||||||

### Open-Source Network Security in a Single Platform

|

### Open Source Network Security in a Single Platform

|

||||||

|

|

||||||

|

<img width="1188" alt="centralized-network-management 1" src="https://github.com/user-attachments/assets/c28cc8e4-15d2-4d2f-bb97-a6433db39d56" />

|

||||||

|

|

||||||

|

|

||||||

### NetBird on Lawrence Systems (Video)

|

### NetBird on Lawrence Systems (Video)

|

||||||

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

[](https://www.youtube.com/watch?v=Kwrff6h0rEw)

|

||||||

|

|||||||

@@ -1,6 +1,9 @@

|

|||||||

FROM alpine:3.21.3

|

FROM alpine:3.21.3

|

||||||

# iproute2: busybox doesn't display ip rules properly

|

# iproute2: busybox doesn't display ip rules properly

|

||||||

RUN apk add --no-cache ca-certificates ip6tables iproute2 iptables

|

RUN apk add --no-cache ca-certificates ip6tables iproute2 iptables

|

||||||

|

|

||||||

|

ARG NETBIRD_BINARY=netbird

|

||||||

|

COPY ${NETBIRD_BINARY} /usr/local/bin/netbird

|

||||||

|

|

||||||

ENV NB_FOREGROUND_MODE=true

|

ENV NB_FOREGROUND_MODE=true

|

||||||

ENTRYPOINT [ "/usr/local/bin/netbird","up"]

|

ENTRYPOINT [ "/usr/local/bin/netbird","up"]

|

||||||

COPY netbird /usr/local/bin/netbird

|

|

||||||

|

|||||||

@@ -1,6 +1,7 @@

|

|||||||

FROM alpine:3.21.0

|

FROM alpine:3.21.0

|

||||||

|

|

||||||

COPY netbird /usr/local/bin/netbird

|

ARG NETBIRD_BINARY=netbird

|

||||||

|

COPY ${NETBIRD_BINARY} /usr/local/bin/netbird

|

||||||

|

|

||||||

RUN apk add --no-cache ca-certificates \

|

RUN apk add --no-cache ca-certificates \

|

||||||

&& adduser -D -h /var/lib/netbird netbird

|

&& adduser -D -h /var/lib/netbird netbird

|

||||||

|

|||||||

@@ -59,10 +59,14 @@ type Client struct {

|

|||||||

deviceName string

|

deviceName string

|

||||||

uiVersion string

|

uiVersion string

|

||||||

networkChangeListener listener.NetworkChangeListener

|

networkChangeListener listener.NetworkChangeListener

|

||||||

|

|

||||||

|

connectClient *internal.ConnectClient

|

||||||

}

|

}

|

||||||

|

|

||||||

// NewClient instantiate a new Client

|

// NewClient instantiate a new Client

|

||||||

func NewClient(cfgFile, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

func NewClient(cfgFile string, androidSDKVersion int, deviceName string, uiVersion string, tunAdapter TunAdapter, iFaceDiscover IFaceDiscover, networkChangeListener NetworkChangeListener) *Client {

|

||||||

|

execWorkaround(androidSDKVersion)

|

||||||

|

|

||||||

net.SetAndroidProtectSocketFn(tunAdapter.ProtectSocket)

|

net.SetAndroidProtectSocketFn(tunAdapter.ProtectSocket)

|

||||||

return &Client{

|

return &Client{

|

||||||

cfgFile: cfgFile,

|

cfgFile: cfgFile,

|

||||||

@@ -106,8 +110,8 @@ func (c *Client) Run(urlOpener URLOpener, dns *DNSList, dnsReadyListener DnsRead

|

|||||||

|

|

||||||

// todo do not throw error in case of cancelled context

|

// todo do not throw error in case of cancelled context

|

||||||

ctx = internal.CtxInitState(ctx)

|

ctx = internal.CtxInitState(ctx)

|

||||||

connectClient := internal.NewConnectClient(ctx, cfg, c.recorder)

|

c.connectClient = internal.NewConnectClient(ctx, cfg, c.recorder)

|

||||||

return connectClient.RunOnAndroid(c.tunAdapter, c.iFaceDiscover, c.networkChangeListener, dns.items, dnsReadyListener)

|

return c.connectClient.RunOnAndroid(c.tunAdapter, c.iFaceDiscover, c.networkChangeListener, dns.items, dnsReadyListener)

|

||||||

}

|

}

|

||||||

|

|

||||||

// RunWithoutLogin we apply this type of run function when the backed has been started without UI (i.e. after reboot).

|

// RunWithoutLogin we apply this type of run function when the backed has been started without UI (i.e. after reboot).

|

||||||

@@ -132,8 +136,8 @@ func (c *Client) RunWithoutLogin(dns *DNSList, dnsReadyListener DnsReadyListener

|

|||||||

|

|

||||||

// todo do not throw error in case of cancelled context

|

// todo do not throw error in case of cancelled context

|

||||||

ctx = internal.CtxInitState(ctx)

|

ctx = internal.CtxInitState(ctx)

|

||||||

connectClient := internal.NewConnectClient(ctx, cfg, c.recorder)

|

c.connectClient = internal.NewConnectClient(ctx, cfg, c.recorder)

|

||||||

return connectClient.RunOnAndroid(c.tunAdapter, c.iFaceDiscover, c.networkChangeListener, dns.items, dnsReadyListener)

|

return c.connectClient.RunOnAndroid(c.tunAdapter, c.iFaceDiscover, c.networkChangeListener, dns.items, dnsReadyListener)

|

||||||

}

|

}

|

||||||

|

|

||||||

// Stop the internal client and free the resources

|

// Stop the internal client and free the resources

|

||||||

@@ -174,6 +178,55 @@ func (c *Client) PeersList() *PeerInfoArray {

|

|||||||

return &PeerInfoArray{items: peerInfos}

|

return &PeerInfoArray{items: peerInfos}

|

||||||

}

|

}

|

||||||

|

|

||||||

|

func (c *Client) Networks() *NetworkArray {

|

||||||

|

if c.connectClient == nil {

|

||||||

|

log.Error("not connected")

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

engine := c.connectClient.Engine()

|

||||||

|

if engine == nil {

|

||||||

|

log.Error("could not get engine")

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

routeManager := engine.GetRouteManager()

|

||||||

|

if routeManager == nil {

|

||||||

|

log.Error("could not get route manager")

|

||||||

|

return nil

|

||||||

|

}

|

||||||

|

|

||||||

|

networkArray := &NetworkArray{

|

||||||

|

items: make([]Network, 0),

|

||||||

|

}

|

||||||

|

|

||||||

|

for id, routes := range routeManager.GetClientRoutesWithNetID() {

|

||||||

|

if len(routes) == 0 {

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

|

||||||

|

r := routes[0]

|

||||||

|

netStr := r.Network.String()

|

||||||

|

if r.IsDynamic() {

|

||||||

|

netStr = r.Domains.SafeString()

|

||||||

|

}

|

||||||

|

|

||||||

|

peer, err := c.recorder.GetPeer(routes[0].Peer)

|

||||||

|

if err != nil {

|

||||||

|

log.Errorf("could not get peer info for %s: %v", routes[0].Peer, err)

|

||||||

|

continue

|

||||||

|

}

|

||||||

|

network := Network{

|

||||||

|

Name: string(id),

|

||||||

|

Network: netStr,

|

||||||

|

Peer: peer.FQDN,

|

||||||

|

Status: peer.ConnStatus.String(),

|

||||||

|

}

|

||||||

|

networkArray.Add(network)

|

||||||

|

}

|

||||||

|

return networkArray

|

||||||

|

}

|

||||||

|

|

||||||

// OnUpdatedHostDNS update the DNS servers addresses for root zones

|

// OnUpdatedHostDNS update the DNS servers addresses for root zones

|

||||||

func (c *Client) OnUpdatedHostDNS(list *DNSList) error {

|

func (c *Client) OnUpdatedHostDNS(list *DNSList) error {

|

||||||

dnsServer, err := dns.GetServerDns()

|

dnsServer, err := dns.GetServerDns()

|

||||||

|

|||||||

26

client/android/exec.go

Normal file


@@ -0,0 +1,26 @@

|

|||||||

|

//go:build android

|

||||||

|

|

||||||

|

package android

|

||||||

|

|

||||||

|

import (

|

||||||

|

"fmt"

|

||||||

|

_ "unsafe"

|

||||||

|

)

|

||||||

|

|

||||||

|

// https://github.com/golang/go/pull/69543/commits/aad6b3b32c81795f86bc4a9e81aad94899daf520

|

||||||

|

// In Android version 11 and earlier, pidfd-related system calls

|

||||||

|

// are not allowed by the seccomp policy, which causes crashes due

|

||||||

|

// to SIGSYS signals.

|

||||||

|

|

||||||

|

//go:linkname checkPidfdOnce os.checkPidfdOnce

|

||||||

|

var checkPidfdOnce func() error

|

||||||

|

|

||||||

|

func execWorkaround(androidSDKVersion int) {

|

||||||

|

if androidSDKVersion > 30 { // above Android 11

|

||||||

|

return

|

||||||

|

}

|

||||||

|

|

||||||

|

checkPidfdOnce = func() error {

|

||||||

|

return fmt.Errorf("unsupported Android version")

|

||||||

|

}

|

||||||

|

}

|

||||||
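Note on `execWorkaround`: the gating logic can be illustrated without `go:linkname`. The sketch below is only an approximation under stated assumptions: `probe`, `errUnsupported`, and `applyWorkaround` are hypothetical names, and `probe` is an ordinary package-level variable rather than the linked `os.checkPidfdOnce`. It shows the same idea of replacing a capability check with a failing stub on SDK level 30 (Android 11) and below.

```go
package main

import (
	"errors"
	"fmt"
)

// probe stands in for a runtime capability check (hypothetical; the real
// change links against os.checkPidfdOnce instead).
var probe = func() error { return nil }

// errUnsupported is returned by the stub installed on old SDK levels.
var errUnsupported = errors.New("unsupported Android version")

// applyWorkaround replaces probe with a failing stub when the SDK level
// is 30 (Android 11) or lower, and leaves it untouched otherwise.
func applyWorkaround(sdkVersion int) {
	if sdkVersion > 30 { // above Android 11: nothing to do
		return
	}
	probe = func() error { return errUnsupported }
}

func main() {
	applyWorkaround(33)
	fmt.Println(probe() == nil) // probe is untouched on newer SDK levels

	applyWorkaround(29)
	fmt.Println(probe() == errUnsupported) // probe now reports "unsupported"
}
```

Returning an error from the stub, instead of calling the real check, is what keeps the forbidden system calls from ever being issued in this sketch.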

27

client/android/networks.go

Normal file


@@ -0,0 +1,27 @@

|

|||||||

|

//go:build android

|

||||||

|

|

||||||

|

package android

|

||||||

|

|

||||||

|

type Network struct {

|

||||||

|

Name string

|

||||||

|

Network string

|

||||||

|

Peer string

|

||||||

|

Status string

|

||||||

|

}

|

||||||

|

|

||||||

|

type NetworkArray struct {

|

||||||

|

items []Network

|

||||||

|

}

|

||||||

|

|

||||||

|

func (array *NetworkArray) Add(s Network) *NetworkArray {

|

||||||

|

array.items = append(array.items, s)

|

||||||

|

return array

|

||||||

|

}

|

||||||

|

|

||||||

|

func (array *NetworkArray) Get(i int) *Network {

|

||||||

|

return &array.items[i]

|

||||||

|

}

|

||||||

|

|

||||||

|

func (array *NetworkArray) Size() int {

|

||||||

|

return len(array.items)

|

||||||

|

}

|

||||||
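Note on `NetworkArray`: callers are expected to walk the wrapper with `Size()` and `Get(i)`, since the slice itself is unexported. A minimal sketch of such a caller follows. The types are copied from the file above without the `android` build tag, while `describe` and the sample values are hypothetical. The nil check reflects that `Client.Networks()` returns `nil` when the client is not connected.

```go
package main

import "fmt"

// Network and NetworkArray mirror the definitions in networks.go.
type Network struct {
	Name    string
	Network string
	Peer    string
	Status  string
}

type NetworkArray struct {
	items []Network
}

func (array *NetworkArray) Add(s Network) *NetworkArray {
	array.items = append(array.items, s)
	return array
}

func (array *NetworkArray) Get(i int) *Network {
	return &array.items[i]
}

func (array *NetworkArray) Size() int {
	return len(array.items)
}

// describe is a hypothetical helper that formats every entry. It guards
// against a nil array and only indexes within [0, Size()), because Get
// panics on an out-of-range index.
func describe(array *NetworkArray) []string {
	if array == nil {
		return nil
	}
	out := make([]string, 0, array.Size())
	for i := 0; i < array.Size(); i++ {
		n := array.Get(i)
		out = append(out, fmt.Sprintf("%s %s via %s (%s)", n.Name, n.Network, n.Peer, n.Status))
	}
	return out
}

func main() {
	arr := (&NetworkArray{}).Add(Network{
		Name:    "office",
		Network: "10.0.0.0/24",
		Peer:    "gw.example",
		Status:  "Connected",
	})
	for _, line := range describe(arr) {
		fmt.Println(line)
	}
}
```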

@@ -7,30 +7,23 @@ type PeerInfo struct {

|

|||||||

ConnStatus string // Todo replace to enum

|

ConnStatus string // Todo replace to enum

|

||||||

}

|

}

|

||||||

|

|

||||||

// PeerInfoCollection made for Java layer to get non default types as collection

|

// PeerInfoArray is a wrapper of []PeerInfo

|

||||||

type PeerInfoCollection interface {

|

|

||||||

Add(s string) PeerInfoCollection

|

|

||||||

Get(i int) string

|

|

||||||

Size() int

|

|

||||||

}

|

|

||||||

|

|

||||||

// PeerInfoArray is the implementation of the PeerInfoCollection

|

|

||||||

type PeerInfoArray struct {

|

type PeerInfoArray struct {

|

||||||

items []PeerInfo

|

items []PeerInfo

|

||||||

}

|

}

|

||||||

|

|

||||||

// Add new PeerInfo to the collection

|

// Add new PeerInfo to the collection

|

||||||

func (array PeerInfoArray) Add(s PeerInfo) PeerInfoArray {

|

func (array *PeerInfoArray) Add(s PeerInfo) *PeerInfoArray {

|

||||||

array.items = append(array.items, s)

|

array.items = append(array.items, s)

|

||||||

return array

|

return array

|

||||||

}

|

}

|

||||||

|

|

||||||

// Get return an element of the collection

|

// Get return an element of the collection

|

||||||

func (array PeerInfoArray) Get(i int) *PeerInfo {

|

func (array *PeerInfoArray) Get(i int) *PeerInfo {

|

||||||

return &array.items[i]

|

return &array.items[i]

|

||||||

}

|

}

|

||||||

|

|

||||||

// Size return with the size of the collection

|

// Size return with the size of the collection

|

||||||

func (array PeerInfoArray) Size() int {

|

func (array *PeerInfoArray) Size() int {

|

||||||

return len(array.items)

|

return len(array.items)

|

||||||

}

|

}

|

||||||
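Note on the receiver change: with value receivers, `Add` appends to a copy of the struct, so a caller that ignores the return value never sees the new element. The sketch below contrasts the two forms. `item`, `valueArray`, and `pointerArray` are hypothetical stand-ins for `PeerInfo` and the old and new `PeerInfoArray`.

```go
package main

import "fmt"

// item stands in for PeerInfo; the field is illustrative only.
type item struct{ Name string }

// valueArray uses value receivers, like the old PeerInfoArray.
type valueArray struct{ items []item }

// Add appends to a copy of the struct; the caller's value is unchanged
// unless it reassigns the returned copy.
func (a valueArray) Add(s item) valueArray {
	a.items = append(a.items, s)
	return a
}

func (a valueArray) Size() int { return len(a.items) }

// pointerArray uses pointer receivers, like the new PeerInfoArray.
type pointerArray struct{ items []item }

// Add mutates the shared struct and returns it for chaining.
func (a *pointerArray) Add(s item) *pointerArray {
	a.items = append(a.items, s)
	return a
}

func (a *pointerArray) Size() int { return len(a.items) }

func main() {
	v := valueArray{}
	v.Add(item{Name: "peer-a"}) // result discarded, v stays empty
	fmt.Println(v.Size())       // 0

	p := &pointerArray{}
	p.Add(item{Name: "peer-a"})
	fmt.Println(p.Size()) // 1
}
```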

|

|||||||

@@ -4,12 +4,12 @@ import (

|

|||||||

"github.com/netbirdio/netbird/client/internal"

|

"github.com/netbirdio/netbird/client/internal"

|

||||||

)

|

)

|

||||||

|

|

||||||

// Preferences export a subset of the internal config for gomobile

|

// Preferences exports a subset of the internal config for gomobile

|

||||||

type Preferences struct {

|

type Preferences struct {

|

||||||

configInput internal.ConfigInput

|

configInput internal.ConfigInput

|

||||||

}

|

}

|

||||||

|

|

||||||

// NewPreferences create new Preferences instance

|

// NewPreferences creates a new Preferences instance

|

||||||

func NewPreferences(configPath string) *Preferences {

|

func NewPreferences(configPath string) *Preferences {

|

||||||

ci := internal.ConfigInput{

|

ci := internal.ConfigInput{

|

||||||

ConfigPath: configPath,

|

ConfigPath: configPath,

|

||||||

@@ -17,7 +17,7 @@ func NewPreferences(configPath string) *Preferences {

|

|||||||

return &Preferences{ci}

|

return &Preferences{ci}

|

||||||

}

|

}

|

||||||

|

|

||||||

// GetManagementURL read url from config file

|

// GetManagementURL reads URL from config file

|

||||||

func (p *Preferences) GetManagementURL() (string, error) {

|

func (p *Preferences) GetManagementURL() (string, error) {

|

||||||

if p.configInput.ManagementURL != "" {

|

if p.configInput.ManagementURL != "" {

|

||||||

return p.configInput.ManagementURL, nil

|

return p.configInput.ManagementURL, nil

|

||||||

@@ -30,12 +30,12 @@ func (p *Preferences) GetManagementURL() (string, error) {

|

|||||||

return cfg.ManagementURL.String(), err

|

return cfg.ManagementURL.String(), err

|

||||||

}

|

}

|

||||||

|

|

||||||

// SetManagementURL store the given url and wait for commit

|

// SetManagementURL stores the given URL and waits for commit

|

||||||

func (p *Preferences) SetManagementURL(url string) {

|

func (p *Preferences) SetManagementURL(url string) {

|

||||||

p.configInput.ManagementURL = url

|

p.configInput.ManagementURL = url

|

||||||

}

|

}

|

||||||

|

|

||||||

// GetAdminURL read url from config file

|

// GetAdminURL reads URL from config file

|

||||||

func (p *Preferences) GetAdminURL() (string, error) {

|

func (p *Preferences) GetAdminURL() (string, error) {

|

||||||

if p.configInput.AdminURL != "" {

|

if p.configInput.AdminURL != "" {

|

||||||

return p.configInput.AdminURL, nil

|

return p.configInput.AdminURL, nil

|

||||||

@@ -48,12 +48,12 @@ func (p *Preferences) GetAdminURL() (string, error) {

|

|||||||

return cfg.AdminURL.String(), err

|

return cfg.AdminURL.String(), err

|

||||||

}

|

}

|

||||||

|

|

||||||

// SetAdminURL store the given url and wait for commit

|

// SetAdminURL stores the given URL and waits for commit

|

||||||

func (p *Preferences) SetAdminURL(url string) {

|

func (p *Preferences) SetAdminURL(url string) {

|

||||||

p.configInput.AdminURL = url

|

p.configInput.AdminURL = url

|

||||||

}

|

}

|

||||||

|

|

||||||

// GetPreSharedKey read preshared key from config file

|

// GetPreSharedKey reads pre-shared key from config file

|

||||||

func (p *Preferences) GetPreSharedKey() (string, error) {

|

func (p *Preferences) GetPreSharedKey() (string, error) {

|

||||||

if p.configInput.PreSharedKey != nil {

|

if p.configInput.PreSharedKey != nil {

|

||||||

return *p.configInput.PreSharedKey, nil

|

return *p.configInput.PreSharedKey, nil

|

||||||

@@ -66,12 +66,160 @@ func (p *Preferences) GetPreSharedKey() (string, error) {

|

|||||||

return cfg.PreSharedKey, err

|

return cfg.PreSharedKey, err

|

||||||

}

|

}

|

||||||

|

|

||||||

// SetPreSharedKey store the given key and wait for commit

|

// SetPreSharedKey stores the given key and waits for commit

|

||||||

func (p *Preferences) SetPreSharedKey(key string) {

|

func (p *Preferences) SetPreSharedKey(key string) {

|

||||||

p.configInput.PreSharedKey = &key

|

p.configInput.PreSharedKey = &key

|

||||||

}

|

}

|

||||||

|

|

||||||

// Commit write out the changes into config file

|

// SetRosenpassEnabled stores whether Rosenpass is enabled

|

||||||

|

func (p *Preferences) SetRosenpassEnabled(enabled bool) {

|

||||||

|

p.configInput.RosenpassEnabled = &enabled

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetRosenpassEnabled reads Rosenpass enabled status from config file

|

||||||

|

func (p *Preferences) GetRosenpassEnabled() (bool, error) {

|

||||||

|

if p.configInput.RosenpassEnabled != nil {

|

||||||

|

return *p.configInput.RosenpassEnabled, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.RosenpassEnabled, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetRosenpassPermissive stores the given permissive setting and waits for commit

|

||||||

|

func (p *Preferences) SetRosenpassPermissive(permissive bool) {

|

||||||

|

p.configInput.RosenpassPermissive = &permissive

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetRosenpassPermissive reads Rosenpass permissive setting from config file

|

||||||

|

func (p *Preferences) GetRosenpassPermissive() (bool, error) {

|

||||||

|

if p.configInput.RosenpassPermissive != nil {

|

||||||

|

return *p.configInput.RosenpassPermissive, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.RosenpassPermissive, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetDisableClientRoutes reads disable client routes setting from config file

|

||||||

|

func (p *Preferences) GetDisableClientRoutes() (bool, error) {

|

||||||

|

if p.configInput.DisableClientRoutes != nil {

|

||||||

|

return *p.configInput.DisableClientRoutes, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.DisableClientRoutes, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetDisableClientRoutes stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetDisableClientRoutes(disable bool) {

|

||||||

|

p.configInput.DisableClientRoutes = &disable

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetDisableServerRoutes reads disable server routes setting from config file

|

||||||

|

func (p *Preferences) GetDisableServerRoutes() (bool, error) {

|

||||||

|

if p.configInput.DisableServerRoutes != nil {

|

||||||

|

return *p.configInput.DisableServerRoutes, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.DisableServerRoutes, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetDisableServerRoutes stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetDisableServerRoutes(disable bool) {

|

||||||

|

p.configInput.DisableServerRoutes = &disable

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetDisableDNS reads disable DNS setting from config file

|

||||||

|

func (p *Preferences) GetDisableDNS() (bool, error) {

|

||||||

|

if p.configInput.DisableDNS != nil {

|

||||||

|

return *p.configInput.DisableDNS, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.DisableDNS, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetDisableDNS stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetDisableDNS(disable bool) {

|

||||||

|

p.configInput.DisableDNS = &disable

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetDisableFirewall reads disable firewall setting from config file

|

||||||

|

func (p *Preferences) GetDisableFirewall() (bool, error) {

|

||||||

|

if p.configInput.DisableFirewall != nil {

|

||||||

|

return *p.configInput.DisableFirewall, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.DisableFirewall, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetDisableFirewall stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetDisableFirewall(disable bool) {

|

||||||

|

p.configInput.DisableFirewall = &disable

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetServerSSHAllowed reads server SSH allowed setting from config file

|

||||||

|

func (p *Preferences) GetServerSSHAllowed() (bool, error) {

|

||||||

|

if p.configInput.ServerSSHAllowed != nil {

|

||||||

|

return *p.configInput.ServerSSHAllowed, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

if cfg.ServerSSHAllowed == nil {

|

||||||

|

// Default to false for security on Android

|

||||||

|

return false, nil

|

||||||

|

}

|

||||||

|

return *cfg.ServerSSHAllowed, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetServerSSHAllowed stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetServerSSHAllowed(allowed bool) {

|

||||||

|

p.configInput.ServerSSHAllowed = &allowed

|

||||||

|

}

|

||||||

|

|

||||||

|

// GetBlockInbound reads block inbound setting from config file

|

||||||

|

func (p *Preferences) GetBlockInbound() (bool, error) {

|

||||||

|

if p.configInput.BlockInbound != nil {

|

||||||

|

return *p.configInput.BlockInbound, nil

|

||||||

|

}

|

||||||

|

|

||||||

|

cfg, err := internal.ReadConfig(p.configInput.ConfigPath)

|

||||||

|

if err != nil {

|

||||||

|

return false, err

|

||||||

|

}

|

||||||

|

return cfg.BlockInbound, err

|

||||||

|

}

|

||||||

|

|

||||||

|

// SetBlockInbound stores the given value and waits for commit

|

||||||

|

func (p *Preferences) SetBlockInbound(block bool) {

|

||||||

|

p.configInput.BlockInbound = &block

|

||||||

|

}

|

||||||

|

|

||||||

|

// Commit writes out the changes to the config file

|

||||||

func (p *Preferences) Commit() error {

|

func (p *Preferences) Commit() error {

|

||||||

_, err := internal.UpdateOrCreateConfig(p.configInput)

|

_, err := internal.UpdateOrCreateConfig(p.configInput)

|

||||||

return err

|

return err

|

||||||
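Note on the getter/setter pattern: each setter records a pending value behind a pointer, each getter prefers the pending value and otherwise falls back to the stored config, and `Commit` persists whatever is pending. The sketch below reproduces that flow for a single field under stated assumptions: `storedConfig`, `pendingInput`, `prefs`, and `errNoConfig` are hypothetical, and an in-memory struct replaces `internal.ReadConfig` and `internal.UpdateOrCreateConfig`.

```go
package main

import (
	"errors"
	"fmt"
)

// storedConfig stands in for the persisted config file (hypothetical).
type storedConfig struct{ DisableDNS bool }

// pendingInput holds uncommitted changes; a nil pointer means "not changed".
type pendingInput struct{ DisableDNS *bool }

// prefs pairs pending changes with the stored config. A nil stored config
// simulates a missing config file.
type prefs struct {
	pending pendingInput
	stored  *storedConfig
}

var errNoConfig = errors.New("config not found")

// GetDisableDNS returns the pending value if one was set, otherwise the
// stored value, otherwise an error.
func (p *prefs) GetDisableDNS() (bool, error) {
	if p.pending.DisableDNS != nil {
		return *p.pending.DisableDNS, nil
	}
	if p.stored == nil {
		return false, errNoConfig
	}
	return p.stored.DisableDNS, nil
}

// SetDisableDNS records the value without persisting it.
func (p *prefs) SetDisableDNS(disable bool) {
	p.pending.DisableDNS = &disable
}

// Commit writes pending values into the stored config, creating it if needed.
func (p *prefs) Commit() {
	if p.stored == nil {
		p.stored = &storedConfig{}
	}
	if p.pending.DisableDNS != nil {
		p.stored.DisableDNS = *p.pending.DisableDNS
	}
}

func main() {
	p := &prefs{}
	_, err := p.GetDisableDNS()
	fmt.Println(err) // config not found

	p.SetDisableDNS(true)
	v, _ := p.GetDisableDNS()
	fmt.Println(v) // true, served from the pending value

	p.Commit()
	fmt.Println(p.stored.DisableDNS) // true, now persisted
}
```

Using a pointer for each pending field lets the getter distinguish "explicitly set to false" from "never set", which a plain `bool` cannot express.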

|

|||||||

@@ -17,10 +17,18 @@ import (

|

|||||||

"github.com/netbirdio/netbird/client/server"

|

"github.com/netbirdio/netbird/client/server"

|

||||||

nbstatus "github.com/netbirdio/netbird/client/status"

|